v1.0.

Yayyyyyyyyy!!!!!!

Check it out under its new home, gatographql.com.

]]>v1.0.

Yayyyyyyyyy!!!!!!

Check it out under its new home, gatographql.com.

]]>

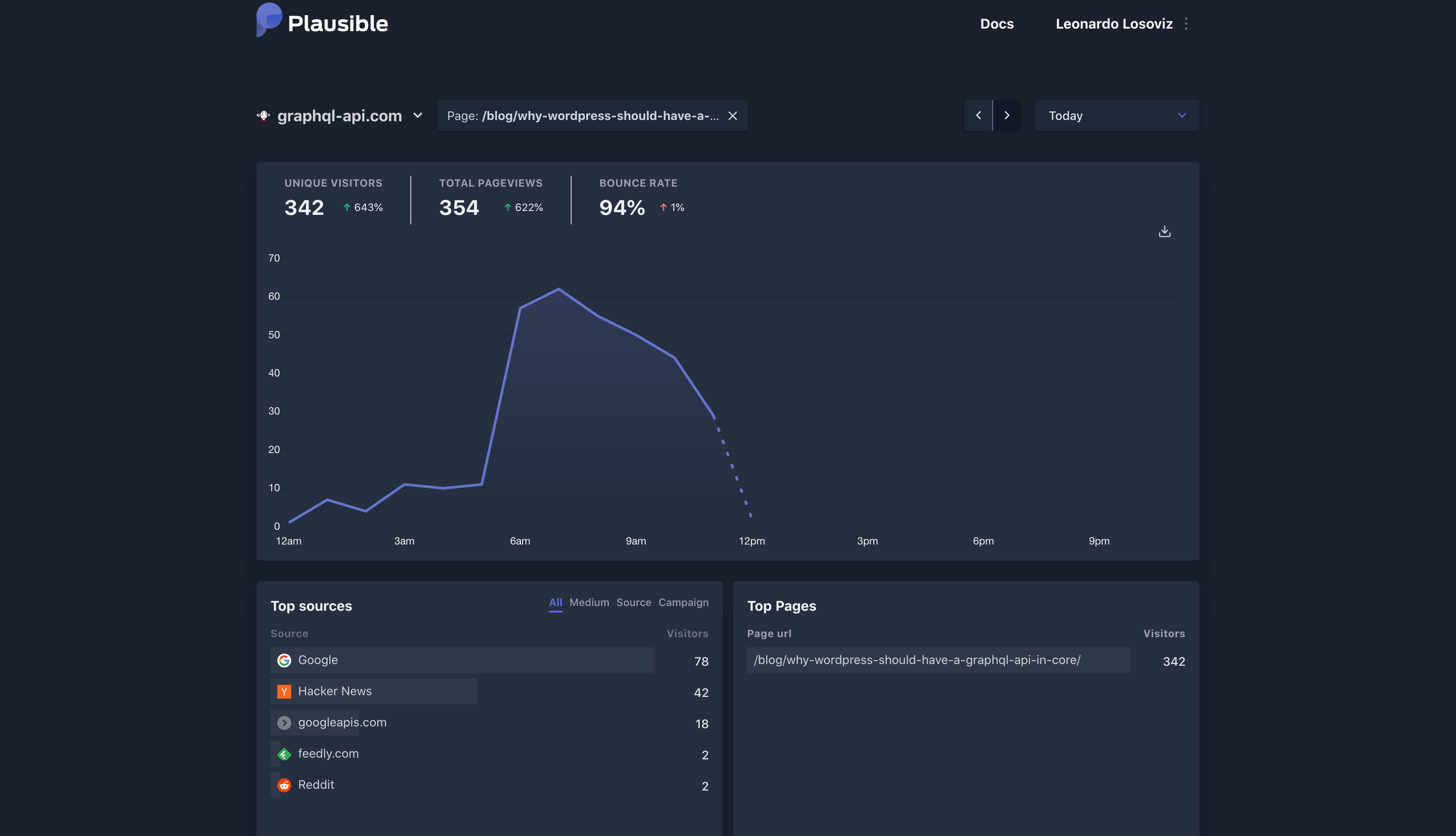

I noticed because, as I woke up on Sunday morning and I checked my traffic, I saw a wonderful spike:

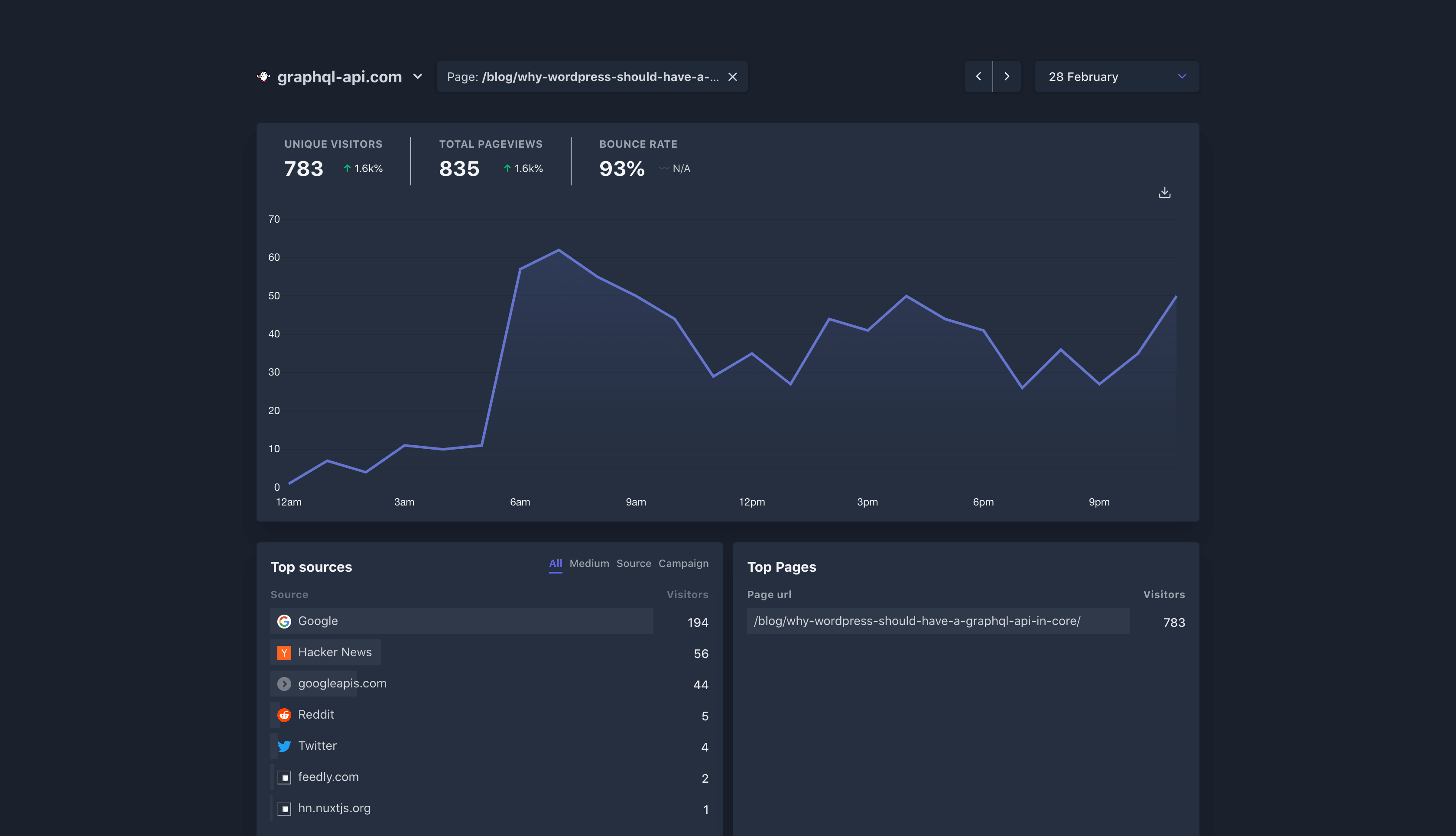

By the end of the day, that blog post had brought in near 800 visitors (and they kept arriving the following day):

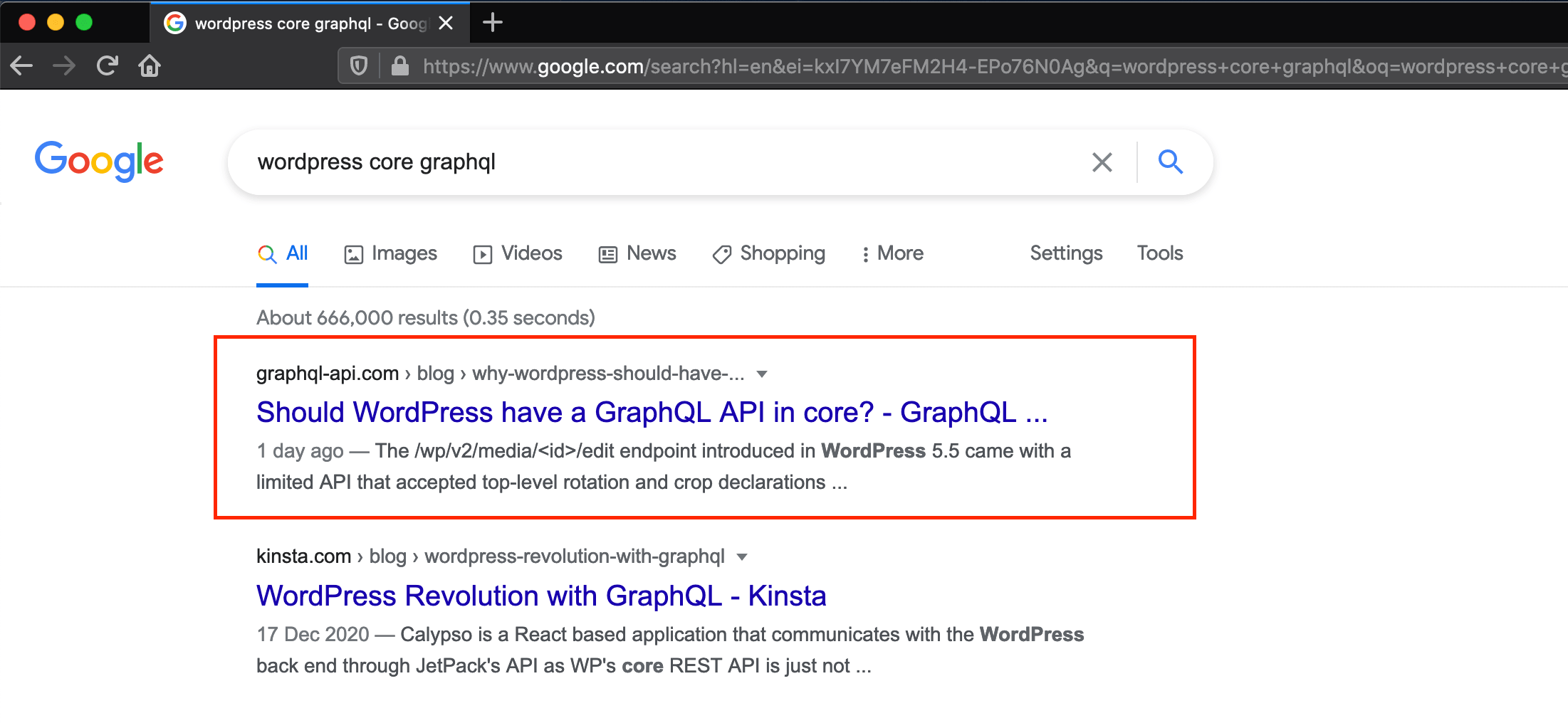

I believe this is the first time I reach the top of Google, when searching for some rather generic terms (say, without mentioning my name as part of the search).

I must admit, making it to the top of Google feels good!

Ok, so this is how it happened.

On Saturday, I wrote the blog post 🛠 Should WordPress have a GraphQL API in core? for my plugin's blog.

I had the blog post's URL, why-wordpress-should-have-a-graphql-api-in-core, contains those keywords I wanted the post to be associated with:

I then promoted the post on Reddit's /r/php channel, and shared it on Hacker News.

For HN, I posted it under the special section "Show HN", because the number of articles submitted there is lower, hence each post remains visible longer (before falling out of the first 30 results shown on the page).

The traffic on the "new" section is low, but really high on the front page. Then, the intention is to get the article upvoted, so it will make it to the Hacker News' front page (at least the one for Show HN).

A way to improve one's chances is to use a compelling title. Then, instead of using the blog post's actual title ("Should WordPress have a GraphQL API in core?"), I chose one more suitable to the Show HN ethos: "GraphQL API in WordPress core would look like this".

I crossed my fingers that the article would get upvoted, and went to sleep.

I woke up, and saw to my delight that the article got upvoted, and it made it to Show HN's front page. Yay!

I wish I had taken a screenshot. I did not. But it looked like this:

Google (I believe) picked it up from there, and the traffic then went through the roof 🚀

I was lucky this time, because people upvoting my article is out of my control. However, this is part of a long-term strategy, to have my plugin the GraphQL API for WordPress feature higher on Google.

That search result is actually a bit esoteric: "wordpress core graphql". Who adds the word "core"?

This is a step in between. The actual objective is to feature higher when searching for "wordpress graphql". And in this concern, my plugin is not doing great yet, but it's been improving!

When Googling "wordpress graphql", my plugin now shows on the homepage! (This was not the case as far as last week). It shows on the 7th position and, in addition, the 4th and 6th positions also concern my plugin:

WPGraphQL is currently dominating results for this search, taking positions 1, 2 and 3, which are the ones that truly matter.

But I'm coming behind, and will battle my way up 😜

It took so long, because when you're doing everything on your own (which is my case, I don't have a team), you literally need to do everything. So to make this website, I had to learn so many things:

And I even had to design the logo (with help from my wife):

It looks not bad, right? 😅

And all of that while still developing the plugin, and writing documentation, so that users can start playing with it immediately.

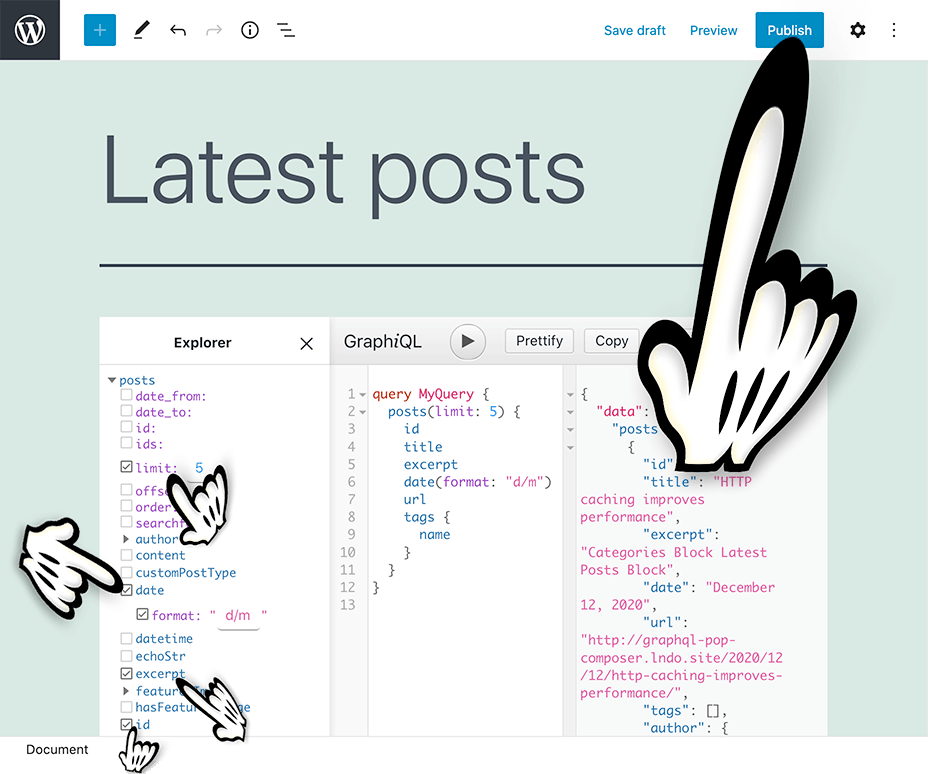

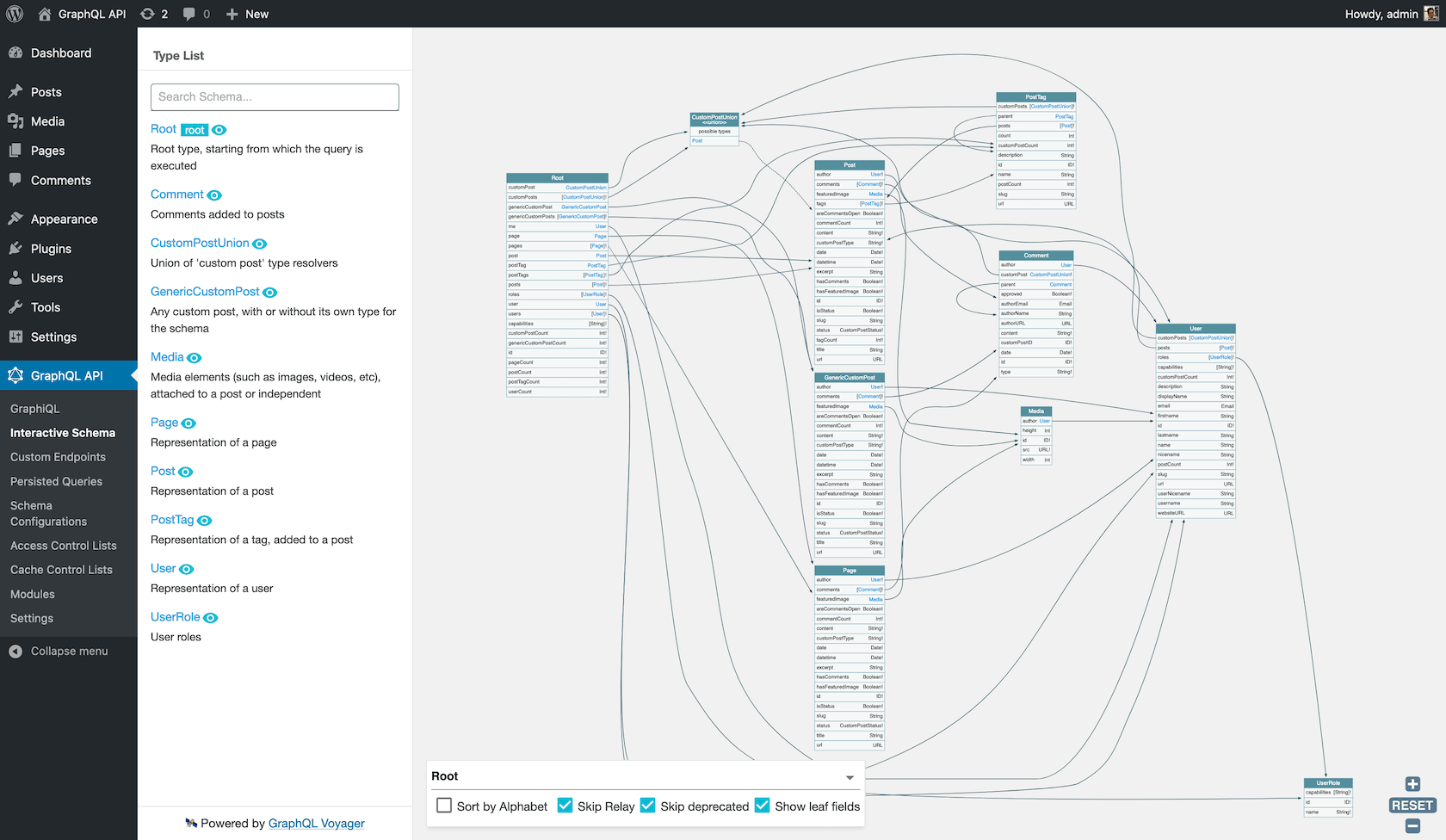

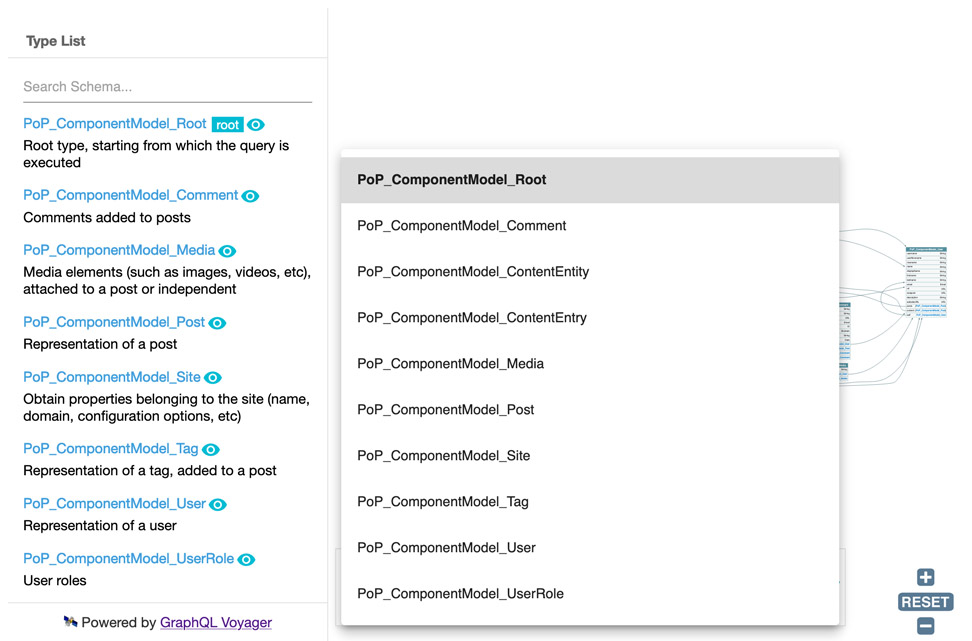

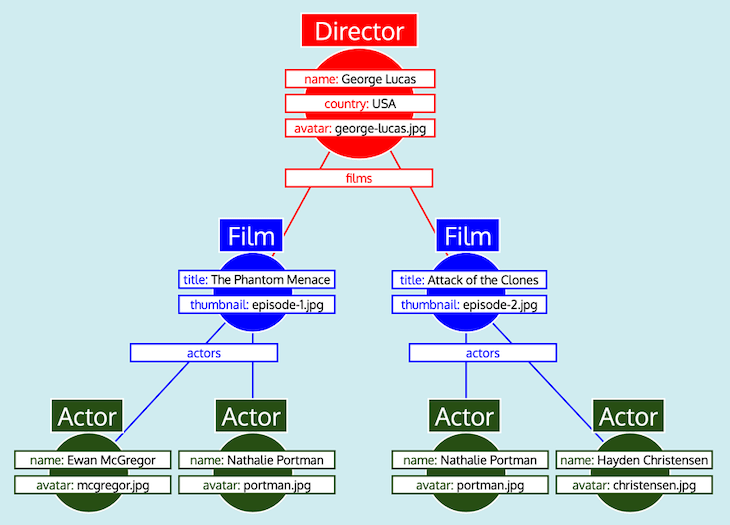

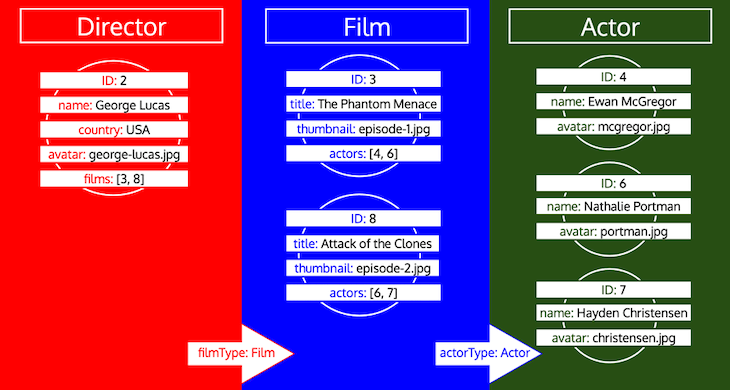

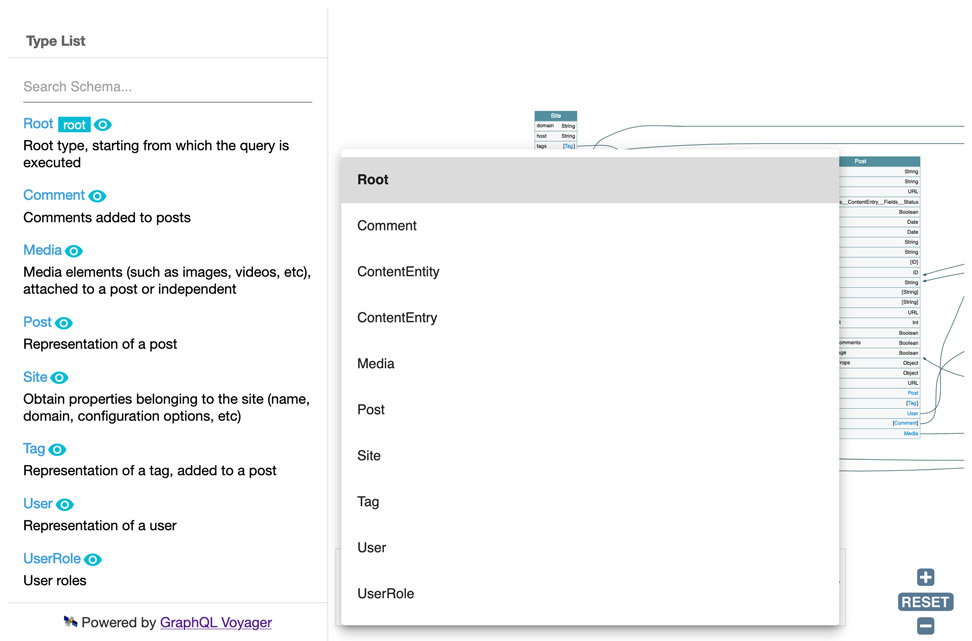

But it's been worth it. I'm very pleased with how it looks. Check this image for instance, added to the homepage:

I believe it's able to convey how powerful the product is, which was my goal all along.

Now, on to the next challenge: how to get people to visit it 🙀

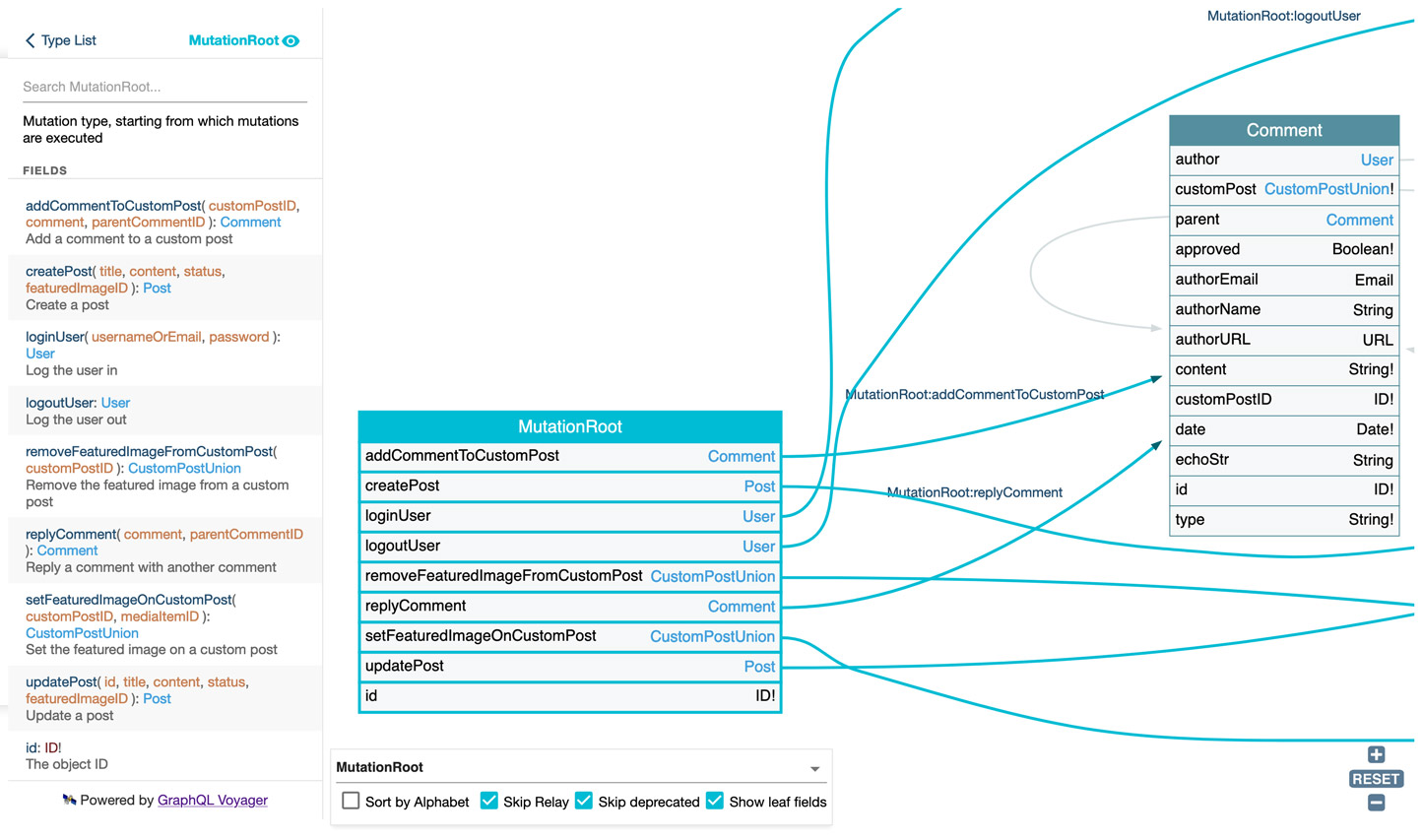

]]>I released version 0.7 of the GraphQL API for WordPress, supporting mutations, and nested mutations! 🎉

Here is a tour showing the new additions.

GraphQL mutations enable to modify data (i.e. perform side-effect) through the query.

Mutations was the big item still missing from the GraphQL API. Now that it's been added, I can claim that this GraphQL server is pretty much feature-complete (only subscriptions are missing, and I'm already thinking on how to add them).

Let's check an example on adding a comment. But first, we need to execute another mutation to log you in, so you can add comments. Press the "Run" button on the GraphiQL client below, to execute mutation field loginUser with a pre-created testing user:

[🔗 Open GraphiQL client in new window]

Now, let's add some comments. Press the Run button below, to add a comment to some post by executing mutation field addCommentToCustomPost (you can also edit the comment text):

[🔗 Open GraphiQL client in new window]

In this first release, the plugin ships with the following mutations:

✅ createPost

✅ updatePost

✅ setFeaturedImageforCustomPost

✅ removeFeaturedImageforCustomPost

✅ addCommentToCustomPost

✅ replyComment

✅ loginUser

✅ logoutUser

Nested mutations is the ability to perform mutations on a type other than the root type in GraphQL.

They have been requested for the GraphQL spec but not yet approved (and may never will), hence GraphQL API adds support for them as an opt-in feature, via the Nested Mutations module.

Then, the plugin supports the 2 behaviors:

For instance, the query from above can also be executed with the following query, in which we first retrieve the post via Root.post, and only then add a comment to it via Post.addComment:

[🔗 Open GraphiQL client in new window]

Mutations can also modify data on the result from another mutation. In the query below, we first obtain the post through Root.post, then execute mutation Post.addComment on it and obtain the created comment object, and finally execute mutation Comment.reply on it:

[🔗 Open GraphiQL client in new window]

This is certainly useful! 😍 (The alternative method to produce this same behavior, in a single query, is via the @export directive... I'll compare both of them in an upcoming blog post).

In this first release, the plugin ships with the following mutations:

✅ CustomPost.update

✅ CustomPost.setFeaturedImage

✅ CustomPost.removeFeaturedImage

✅ CustomPost.addComment

✅ Comment.reply

You may have a GraphQL API that is used by your own application, and is also publicly available for your clients. You may want to enable nested mutations but only for your own application, not for your clients because this is a non-standard feature.

Good news: you can.

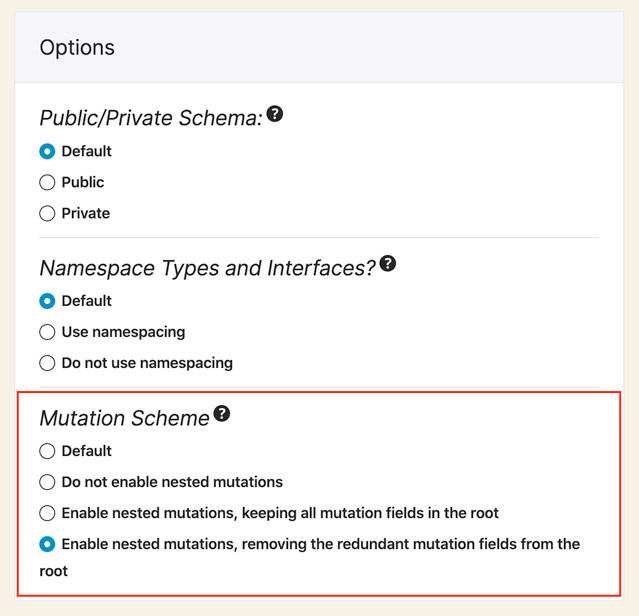

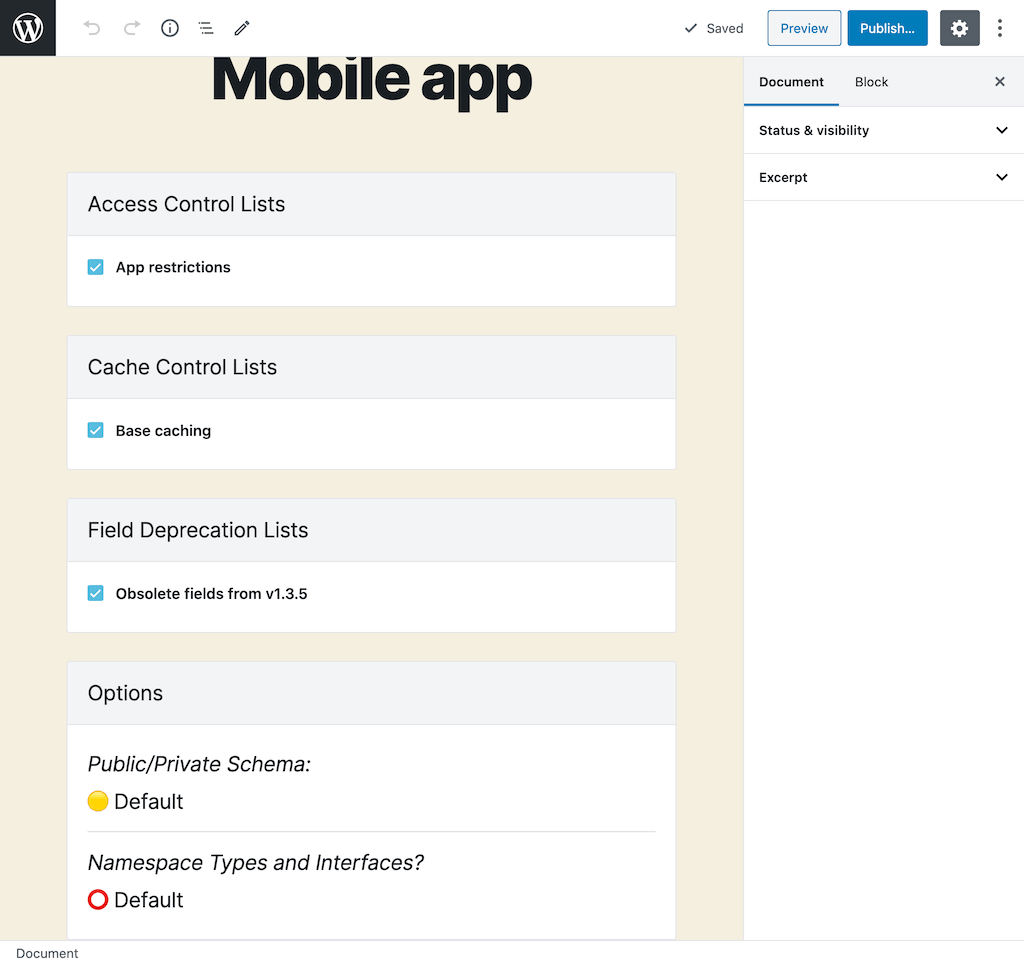

I've added a "Mutation Scheme" section in the Schema Configuration, which is used to customize the schema for Custom Endpoints and Persisted Queries:

Hence, you can disable the nested mutations everywhere, but enable them just for a specific custom endpoint that only your application will use. 💪

With nested mutations, mutation fields may be added two times to the schema:

For instance, these fields can be considered a "duplicate" of each other:

Root.updatePostPost.updateThe GraphQL API enables to keep both of them, or remove the ones from the root type, which are redundant.

Check-out the following 3 schemas:

QueryRoot to handle queries and MutationRoot to handle queriesRoot type handles queries and mutations, and redundant mutation fields in this type are keptRoot type✱ Btw1, these 3 schemas all use the same endpoint, but changing a URL param ?mutation_scheme to values standard, nested and lean_nested. That's possible because the GraphQL server follows the code-first approach. 🤟

✱ Btw2, these options can be selected on the "Mutation Scheme" section in the Schema configuration (shown above), hence you can also decide what behavior to apply for individual custom endpoints and persisted queries. 👏

Check out the GraphQL API for WordPress, and download it from here.

Now it's time to start preparing for v0.8!

🙏

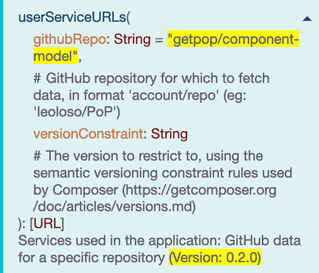

]]>Coding in PHP 7.4 and deploying to 7.1 via Rector and GitHub Actions

It explains all the hows and whys:

Enjoy!

]]>👉 What is the most effective way to reuse code, within a (single or multi-block) WordPress plugin?

The answer is here:

Reusing Functionality for WordPress Plugins with Blocks

Enjoy!

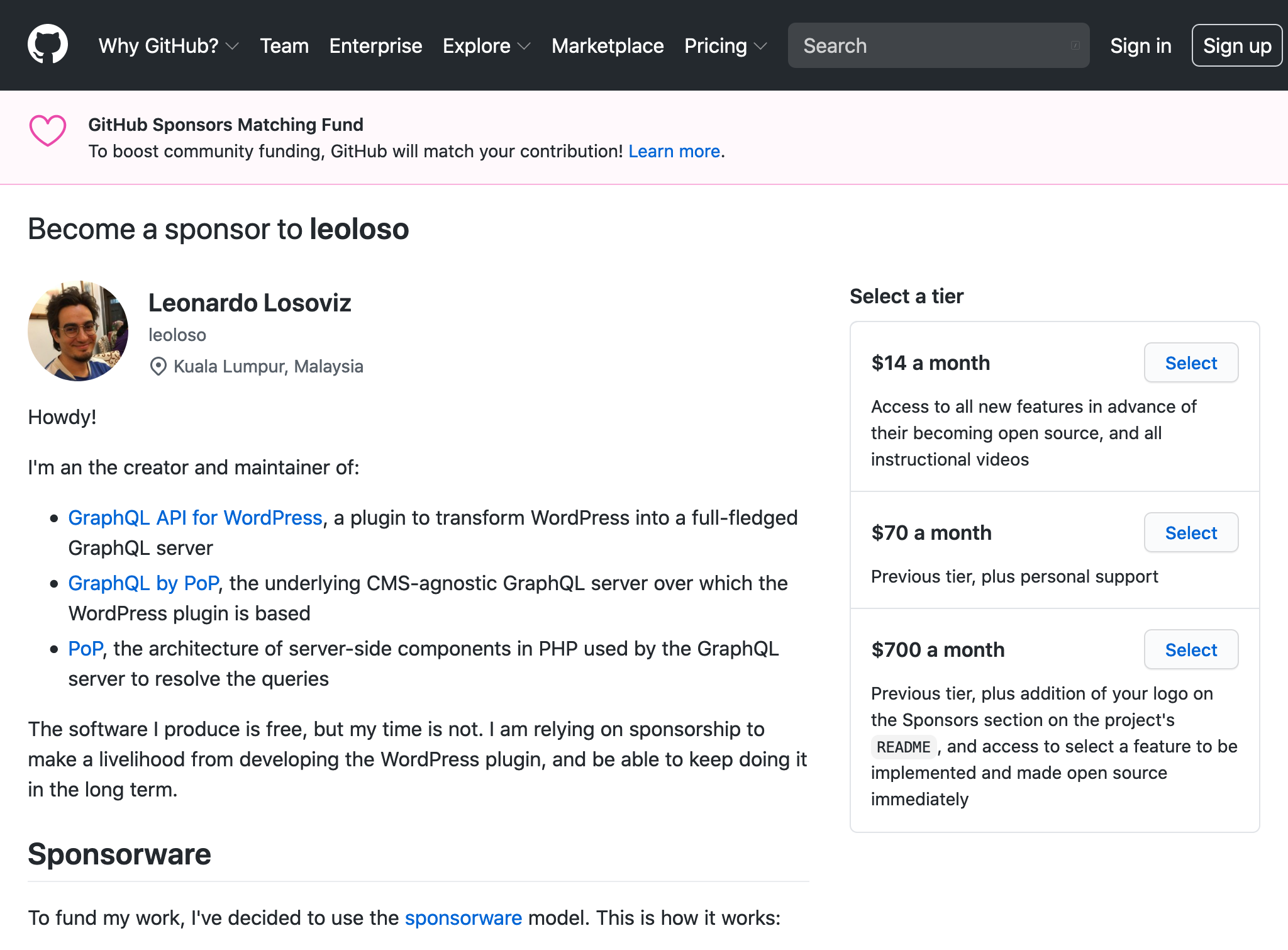

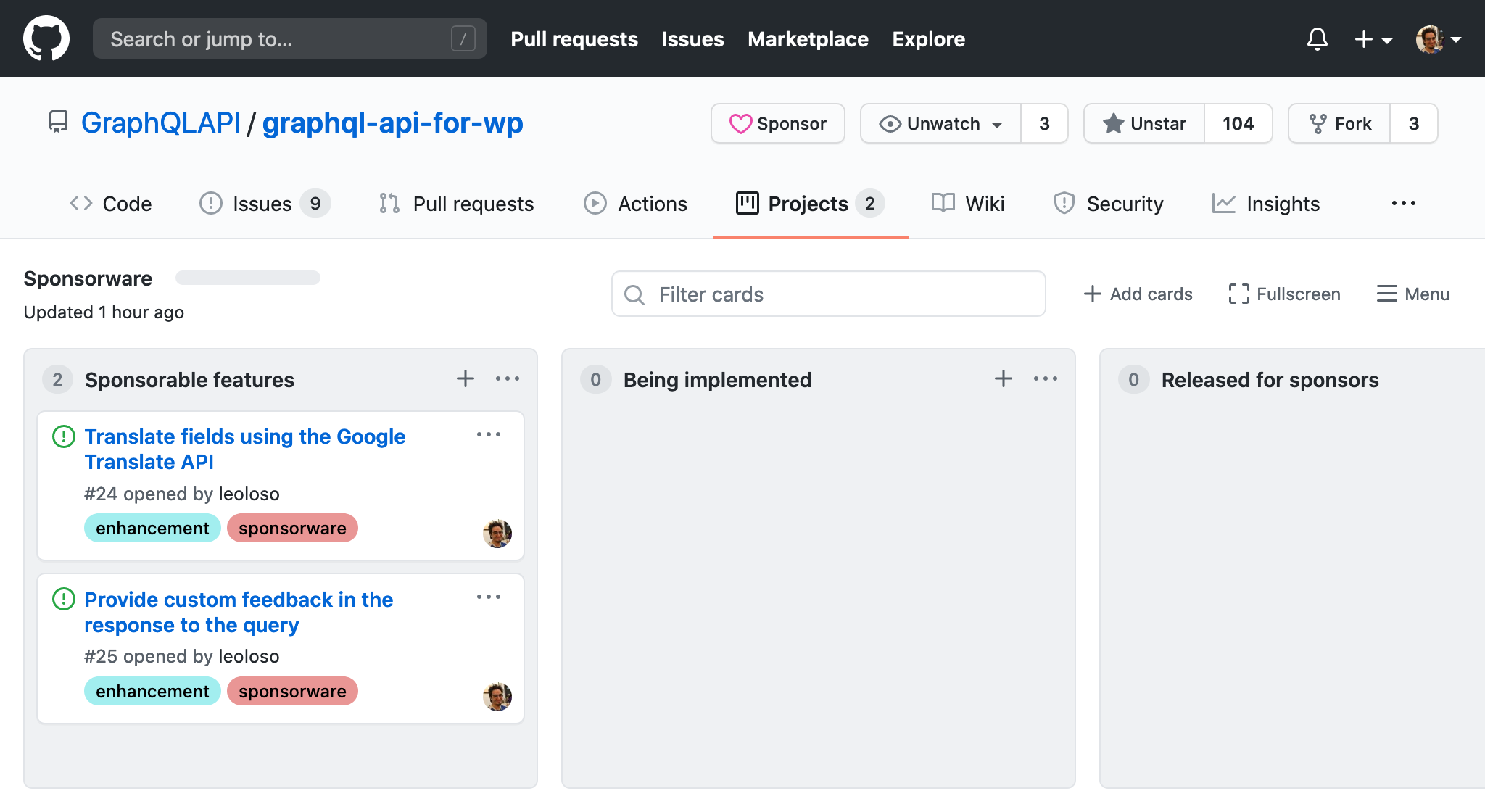

]]>I've learnt about Caleb Porzio's sponsorware model as a way to fund an open source project. The idea is to release a new feature only to the funders and, once you got X new funders, only then the new feature becomes open source, available to everyone.

But I haven't seen much success with this strategy yet to fund my open source plugin. The reason is clear: Sponsorware initially worked for Caleb because he asked the 10.000 subscribers on his newsletter for support, from which 75 agreed to be part of it. But I do not have 10.000 subscribers or followers or users, and building such a list takes time.

Caleb's second strategy seems much more promising: He also started selling access to tutorials on using the software. He says this strategy has been incredibly successful: as I'm writing this, he's surpassed 1100 sponsors!

The quest for learning appears to be a strong motivator to fund a project.

I've been delaying implementing this strategy, though, because it takes effort to:

The day has only 24 hs, and I'm working alone on my project, so there's only so much I can do. I've so far decided to prioritize improving the plugin first, adding all the minimal basic features that I would love to have as a user, and only then start producing tutorial videos.

I've actually been lucky: just a few days ago, site spatie.be (based on Laravel) was open sourced, making available the code implementing several of the required features:

So building the site is now within my reach. I just need to work on creating the videos.

Hopefully I'll soon be able to comment if selling tutorial videos can succeed in funding the open source plugin.

]]>Since I like owing my own content, I reproduce it here in my own blog.

I think Matt’s brutal honesty is welcome, because most information out there about the Jamstack praises it. However, it also comes from developers using these modern new tools, evaluating their own convenience and satisfaction. As Matt points out, that doesn’t mean it makes it easier for the end user to use the software, which is what WordPress is good at.

I actually like the Jamstack, but because of how complex it is, it’s rather limiting, even to support some otherwise basic functionality.

The definitive example is comments, which should be at the core websites building communities. WordPress is extremely good at supporting comments in the site. The Jamstack is sooooo bad at it. In all these many years, nobody has been able to solve comments for the Jamstack, which for me evidences that it is inherently unsuitable to support this feature.

All attempts so far have been workarounds, not solutions. Eg:

Also, all these solutions are overtly complicated. Do I need to set-up a webhook to trigger a new build just to add a comment? And then, maybe cache the new comment in the client’s LocalStorage for if the user refreshes the page immediately, before the new build is finished? Seriously?

And then, they don’t provide the killer feature: to send notifications of the new comment to all parties involved in the discussion. That’s how communities get built, and websites become successful. Speed is a factor. But more important than speed, it is dynamic functionality to support communities. The website may look fancy, but it may well become a ghost town.

(Btw, as an exercise, you can research which websites started as WordPress and then migrated to the Jamstack, and check how many comments they had then vs now… the numbers will, most likely, be waaaaaaay down)

Another way is to not pre-render the comments, but render them dynamically after fetching it with an API. Yes, this solution works, but then you still have WordPress (or some other CMS) in the back-end to store the comments :P

The final option is to use 3rd parties such as Disqus to handle this functionality for you. Then, I will be sharing my users’ data with the 3rd party, and they may use it who knows how, and for the benefit of who (most likely, not my users’). Since I care about privacy, that’s a big no for me.

As a result, my own blog, which is a Jamstack site, doesn’t support comments! What do I do if I want feedback on a blog post? I add a link to a corresponding tweet, asking to add a comment there. I myself feel ashamed at this compromise, but given my site’s stack, I don’t see how I can solve it.

I still like my blog as a Jamstack, though, because it’s fast, it’s free, and I create all the blog posts in Markdown using VSCode. But I can’t create a community! So, as Matt says, there are things the Jamstack can handle. But certainly not everything. And possibly, not the one(s) that enable your your website to become successful.

]]>

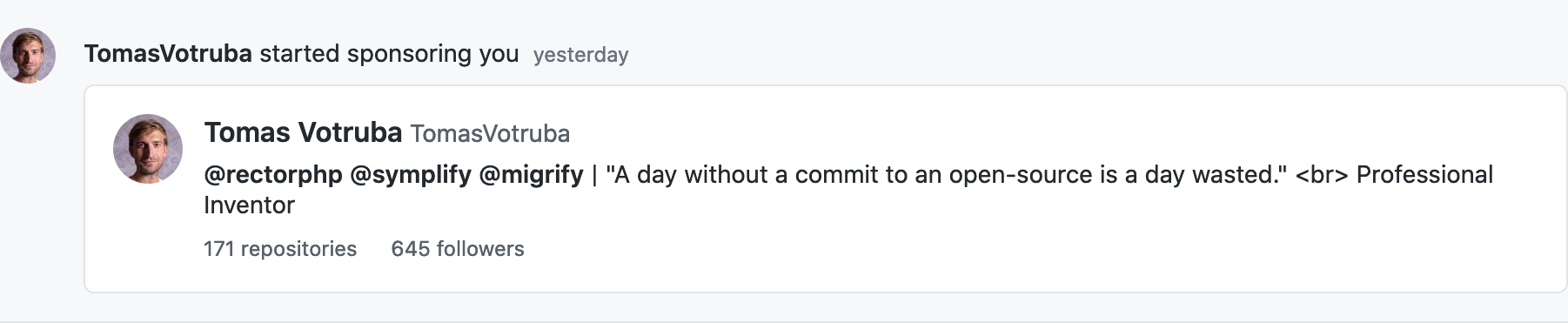

This is the second sponsor that I get, and the first one at the u$d 1400/m tier (the other was is at u$d 70/m).

This is a huge step forward, since it gives me the economic certainty as to keep developing the plugin (at least for the short/medium term... I still need a few more sponsors for it to become a living wage and long-term economic reliability).

I'll describe how it happened.

Several weeks ago, there was a proposal to introduce a fixed schedule to WordPress to bump the minimum required PHP version. Among the comments, one of them struck me:

So effectively this means that we cannot use PHP 8 syntax in themes/plugins if we want to support all WordPress versions until December 2023, 3 years after it has been released. This is very disappointing.

I work with WordPress. My plugin is for WordPress. Not being able to use the latest PHP features in 3 more years feels very disempowering.

So I decided to look for some solution, and I discovered Rector, a tool to reconstruct PHP code based on rules. It is like Babel, but for PHP. I asked if I could use Rector to transpile code from PHP 7.4 to 7.1, and they said yes, it could be done, but the rules to do it had not been created yet.

So I created them.

I contributed to this open source project full time for some 2 weeks, and produced some 15 rules to downgrade code, which I have applied to my plugin: Now I can code it using features from PHP 7.4 (and even from PHP 8.0), and release it to run on PHP 7.1, so it can still target most of the WordPress user base (only users running PHP 5.6 and 7.0 are out). That's a huge win!

After implementing those 15 rules, I documented the remaining rules to downgrade PHP code, and called it a day. I didn't mind working on them, but I didn't have the time to do it. Nevertheless, I also created this task as a sponsorable feature for my plugin; if anyone sponsored my time to work on it, I could then attempt to finish the task.

Well, Tomáš Votruba, creator of Rector, liked my contributions so he decided to become my sponsor.

Yay! 🍾 🎉 🎊 🥳 🍻 🥂

In exchange, I'll work on the downgrade rules, and even attempt to have Rector itself run on PHP 7.1.

Now, let's be clear: Tomáš is sponsoring me to work on Rector, not on GraphQL API for WordPress. That's why the sponsoring tier is u$d 1400/m, since it involves me working on the sponsor's repo.

While I get to increase the price for the tier, it makes no difference to me if the code is in my repo or my sponsor's: when I first worked those 2 weeks, it was for the benefit of my own plugin anyway, even if the code does not belong to my project.

Ultimately, where the code lives is not really important. What is important is that my project (and, for that matter, any project based on PHP) will be able to benefit from it.

In addition, anyone from the PHP community who starts using Rector because of my work on it, may learn that it was the GraphQL API for WP that made it possible, so I gain face and recognition.

So I think this is a win-win-win for all parties involved:

Some personal takeaways from this experience:

My next step is to share my work on Rector with the broader WordPress and PHP communities: I've just published an intro to downgrading from PHP 8.0 to 7.x, and in a few weeks I'll publish a step-by-step guide on transpiling code for a WordPress plugin, using my repo as the example.

Hopefully, along the way I'll be able to get new sponsors, and eventually achieve long-term economic certainty with my plugin 😀

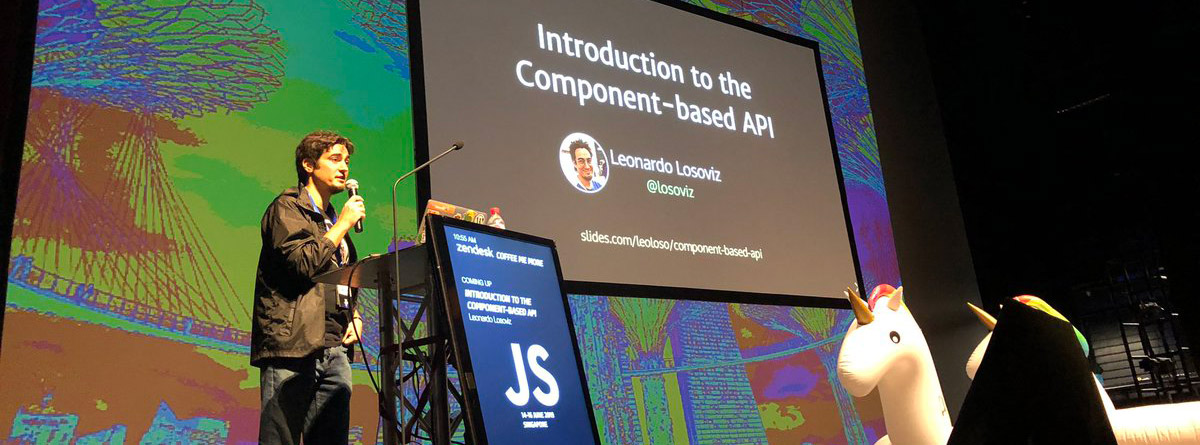

]]>My presentation starts at 25:37. It is only around 20 min long (15 min presenting + 5 min of Q&A).

Please check it out. It's a succinct summary of the benefits of using GraphQL in WordPress, through my plugin GraphQL API for WordPress.

These are the slides:

I hope you enjoy it!

]]>My proposed feature then appears to be not about GraphQL as we know it nowadays, but about an über GraphQL, or what GraphQL could possibly be. That's either a problem, or an opportunity. In this write-up, Alan Johnson says:

[...] the execution model of GraphQL is in many ways just like a scripting language interpreter. The limitations of its model are strategic, to keep the technology focused on client-server interaction. What's interesting is that you as a developer provide nearly all of the definition of what operations exist, what they mean, and how they compose. For this reason, I consider GraphQL to be a meta-scripting language, or, in other words, a toolkit for building scripting languages.

I agree with this observation, but then I wonder: Where do these limitations start? What should be allowed, and what not? If any feature made GraphQL's scripting capabilities a bit more visible, gave a bit more control to the developer, and made the query a bit more powerful, should that be straightforward rejected? Or could it be given a chance?

Let's talk business now. Here is something that GraphQL is not good at.

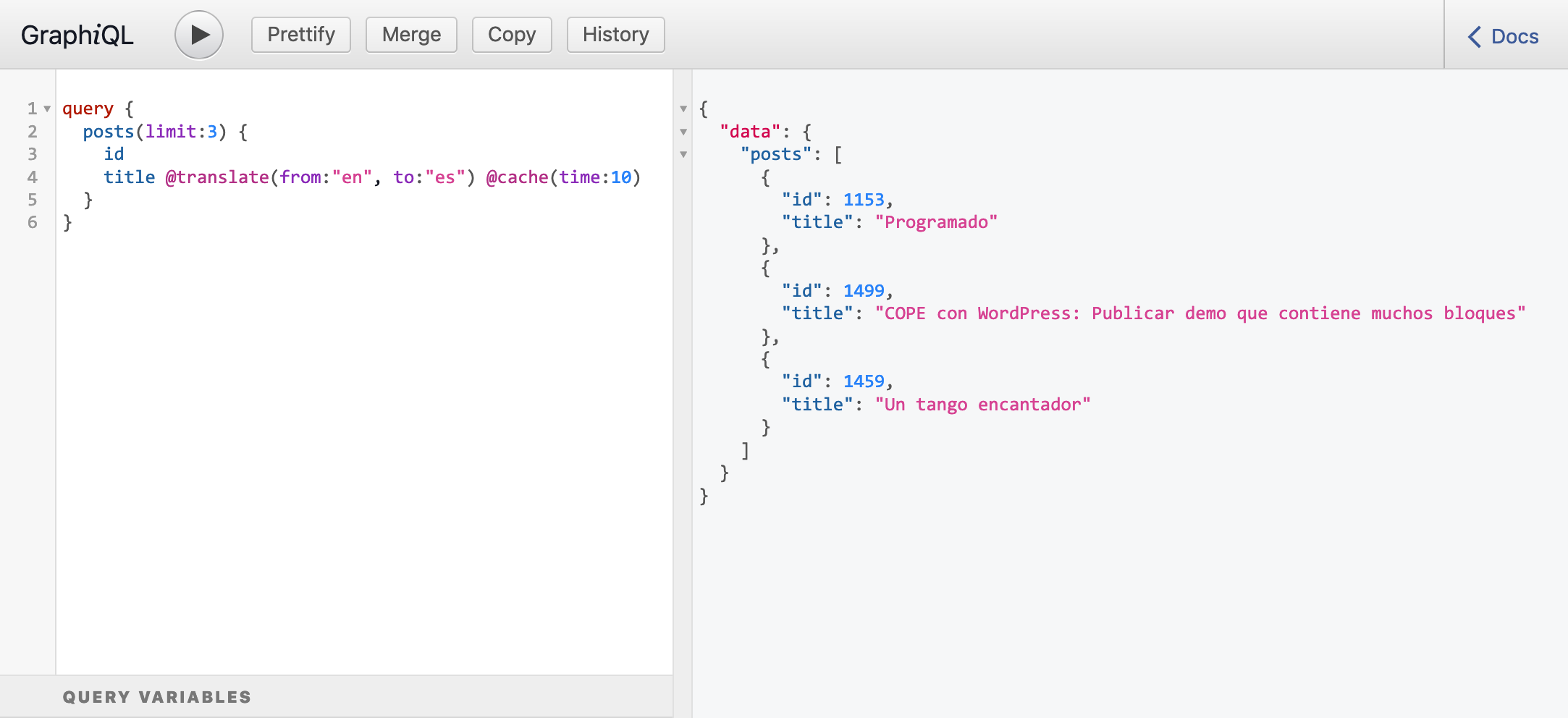

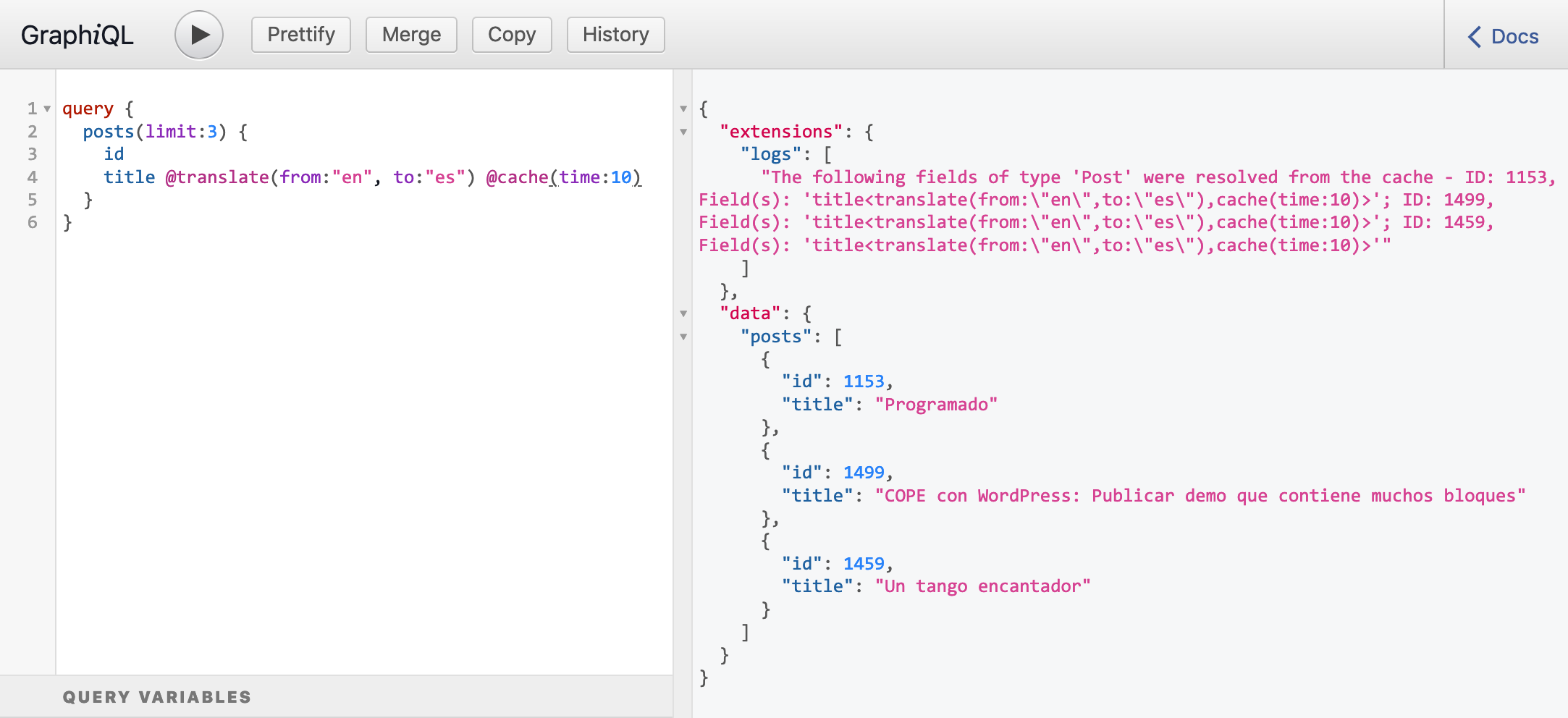

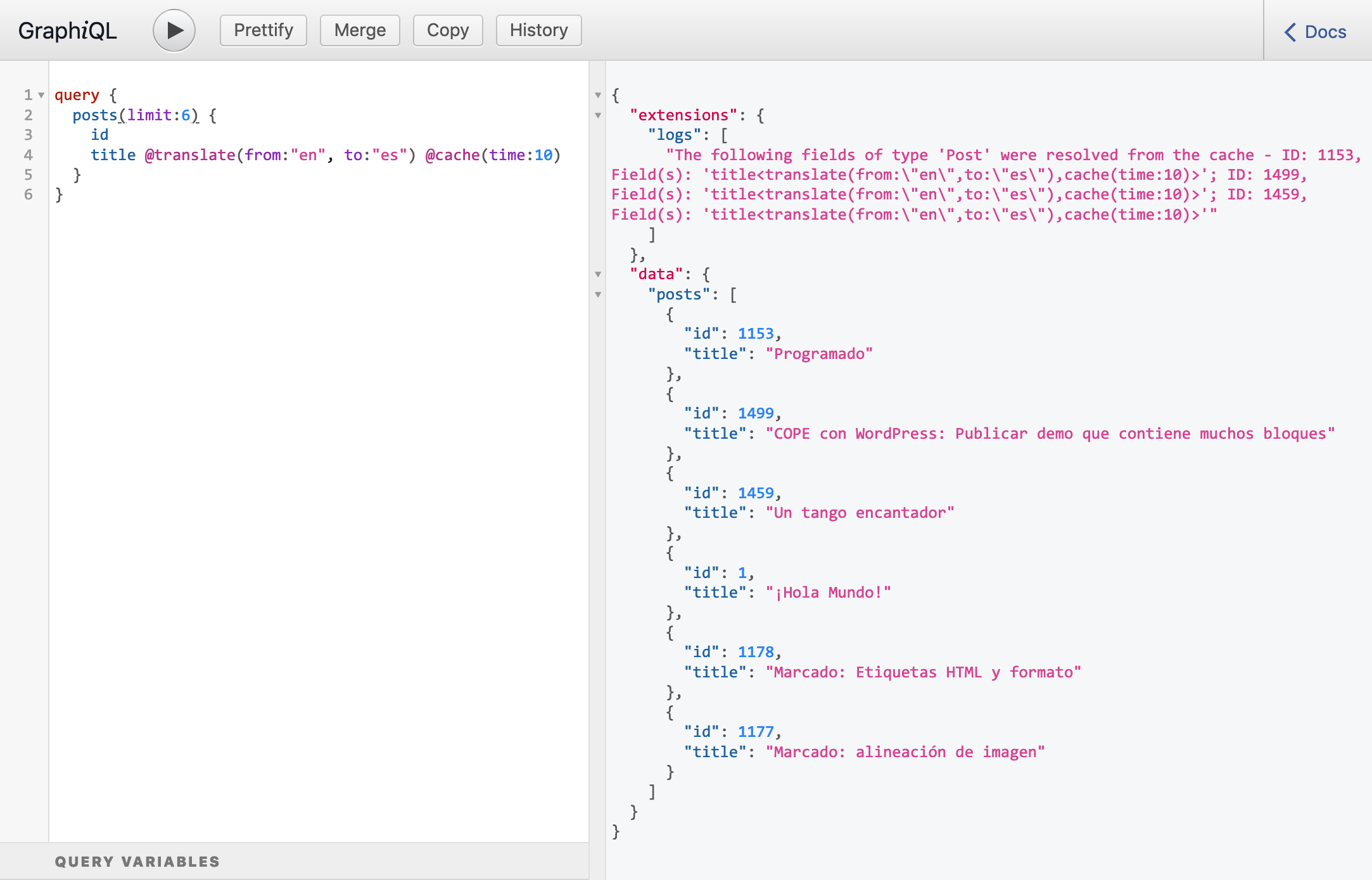

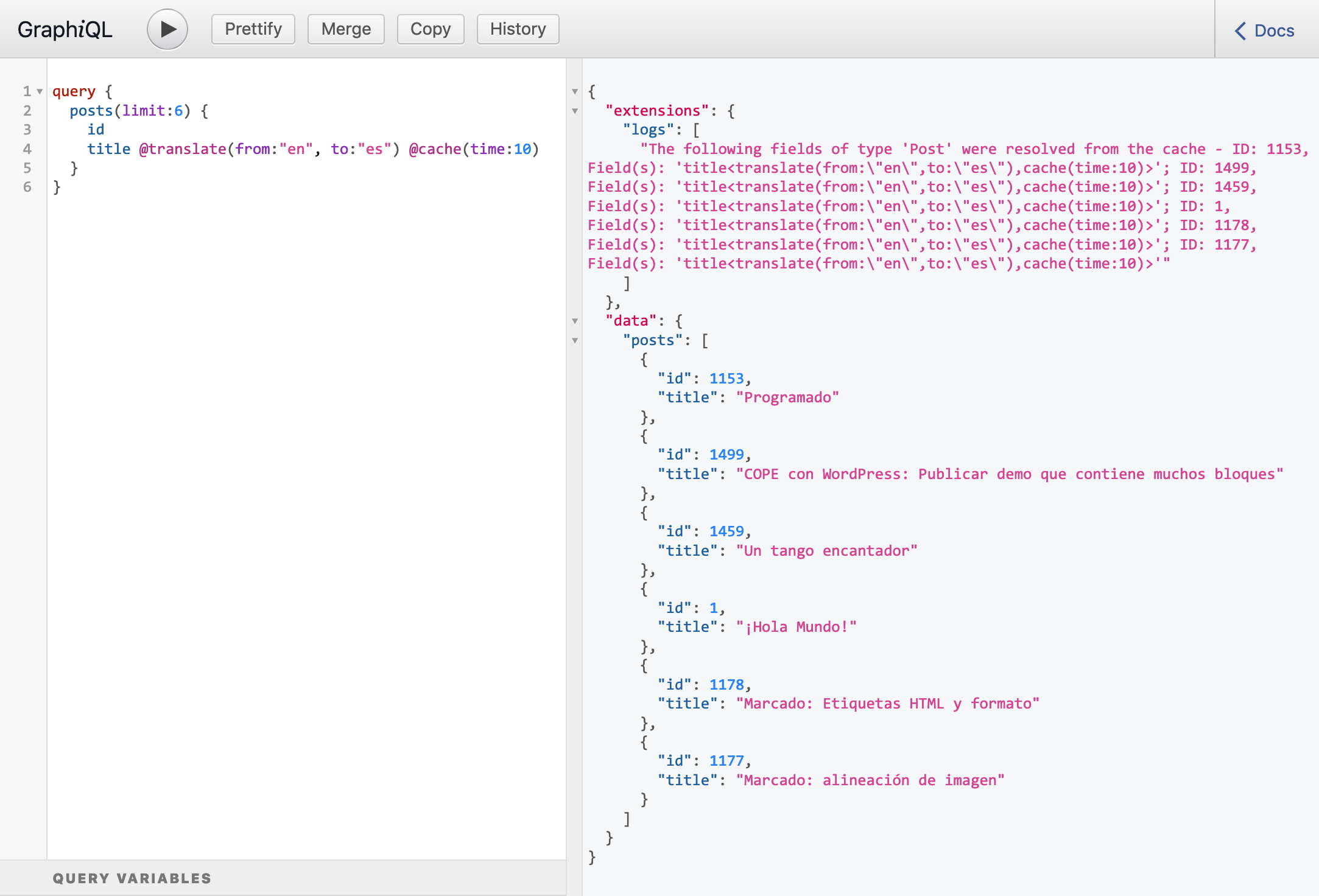

Say that you have a @translate directive that is applied on a String, as in this query:

{

posts {

id

title @translate(from: "en", to: "es")

}

}You cannot apply @translate on a field different than a String. If you need to, you must then create a new directive, which involves extra effort (often being ad-hoc) and pollutes the schema:

If a field returns [String], you'd need to create another directive @translateArrays

If only some entries from the array must be translated, you need to add an optional argument $keys: [String] to specify which keys to translate

If the keys are not strings, but are numeric, you need another argument $numericKeys: [Int] as to avoid type conflicts

If instead of an array, you get an array of arrays, you need yet another directive

And so on, concerning any random requirement from your clients.

As a result, the schema might eventually become unwieldy.

So, how could this situation be improved for GraphQL?

If GraphQL had capabilities to compose or manipulate fields, then a few elements could already satisfy all possible combinations.

GraphQL by PoP (the engine powering the recently launched GraphQL API for WordPress) is a GraphQL server because it respects the GraphQL spec, but is also a non-standard API server that provides other capabilities, including composable fields and composable directives.

Let's see how this server can satisfy all combinations described above, with just a few elements:

Notes:

- GraphQL by PoP relies on the URL-based PQL syntax, so you can click on the links to execute the query and see its response

- Field

Root.echois used to build the arraysforEachandadvancePointerInArrayare directives that composes another directive

Translating posts as strings (run query):

posts.title<

translate(from:en, to:es)

>Translating a list of strings (run query):

echo([

hello,

world,

how are you today?

])<

forEach<

translate(from:en,to:es)

>

>Translating only one element from the list of strings, with numeric keys (run query):

echo([

hello,

world,

how are you today?

])<

advancePointerInArray(path: 0)<

translate(from:en,to:es)

>

>Translating only one element from the list of strings, with keys as strings (run query):

echo([

first:hello,

second:world,

third:how are you today?

])<

advancePointerInArray(path:second)<

translate(from:en,to:es)

>

>Translating an array of arrays (run query):

echo([[

one,

two,

three

], [

four,

five,

six

], [

seven,

eight,

nine

]])<

forEach<

forEach<

translate(from:en,to:es)

>

>

>And so on, concerning any random requirement from your clients.

In my opinion, these features make the queries more powerful, and the schema more elegant. So they could be perfectly considered to be added to GraphQL.

Bringing these additional scripting capabilities to GraphQL, wouldn't it be more valuable than not?

I understand that there is more complexity added to the server. But that's a one-time off. The GraphQL server maintainers can implement these features in a few months, and developers would be able to use them forever.

Isn't that a good tradeoff?

]]>If we want to make GraphQL good at transforming data, we need much more than string interpolation.

I don't disagree with this, but I don't have a clear answer. If we allow String interpolation, should we do the same for Ints, such as allowing additions or substractions?

I'd say no, but then why not? If we do allow it, something like this could be possible:

query {

service @include(if: {{ totalCredits }} - {{ usedCredits }} > 0) {

id

}

}I do not support this use case as shown here, I certainly don't like it. The question is why then we do allow for String interpolation? Because it enables templating, which could be considered a legitimate use case:

mutation {

comment(id: 1) {

replyToComment(data: data) {

id @sendEmail(

to: "{{ parentComment.author.email }}",

subject: "{{ author.name }} has replied to your comment",

content: "

<p>On {{ comment.date(format: \"d/m/Y\") }}, {{ author.name }} says:</p>

<blockquote>{{ comment.content }}</blockquote>

<p>Read online: {{ comment.url }}</p>

"

)

}

}

}Programming languages are good at transforming data. Why not use application logic?

Indeed, my initial proposed features for the spec, composable fields and composable directives, add meta-scripting capabilities to GraphQL.

How could that be benefitial? Say that you have a @translate directive that is applied on a String, as in this query:

query {

posts {

id

title @translate(from: "en", to: "es")

}

}Now, what happens if a field returns [String], i.e. a list of Strings? Then you can't use @translate anymore, you'd need to create another directive @translateArrays. And if there is only one entry from the array you need to translate, and not all of them? Then you need to add an optional argument $keys: [String] to specify which keys to translate. And if the keys are not strings, but are numeric? Or if instead of an array, you get an array of arrays? And so on, and on, and on.

Working with only fields to fetch data, the schema might eventually become unwieldy.

Now, if we have capabilities to compose or manipulate fields, then there is no need to pollute the schema with ad-hoc fields to satisfy each custom combination.

For GraphQL by PoP (a GraphQL server that I've designed from scratch), I have accomplished this through a syntax called PQL, which is a superset from the GraphQL query, supporting composable fields and composable directives.

Let's see how all combinations can be satisfied just composing elements:

posts.title<translate(from:en,to:es)>echo([hello, world, how are you today?])<forEach<translate(from:en,to:es)>>echo([hello, world, how are you today?])<advancePointerInArray(path: 0)<translate(from:en,to:es)>>echo([first:hello, second:world, third:how are you today?])<advancePointerInArray(path:second)<translate(from:en,to:es)>>echo([[one, two, three], [four, five, six], [seven, eight, nine]])<forEach<forEach<translate(from:en,to:es)>>>Embeddable fields is a watered-down version of composable fields, good enough for templating, but not for more advanced use cases.

In your article you argue, that it is better to do this on GraphQL, but I don't understand why it would be.

I think there is value in GraphQL having additional capabilities. If a GraphQL query can execute a complex operation all by itself, the query may become more difficult, but the overall application would become much simpler.

For instance, instead of a typical workflow of using GraphQL to retrieve data, process the data in the client with JavaScript, and then execute some operation in the server with this data, a single GraphQL query with meta-scripting capabilities can completely do away with the client. This is not just fewer lines of code, it's also fewer systems involved.

As an example that I've implemented for demonstration purposes, a single query can send a localized newsletter.

This is not far-fetched. I think GraphQL can be considered good for more than just fetching and posting data, because in this modern world of APIs interacting with cloud-based services, it's difficult to determine what is fetching data, and what is executing functionality.

For instance, are these cases within the confines of just fetching/posting data?

These are all operations that can be perfectly integrated within the GraphQL service, and that are typically found on a CI/CD pipeline. Imagine if the pipeline stages were GraphQL queries. GraphQL would then become the interface not just for fetching/posting data, but also for interacting with services.

I'm pretty confident that providing a robust support to GraphQL to interact with these cloud-based services can only make our API more powerful, capable of supporting more use cases, and better prepared for new requirements in the future.

Another principle for GraphQL was coined by Lee Byron: A GraphQL server should only expose queries, that it can fulfill efficiently.

These are not contradictory propositions. If well architected, the GraphQL server will not necessarily degrade its performance. GraphQL by PoP, for instance, resolves the query with linear complexity time on the number of types, so it supports composable fields to any number of levels without a scratch.

The more features we add to GraphQL, the harder it becomes to ensure, that the queries are efficiently executable.

Same as above.

Furthermore, your functionality requires consecutive resolver executions for one single field. This fundamentally changes how queries are executed (in a way that IMO is incompatible with the spec).

That's up to interpretation. I have not seen it described in the spec, and I believe it should not be there, since the spec is about defining standards on how the API must behave, and not about the nitty-gritty of the server's implementation.

GraphQL is designed to be simple on purpose.

I agree that these changes add complexity to the GraphQL servers, and extra capabilities to the GraphQL queries that make it more difficult to learn.

But at the same time, they make the GraphQL service more powerful and versatile, and enable the architecture of the overall application to become simpler.

For me the question is, is it worth it?

]]>This post is part of the groundwork to find out if there is support for this feature within the GraphQL community. If there is, only then I'll submit it as a new issue to the GraphQL spec repo for a thorough discussion, and offer to become its champion.

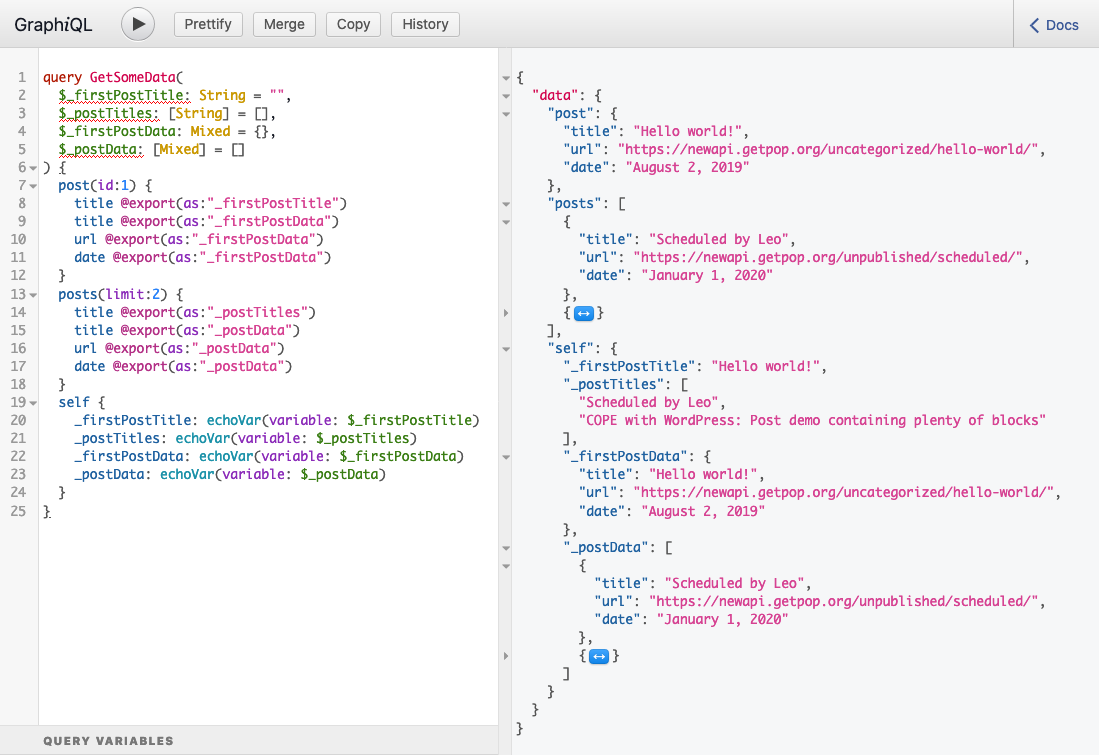

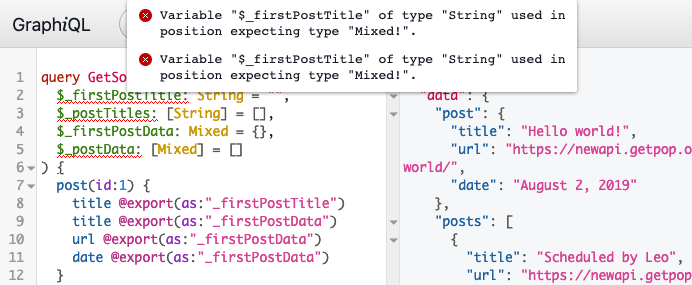

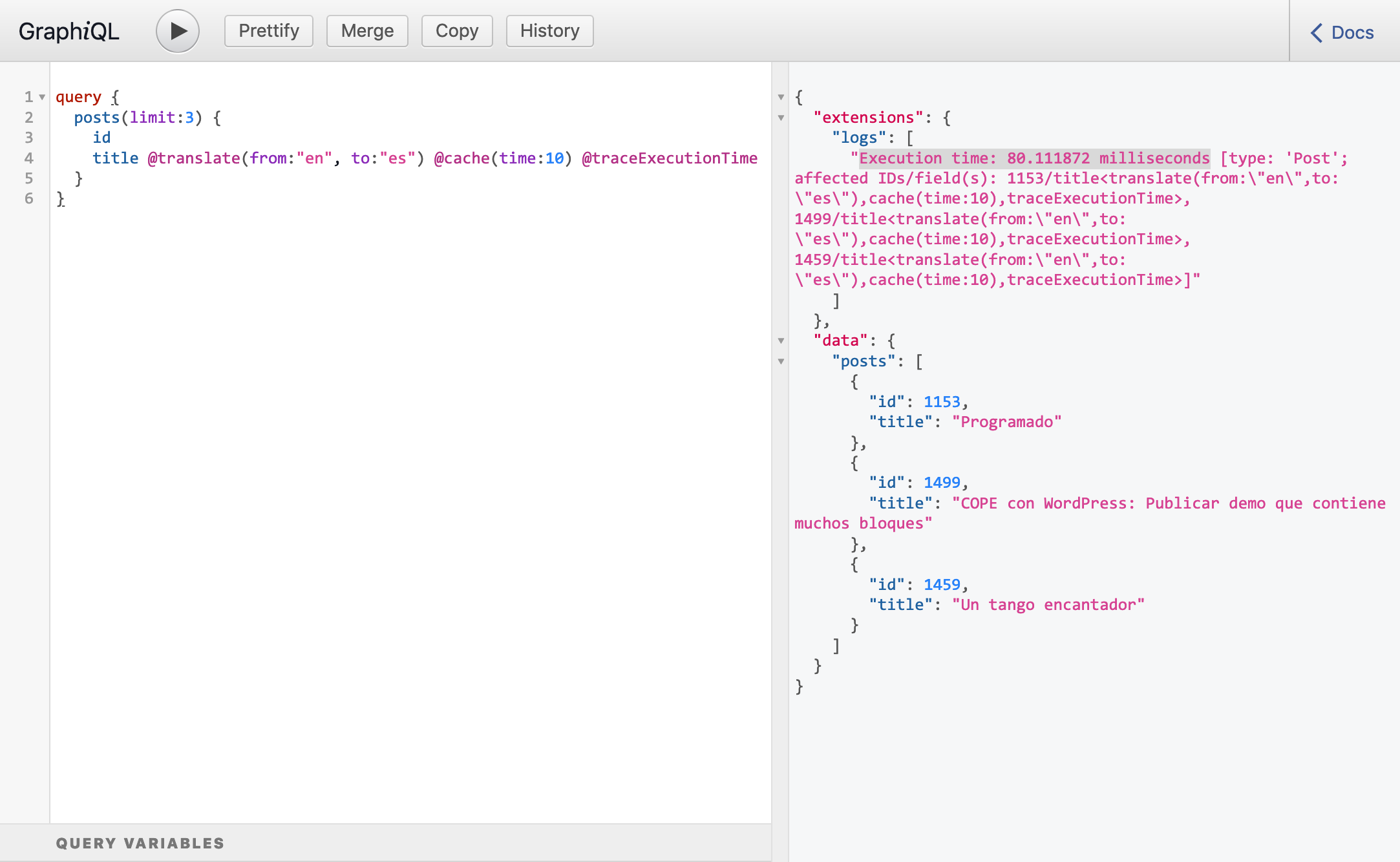

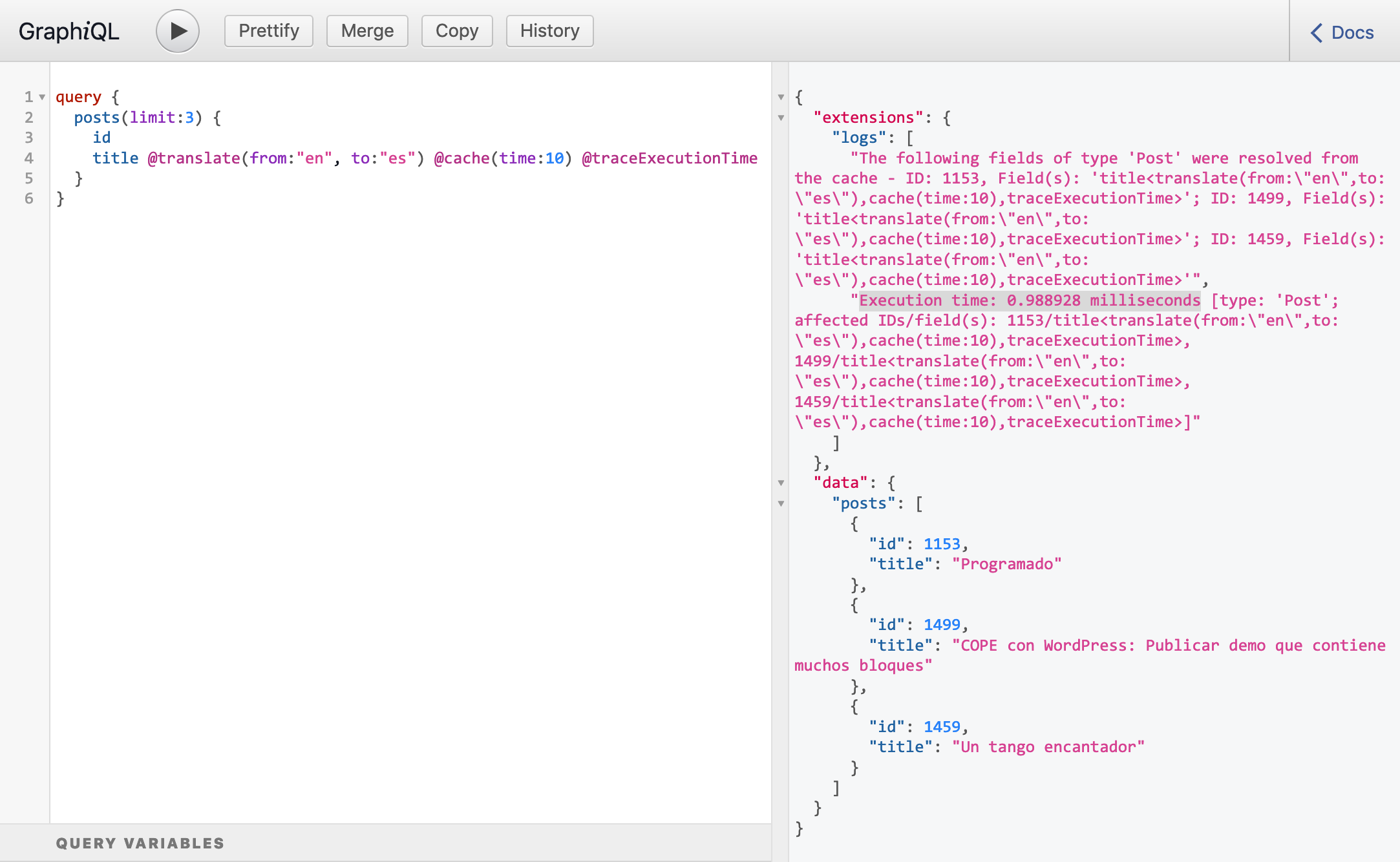

Note: This feature is already supported by GraphQL by PoP. Click on the "Run" button on the GraphiQL clients throughout this post, to execute the query and see the expected response.

Embeddable fields is a syntax construct, that enables to resolve a field within an argument for another field from the same type, using the mustache syntax {{field}}.

Note: To make it convenient to use, field

echoStr(value: String): Stringcan be added to the schema, as in the examples shown throughout this post.

This query contains embedded fields {{title}} and {{date}}:

The syntax can contain whitespaces around the field: {{ field }}.

This query contains embedded fields {{ title }} and {{ date }}:

The embedded field may or may not contain arguments:

{{ fieldName }}{{ fieldName(fieldArgs) }}This query formats the date: date(format: \"d/m/Y\"):

Note: The string quotes must be escaped:

\"

Embedded fields also work within directive arguments.

This query resolves field title only if the same post has comments:

Note: Using embeddable fields together with directives

@skipand@includeis an interesting use case. However, conditionifexpects aBoolean, not aString; even though the query can be resolved properly in the server, there is type mismatch in the client.This proposal may suggest to accept embedded fields also on their own, and not only within a string, so they can be casted to their own type:

@skip(if: {{ hasComments }}). More on this below.

This query resolves field title in two different ways, depending on the post having comments or not:

Why would we want a GraphQL query to support embeddable fields? The following are benefits I've identified so far.

In most situations, we have a client to request the data from the GraphQL server and transform it into the required format.

For instance, a website on the client-side can process the data with JavaScript, as to transform fields title and date into a description:

const desc = `Post ${ response.data.title } was published on ${ response.data.date }`However, in some situations we may need to retrieve the data for a service that we do not control, and which does not offer tools to process the results.

For instance, a newsletter service (such as Mailchimp) may accept to define an endpoint from which to retrieve the data for the newsletter. Whatever data is returned by the endpoint is final; it can't be manipulated before being injected into the newsletter.

In these situtations, the query could use embeddable fields to manipulate the response into the required format. This could be particularly useful when accessing GraphQL over HTTP.

The use case above could also be satisfied by adding an extra field Post.descriptionForNewsletter to the schema. But this solution clutters the schema, and embeddable fields could be considered a more elegant solution.

Embeddable fields could be compared to arrow functions in JavaScript, which is syntactic sugar over a feature already available in the language.

Arrow functions are not really needed, but they provide benefits:

As such, the feature becomes a welcome-to-have in the language, producing a better development experience.

if condition in @skip and @include can become dynamicCurrently, argument "if" for the @skip and @include directives can only be an actual boolean value (true or false) or a variable with the boolean value. This behavior is pretty static.

Embeddable fields would enable to make this behavior more dynamic, by evaluating the condition on some property from the object itself.

There is an issue to address: if is a Boolean, not a String, so to avoid type conflicts the GraphQL syntax may also need to accept the embedded field on its own, not wrapping it between string quotes:

query {

posts {

id

title @skip(if: {{ hasComments }})

}

}Removing the need to wrap {{ }} between quotes " " would solve this issue for every scalar type other than String, not just Boolean (check the example below with droid, using embeddable fields to resolve an ID).

Embeddable fields enable to embed a template within the GraphQL query itself, which would render the GraphQL service more configuration-friendly.

For instance, combined with the flat chain syntax and nested mutations (two other features also proposed for the spec), we could produce the following query, which sends an email to the user notifying that his/her comment was replied to:

mutation {

comment(id: 1) {

replyToComment(data: data) {

id @sendEmail(

to: "{{ parentComment.author.email }}",

subject: "{{ author.name }} has replied to your comment",

content: "

<p>On {{ comment.date(format: \"d/m/Y\") }}, {{ author.name }} says:</p>

<blockquote>{{ comment.content }}</blockquote>

<p>Read online: {{ comment.url }}</p>

"

)

}

}

}Proposed feature [RFC] exporting variables between queries attempts to @export the value of a field, and inject it into another field in the same query:

query A {

hero {

id @export(as: "droidId")

}

}

query B($droidId: String!) {

droid (id: $droidId) {

name

}

}With embeddable fields and the flat chain syntax, this use case could be satisfied like this:

query {

droid (id: {{ hero.id }} ) {

name

}

}This feature breaks backwards compatibility. From the spec:

Once a query is written, it should always mean the same thing and return the same shaped result. Future changes should not change the meaning of existing schema or queries or in any other way cause an existing compliant GraphQL service to become non-compliant for prior versions of the spec.

In our case, if a query currently has this shape:

query {

foo: echoStr(value: "Hello {{ world }}!")

}...it expects the response to be:

{

"data": {

"foo": "Hello {{ world }}!"

}

}With embeddable fields the query above will produce a different response and, moreover, it may even produce an error message, as when there is no field Root.world.

In addition, considering the case of not wrapping {{ }} between string quotes " ", as in the query below:

query {

posts {

id

title @skip(if: {{ hasComments }})

}

}Currently, this query would produce a syntax error, being displayed in the GraphiQL client, and possibly not parsed by the server. This behavior would change.

Because of being backwards incompatible, it is suggested to make embeddable fields an opt-in feature, prompting users to be fully aware of the consequences before enabling it.

Embeddable fields would affect some components from the GraphQL workflow. How should these be dealt with?

The GraphiQL client shows an error message when a field does not exist, or if a field argument receives a value with a different type than declared in the schema, among other potential errors. Can this information be conveyed for embeddable fields too?

For this to happen, GraphiQL would need to parse the field argument inputs and identify all {{ fieldName(fieldArgs) }} instances, as to do the validations and show the error messages.

What happens when an embedded field does not exist? For instance, if in the query below, field {{ name }} exists but {{ surname }} does not:

{

users {

fullName: echoStr(value: "{{ name }} {{ surname }}")

}

}Should the response produce an error message, and skip processing the field? Eg:

{

"errors": [

"Field 'surname' does not exist, so 'echoStr(value: \"{{ name }} {{ surname }}\")' cannot be resolved"

]

}Or should the missing field be skipped but still resolve the field, and possibly show a warning? Eg:

{

"warnings": [

"Field 'surname' does not exist"

],

"data": {

"users": [

{

"fullName": "Juan {{ surname }}"

},

{

"fullName": "Pedro {{ surname }}"

},

{

"fullName": "Manuel {{ surname }}"

}

]

}

}Or should the failing field be removed altogether? (Notice there's still a space at the end of each resolved value):

{

"warnings": [

"Field 'surname' does not exist"

],

"data": {

"users": [

{

"fullName": "Juan "

},

{

"fullName": "Pedro "

},

{

"fullName": "Manuel "

}

]

}

}{{ field }}If we actually want to print the string "{{ field }}" in the response, without resolving it, how should it be done?

This feature is a less ambitious version of composable fields, differing in these aspects:

Embeddable fields are supported in GraphQL server GraphQL by PoP, and its implementation for WordPress GraphQL API for WordPress, in both as an opt-in feature.

If there is enough support for this feature, I will add an RFC issue to the GraphQL spec. Everyone is welcome to provide feedback in this Reddit post:

This is a mighty new version, with several new features and improvements:

✅ The GraphiQL Explorer has been added to all the GraphiQL clients, including the public ones ✅ Added support for GitHub Updater, to enable self-updating when there's a new version ✅ The plugin is coded with PHP 7.4, and can run with PHP 7.1 ✅ Introduced "embeddable fields", a custom GraphQL query syntax construct to enable templating and improve performance ✅ PHPStan has been upgraded to level 8 (the strictest level), reducing the change of bugs happening ✅ Release notes are displayed within the plugin, after being updated

👉🏽 Read the descriptions in detail in the release notes.

👉🏽 Install the plugin in your site: download gatographql.zip, and in the wp-admin go to Plugins => Add New => Upload Plugin to install it.

mutation {

comment(id: 1) {

replyToComment(data: data) {

id @sendEmail(

to: "{{ parentComment.author.email }}",

subject: "{{ author.name }} has replied to your comment",

content: "

<p>On {{ comment.date(format: \"d/m/Y\") }}, {{ author.name }} says:</p>

<blockquote>{{ comment.content }}</blockquote>

<p>Read online: {{ comment.url }}</p>

"

)

}

}

}This query demonstrates how sending notifications via Symfony Notifier will be accomplished (for email, Slack and SMS). It makes use of a few pioneering features, still being considered (to more or less extent) for the GraphQL spec:

🔥 Nested mutations

🔥 Embeddable fields (based on composable fields)

🔥 Flat chain syntax

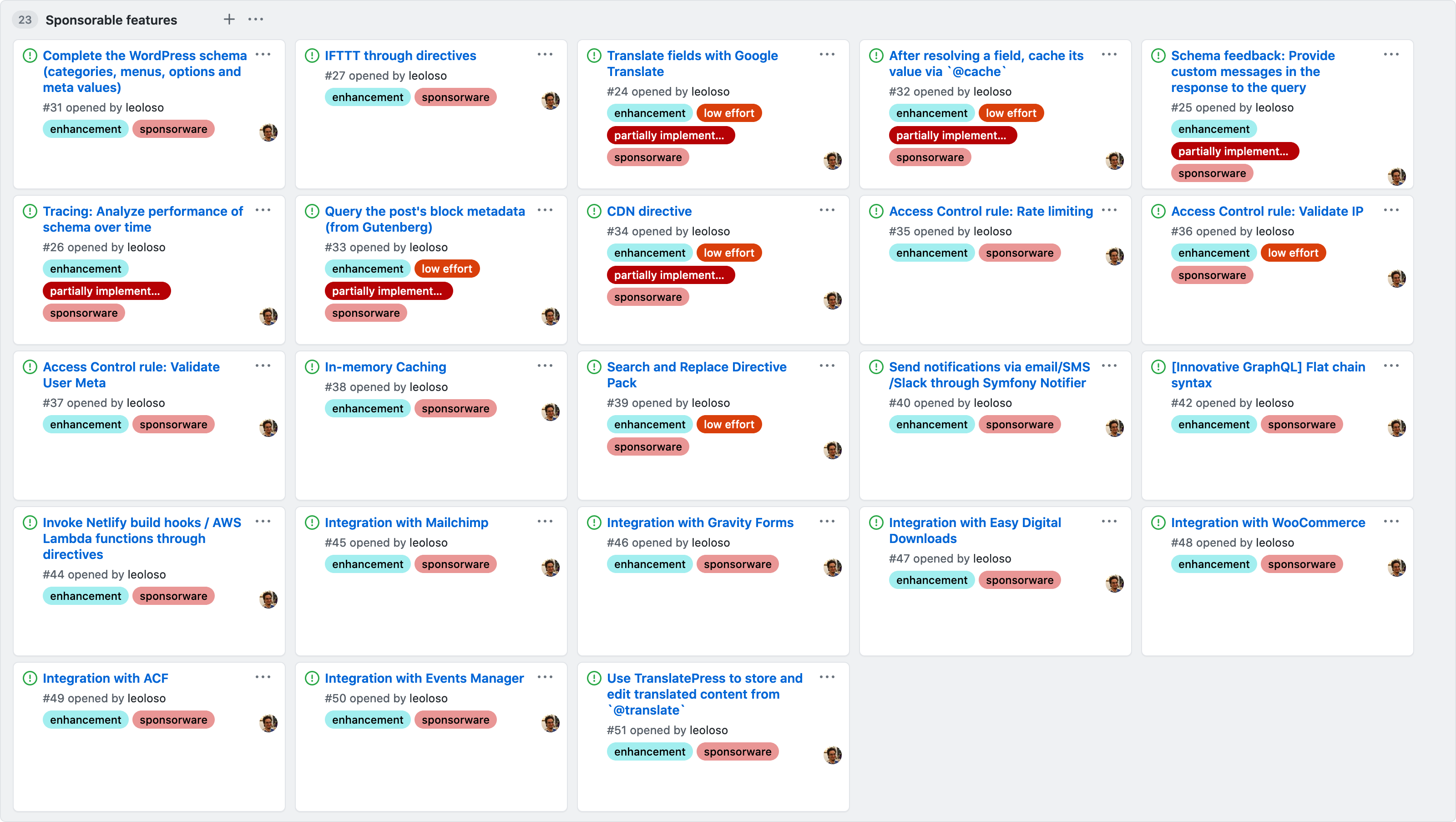

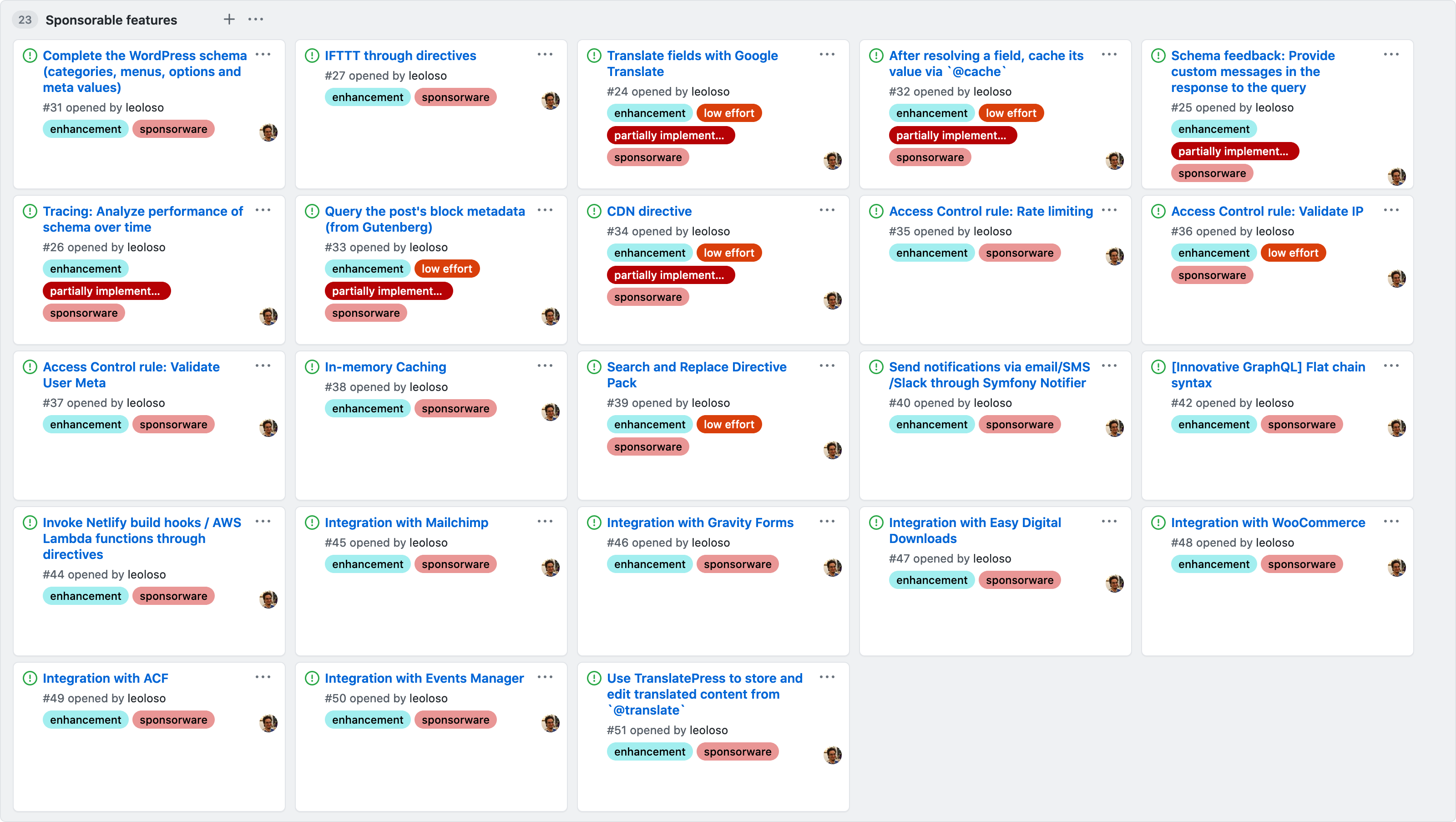

I am working to get the funding to implement them, through my recently launched GitHub sponsors. In total, currently there are 23 features looking for sponsorship:

Once implemented, the GraphQL API for WordPress may perfectly be the most forward-looking GraphQL in the market 🙀.

What do you think? Is it worth sponsoring this project? Want to become a sponsor?

Please share with your friends and colleagues! 🙏

]]>I'm building (I hope) the most forward-looking GraphQL server out there.

— Leonardo Losoviz (@losoviz) September 14, 2020

Here is how I plan to make it happen.https://t.co/2lOvqqh4lM

Following the example set by Caleb Porzio (who's making more than u$d 100k/y doing open source), I have decided to use the sponsorware model to fund my project. It works like this:

In a few months, I will also start creating instructional videos, explaining how to make the most out of the plugin. According to Caleb, this is the biggest money-making strategy.

I have also decided to add a middle tier (at u$d 70/m), where I provide Slack-based personal support, to help users of my plugin set-up GraphQL with WordPress, troubleshooting, and answering their questions. A user needed help to develop a functionality, so he decided to sponsor me <= my first sponsor ❤️

Finally, I added a higher tier (at u$d 700) for corporate sponsors. I plan to ask around in the WordPress community if their companies may be interested in participating. That would be a win-win: They get plenty of face from contributing to open source, and I get the certainty that I can make a living wage from my work and can focus on the development of the plugin (and not on marketing, which is not my forte).

I hope the sponsorware model works, and I can make a living while working on open source. I'll keep writing updates on how it goes, here on my blog, and on IndieHackers.

I have now listed down all the features I plan to implement if I can get the funding. Right now, there are 23 of them (some of them are low-effort, so they can be bundled together):

Adding directives to the schema in code-first GraphQL servers

This time, I explore several topics:

Now that the GraphQL API for WordPress has been released, I can use it to demonstrate the IFTTT feature, to add directives to the schema by configuration, not code:

As always, I hope you enjoy it!

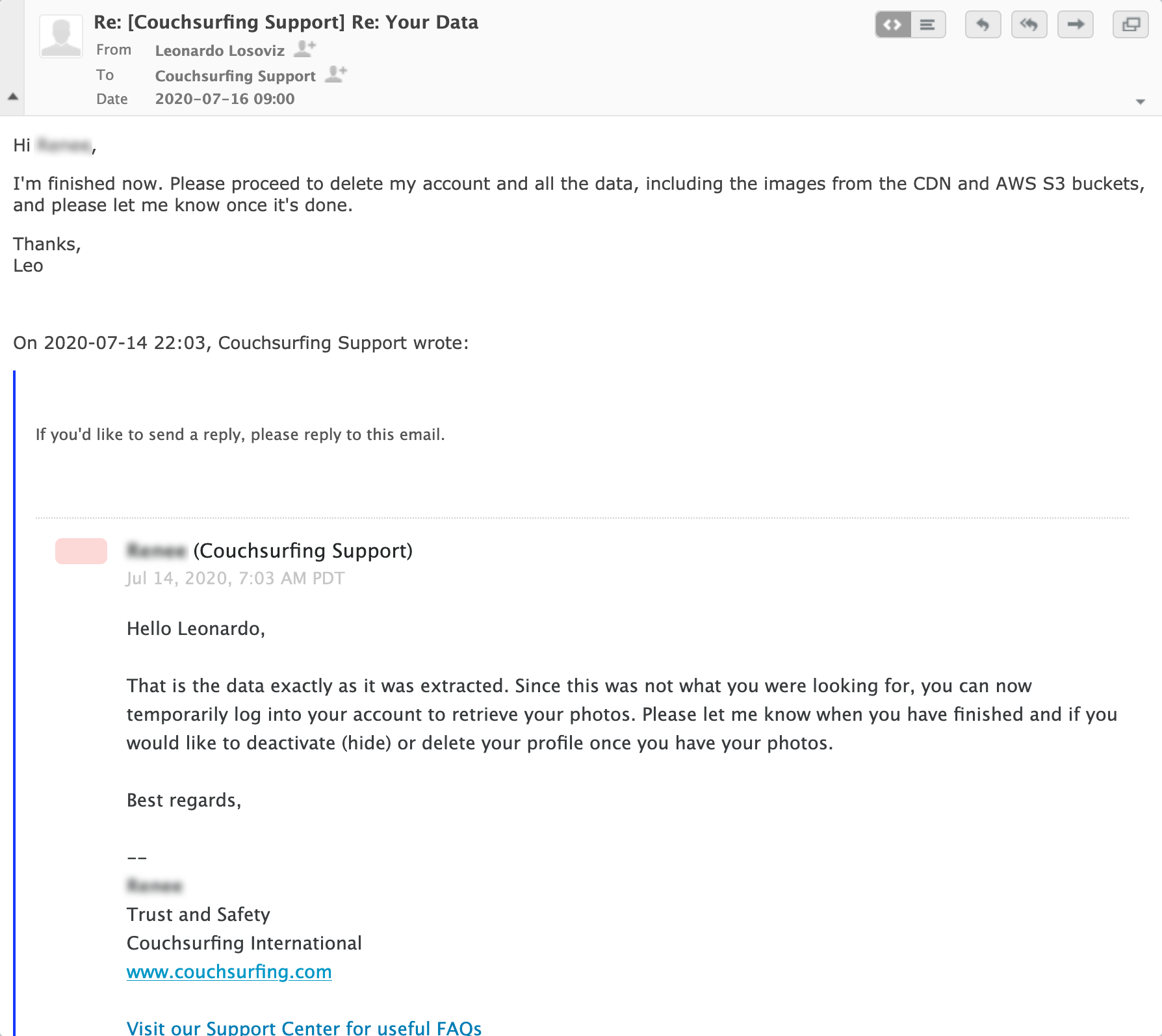

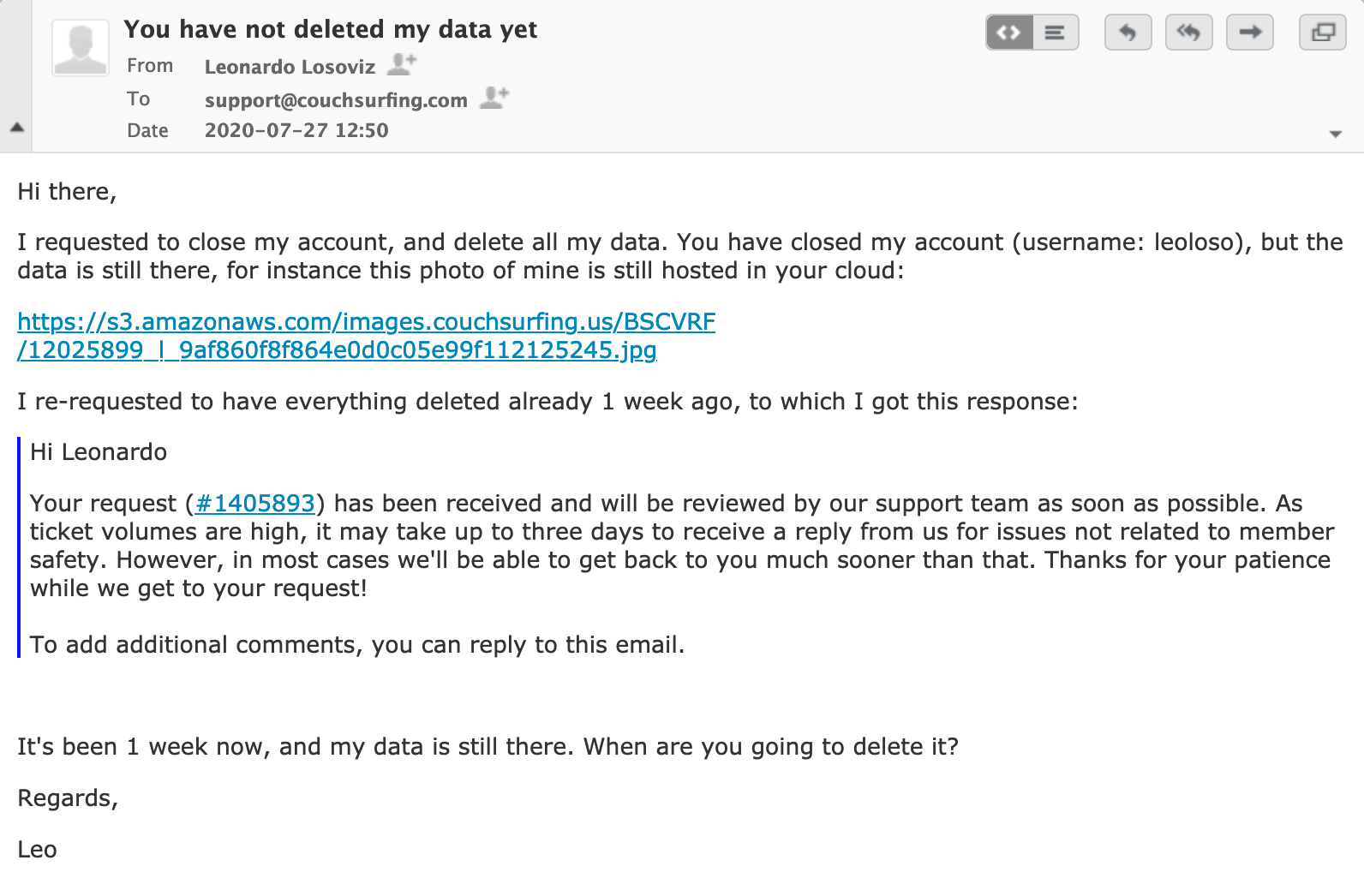

]]>But, surprise surprise, they had not deleted my photos! For instance, this photo of mine was still hosted in their cloud:

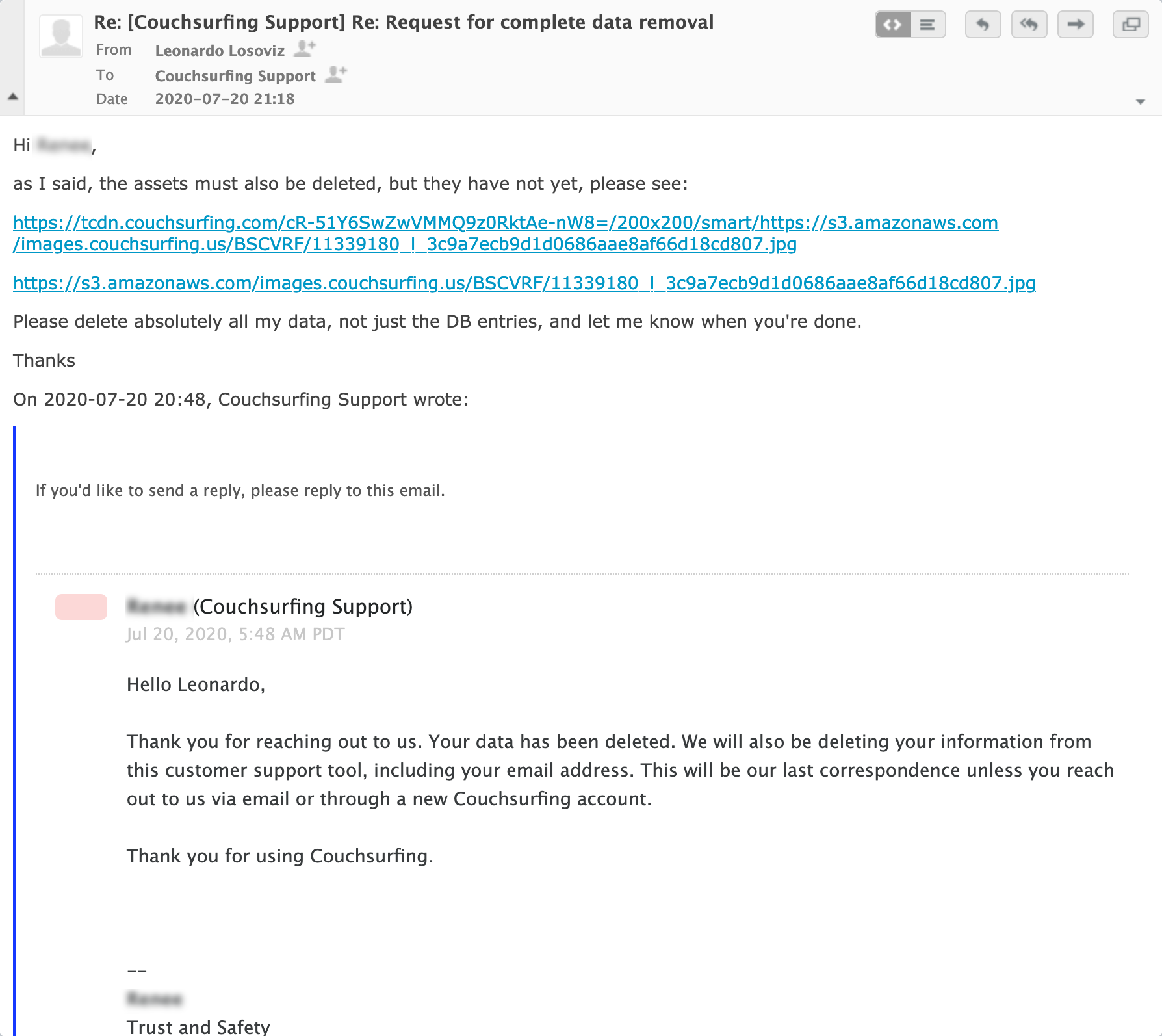

These guys should have deleted all my data, absolutely all of it, including photos. To be sure that that would happen, I explicitly mentioned this when requesting to close down my account:

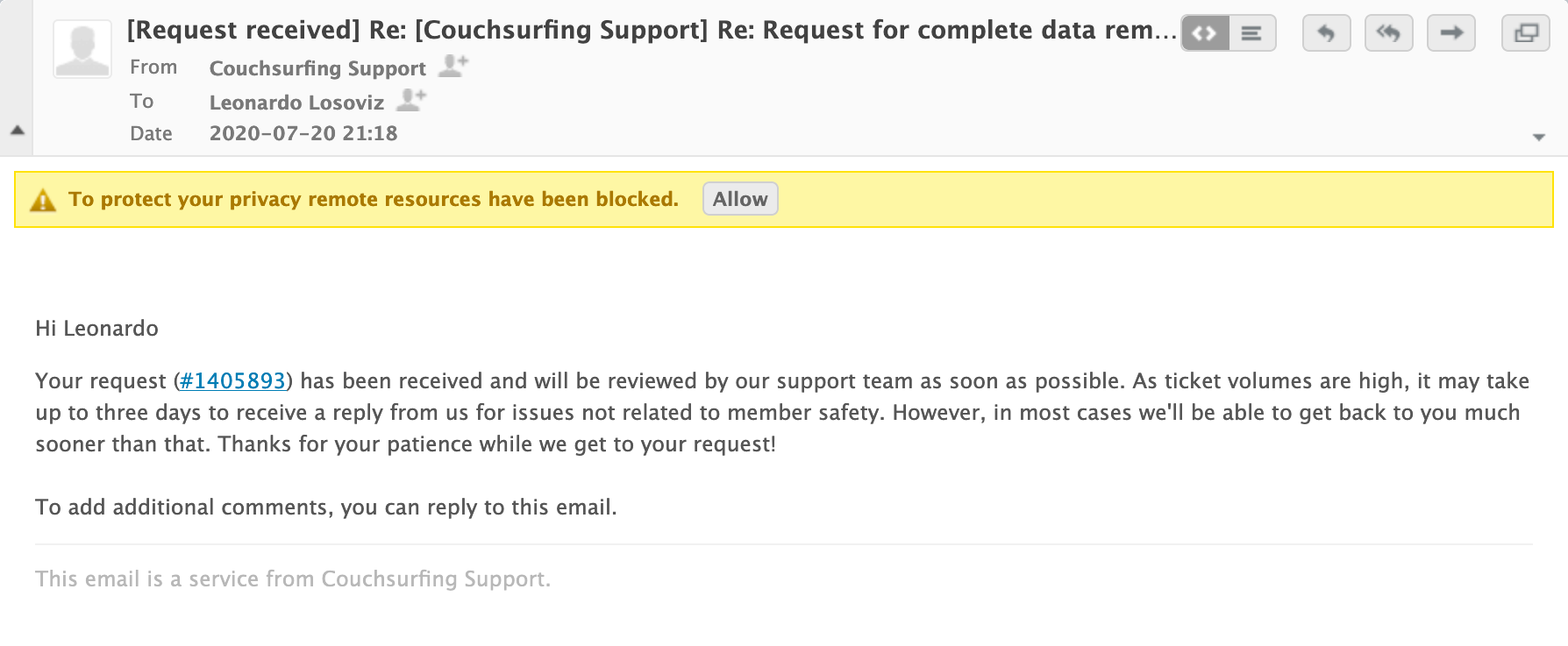

They deleted my account, and replied back saying that they were closing the ticket. As I noticed that my photos were still there, I replied to that same email, asking them, once again, to delete them:

To which I got a response, saying that my ticket would be handled within 3 days:

(Btw, if they deleted my data from their customer support tool, how does this system still know that my name is Leonardo? I hope they got it from the email headers, instead of lying about it.)

After one week, no response, my photos were still there. I wrote a new ticket to them:

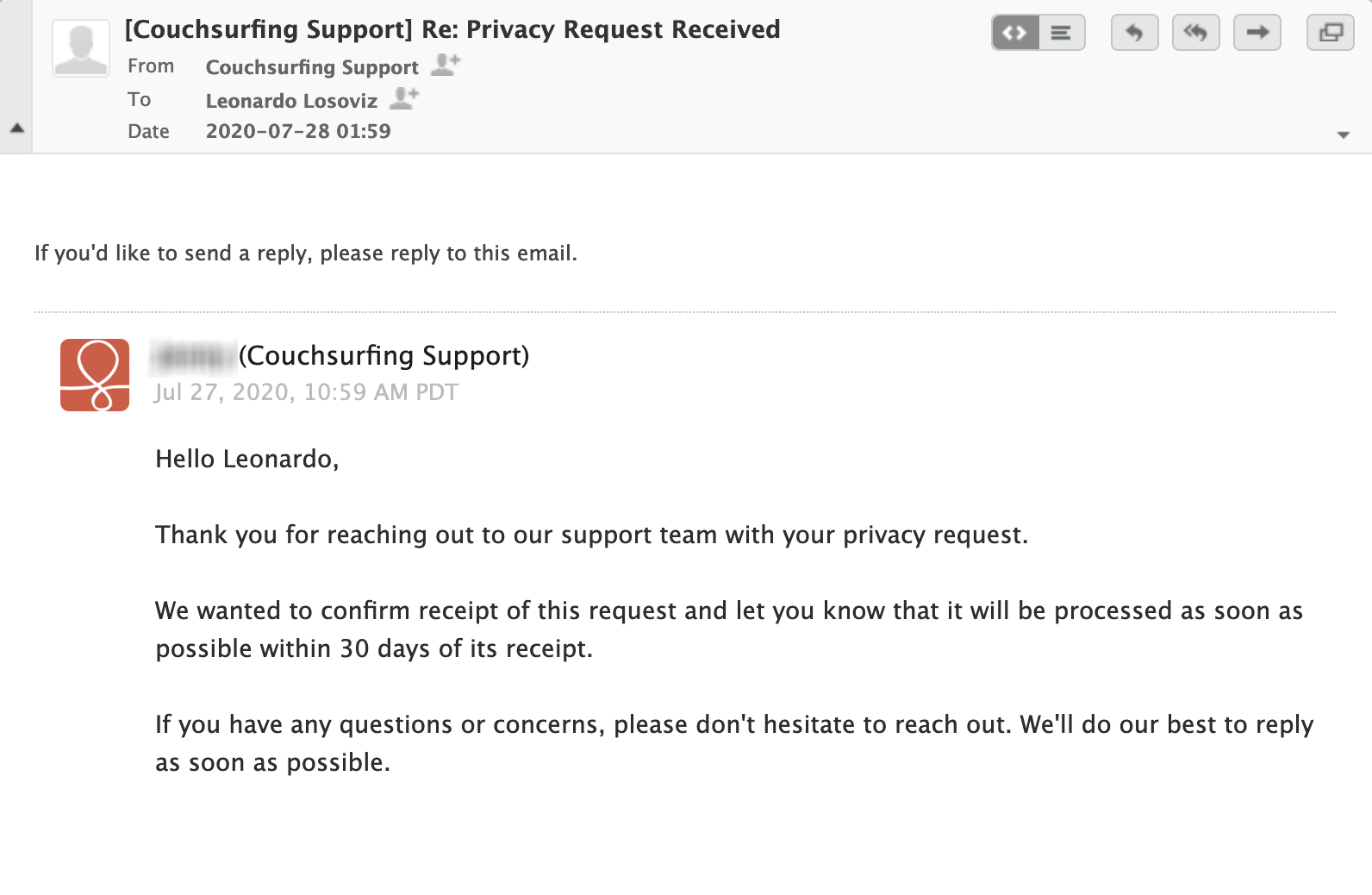

And what was their response? That they needed 1 month to delete my photos!!!

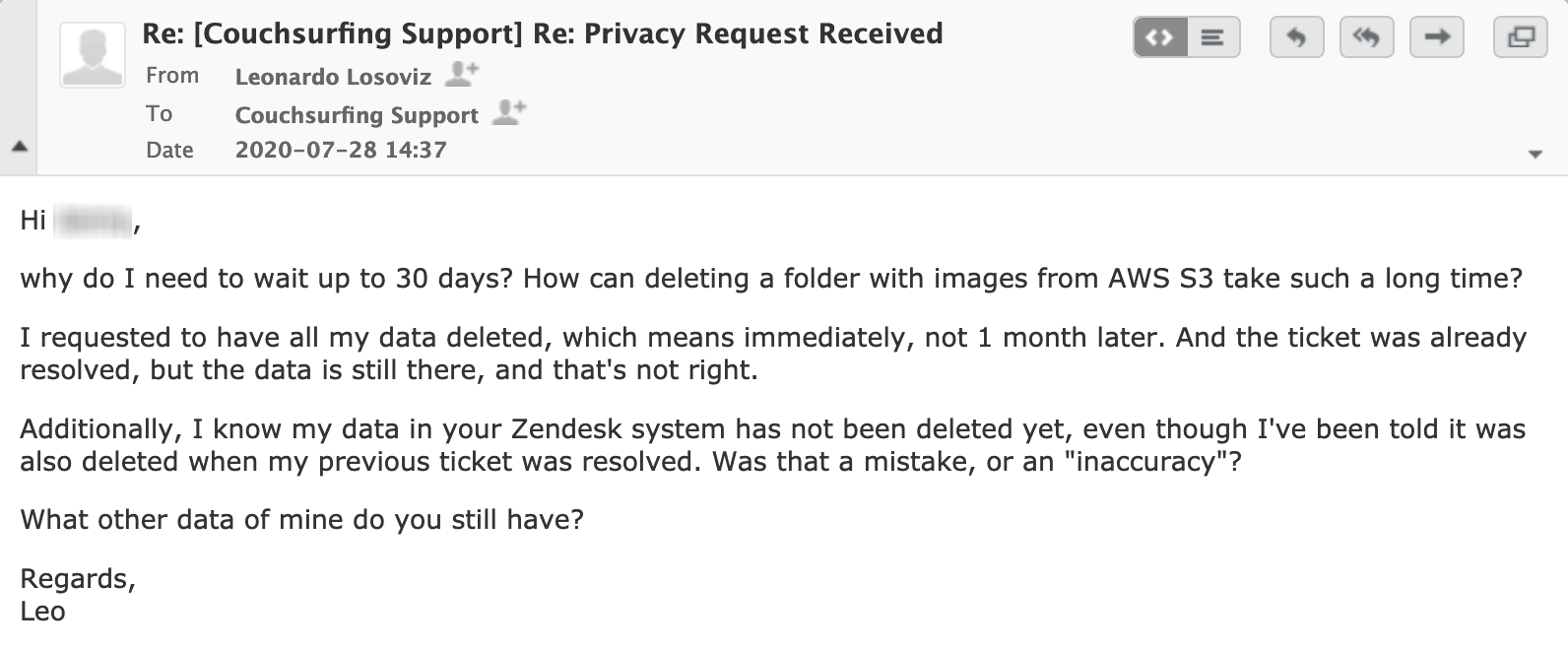

I replied back, asking why deleting a folder from AWS S3 (the hosting service from Amazon) takes such a long time:

I use AWS myself, and I know what it takes: Login to AWS => Click on the S3 link => Browse to the folder => Delete all the images => Delete the folder. Amount of time required: 5 minutes. 15 minutes max.

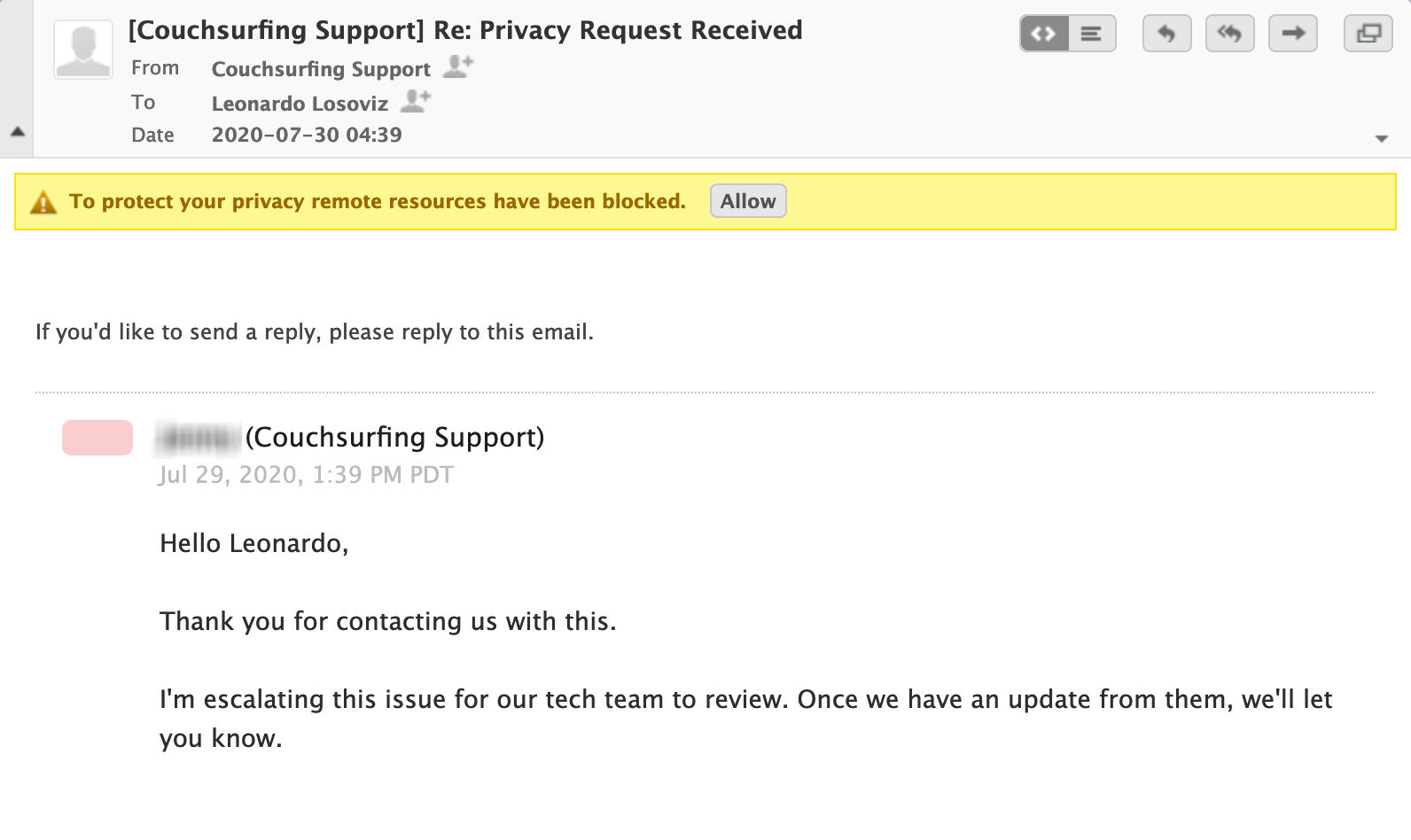

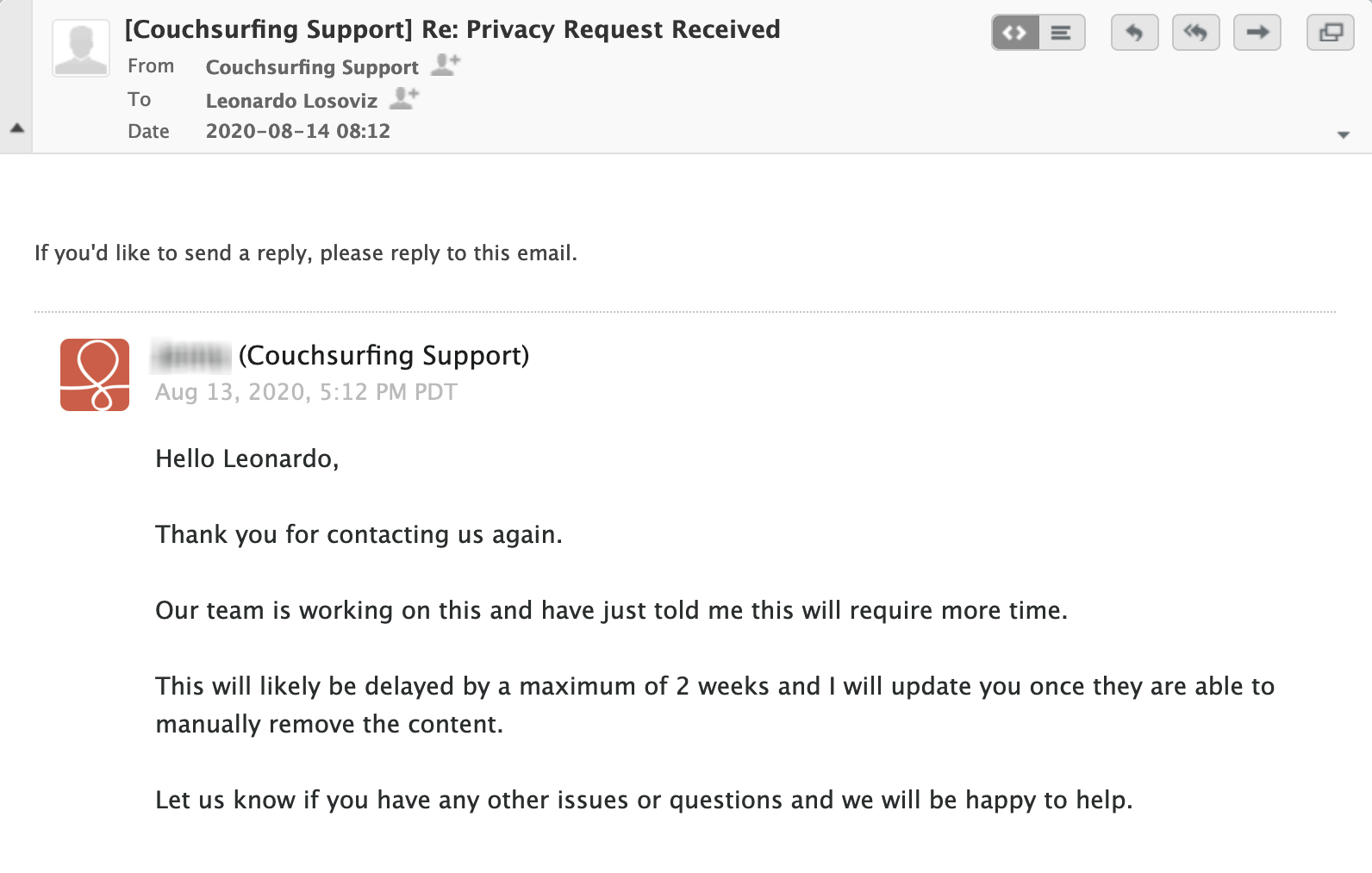

I got their response, saying they were escalating this issue:

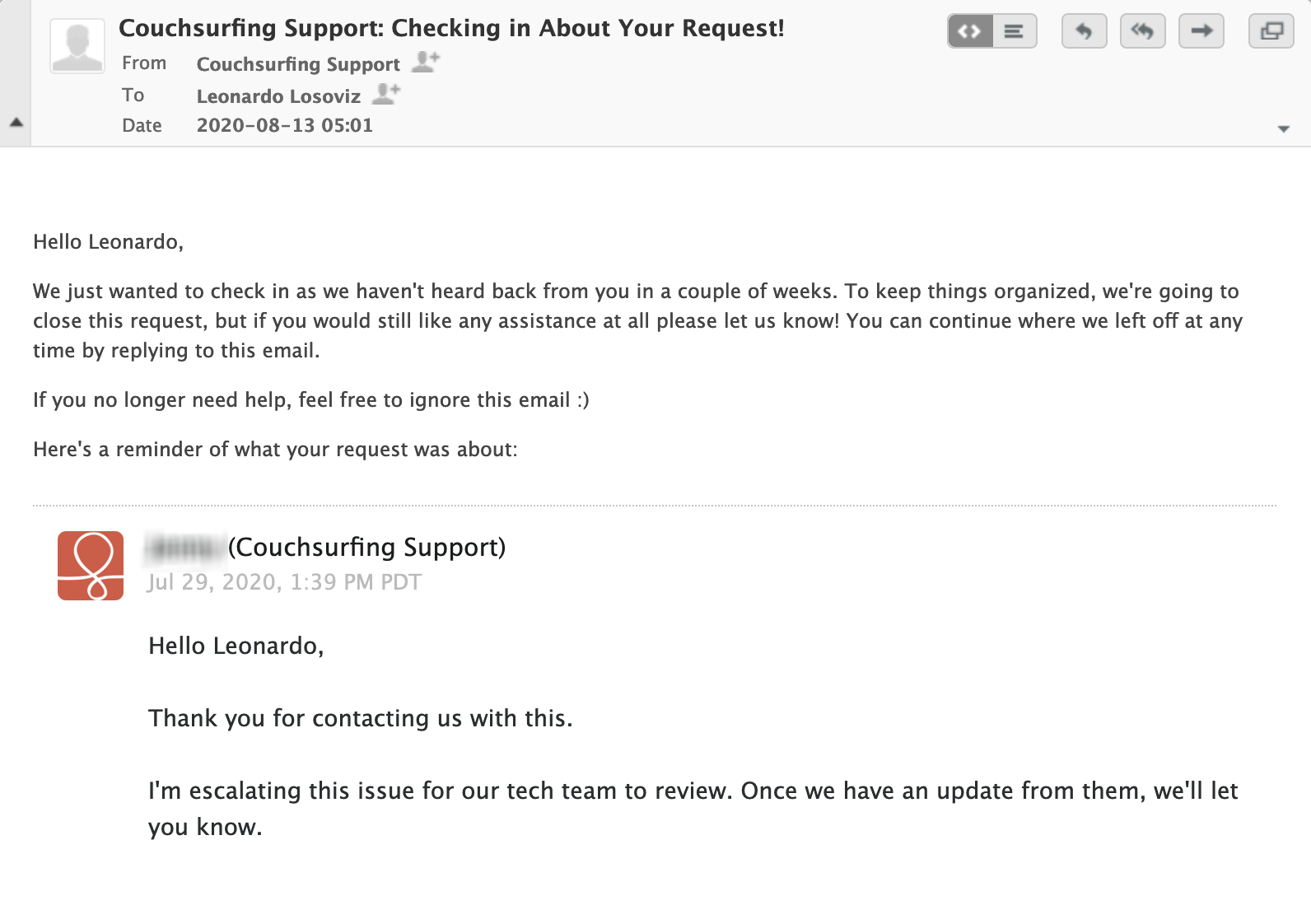

But, surprise surprise, they never contacted me again! And even more, 2 weeks later I got an automatic response, saying that my ticket was being closed because they hadn't heard back from me!:

I had to reply again, just to keep the ticket open:

And then I got a new response: they still needed 2 weeks to "manually" process my request:

That was the last interaction with them. These 2 weeks, I kept checking if my photos were there. Just before the 2 weeks were over, the photos had been deleted.

Escalation? What escalation? They took their whole time. Giving me a response after they had deleted my photos? Nops, that never happened. How did Zendesk know my name? They never explained. What other data do they still have about me? Who knows?

The main issue is: why did they have to manually delete my photos? When I requested to have my data deleted, that meant all my data, including the photos. If they have some automatic system to delete data, they seem to be cherry-picking what data to delete.

I had to write not once but twice, to have my data actually deleted, and wait and wait and wait.

My wife also wrote to them, twice, to have her photos deleted. But they never replied back to her, and up to this day her photos are still in their cloud.

I know what will happen. If they come across this blog post, the Couchsurfing guys will make some excuse, they will say it was a mistake, "we are very sorry, but look, we have deleted the images now, and we'll take better care in the future, because we care about our community, oh yes we love our community" (and then they'll repeat the word community 37 times).

I wouldn't be surprised that this is not an isolated case (and my wife's photos are still hosted by them, after she repeatedly requested for their deletion). I bet that they are only deleting the user data from their website database, but they are keeping some other assets, such as the user photos.

And this, through the GDPR legislation, is illegal.

I'm not European, so I can't do anything about it. But if you're European, and you have requested to delete your CS account and all its data, you can try to find out if they have deleted your images!.

If they still hold your photos (which is your data, not theirs), they could be punished through GDPR.

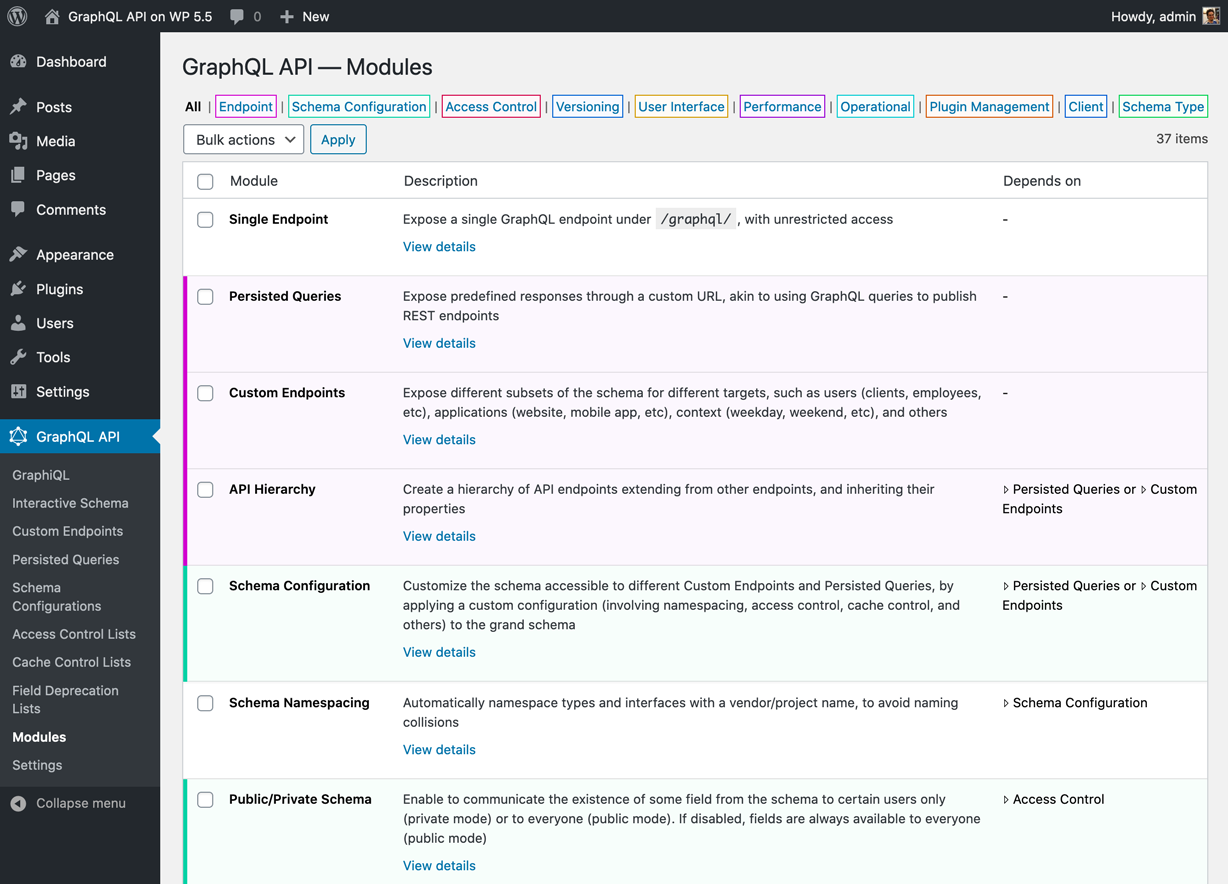

]]>The plugin now ships 37 modules from 10 categories, distinguished by color:

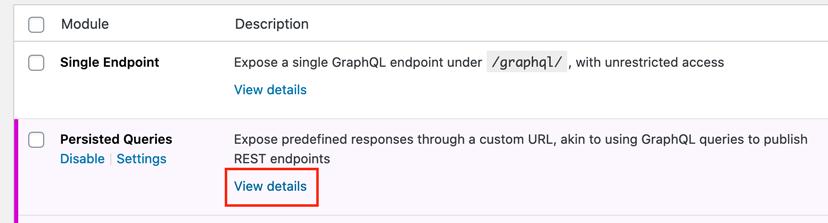

The docs have been implemented as Markdown, and they are opened when clicking on the View details link below each module:

Since Markdown can also be viewed directly in the GitHub repo, I implemented a cool feature: the same docs can be viewed within the plugin, or in the repo, from a single source of truth, but with 2 different presentations:

Check out the doc for Persisted Queries in the repo, and the same doc in the plugin:

Cool, isn't it? 😎

If you want to find out how it's done, the implementation code is here.

]]>It's been only 15 days since releasing the GraphQL API for WordPress, and I couldn't help myself, so this week I added yet a new feature: the server can now execute multiple queries in a single operation.

This is not query batching. When doing query batching, the GraphQL server executes multiple queries in a single request. But those queries are still independent from each other. They just happen to be executed one after the other, to avoid the latency from multiple requests.

In this case, all queries are combined together, and executed as a single operation. That means that they will reuse their state and their data. For instance, if a first query fetches some data, and a second query also accesses the same data, this data is retrieved only once, not twice.

This feature is shipped together with the @export directive, which enables to have the results of a query injected as an input into another query. Check out the query below, hit "Run" and select query with name "__ALL", and see how the user's name obtained in the first query is used to search for posts in the second query:

(GraphiQL currently does not allow to execute multiple operations. Hence, that __ALL query is a hack I added, as to tell the GraphQL server to execute all queries.)

This functionality is currently not part of the GraphQL spec, but it has been requested:

This feature improves performance, for whenever we need to execute an operation against the GraphQL server, then wait for its response, and then use that result to perform another operation. By combining them together, we are saving this extra request.

You may think that saving a single roundtrip is no big deal. Maybe. But this is not limited to just 2 queries: it can be chained, containing as many operations as needed.

For instance, this simple example chains a third query, and adds a conditional logic applied on the result from a previous query: if the post has comments, translate the post's title to French, but if it doesn't, show the name of the user. Click on the "Run" button below, see the results, then change variable offset to 1, run the query again, and see how the results change:

As we've seen, we could attempt to use GraphQL to execute scripts, including conditional statements and even loops.

GraphQL by PoP, which is the GraphQL engine over which the GraphQL API for WordPress is based, is a few steps ahead in providing a language to manipulate the operations performed on the query graph.

For instance, I have implemented a query which allows to send a newsletter to multiple users, fetching the content of the latest blog post and translating it to each person's language, all in a single operation!

Check the query below, which is using the PoP Query Language, an alternative to the GraphQL Query Language:

/?

postId=1&

query=

post($postId)@post.

content|

date(d/m/Y)@date,

getJSON("https://newapi.getpop.org/wp-json/newsletter/v1/subscriptions")@userList|

arrayUnique(

extract(

getSelfProp(%self%, userList),

lang

)

)@userLangs|

extract(

getSelfProp(%self%, userList),

email

)@userEmails|

arrayFill(

getJSON(

sprintf(

"https://newapi.getpop.org/users/api/rest/?query=name|email%26emails[]=%s",

[arrayJoin(

getSelfProp(%self%, userEmails),

"%26emails[]="

)]

)

),

getSelfProp(%self%, userList),

email

)@userData;

post($postId)@post<

copyRelationalResults(

[content, date],

[postContent, postDate]

)

>;

getSelfProp(%self%, postContent)@postContent<

translate(

from: en,

to: arrayDiff([

getSelfProp(%self%, userLangs),

[en]

])

),

renameProperty(postContent-en)

>|

getSelfProp(%self%, userData)@userPostData<

forEach<

applyFunction(

function: arrayAddItem(

array: [],

value: ""

),

addArguments: [

key: postContent,

array: %value%,

value: getSelfProp(

%self%,

sprintf(

postContent-%s,

[extract(%value%, lang)]

)

)

]

),

applyFunction(

function: arrayAddItem(

array: [],

value: ""

),

addArguments: [

key: header,

array: %value%,

value: sprintf(

string: "<p>Hi %s, we published this post on %s, enjoy!</p>",

values: [

extract(%value%, name),

getSelfProp(%self%, postDate)

]

)

]

)

>

>;

getSelfProp(%self%, userPostData)@translatedUserPostProps<

forEach(

if: not(

equals(

extract(%value%, lang),

en

)

)

)<

advancePointerInArray(

path: header,

appendExpressions: [

toLang: extract(%value%, lang)

]

)<

translate(

from: en,

to: %toLang%,

oneLanguagePerField: true,

override: true

)

>

>

>;

getSelfProp(%self%,translatedUserPostProps)@emails<

forEach<

applyFunction(

function: arrayAddItem(

array: [],

value: []

),

addArguments: [

key: content,

array: %value%,

value: concat([

extract(%value%, header),

extract(%value%, postContent)

])

]

),

applyFunction(

function: arrayAddItem(

array: [],

value: []

),

addArguments: [

key: to,

array: %value%,

value: extract(%value%, email)

]

),

applyFunction(

function: arrayAddItem(

array: [],

value: []

),

addArguments: [

key: subject,

array: %value%,

value: "PoP API example :)"

]

),

sendByEmail

>

>(Please don't be shocked by this complex query! The PQL language is actually even simpler than GraphQL, as can be seen when put side-by-side.)

To run the query, there's no need for GraphiQL: it's URL-based, so it can be executed via GET, and a normal link will do. Click here and marvel: query to create, translate and send newsletter (this is a demo, so I'm just printing the content on screen, not actually sending it by email 😂).

What is going on there? The query is a series of operations executed in order, with each passing its results to the succeeding operations: fetching the list of emails from a REST endpoint, fetching the users from the database, obtaining their language, fetching the post content, translating the content to the language of each user, and finally sending the newsletter.

To check it out in detail, I've written a step-by-step description of how this query works.

You may think that you don't need to implement a newsletter-sending service. But that's not the point. The point is that, if you can implement this, you can implement pretty much anything you will ever need.

The query above uses a couple of features available in PQL but not in GQL, which I have requested for the GraphQL spec:

Sadly, I've been told that these features will most likely not be add to the spec.

Hence, GraphQL cannot implement the example, yet. But through executing multiple queries in a single operation, @export, and powerful custom directives, it can certainly support novel use cases.

vendor/, are not stored in the GitHub repo, because they do not belong there.

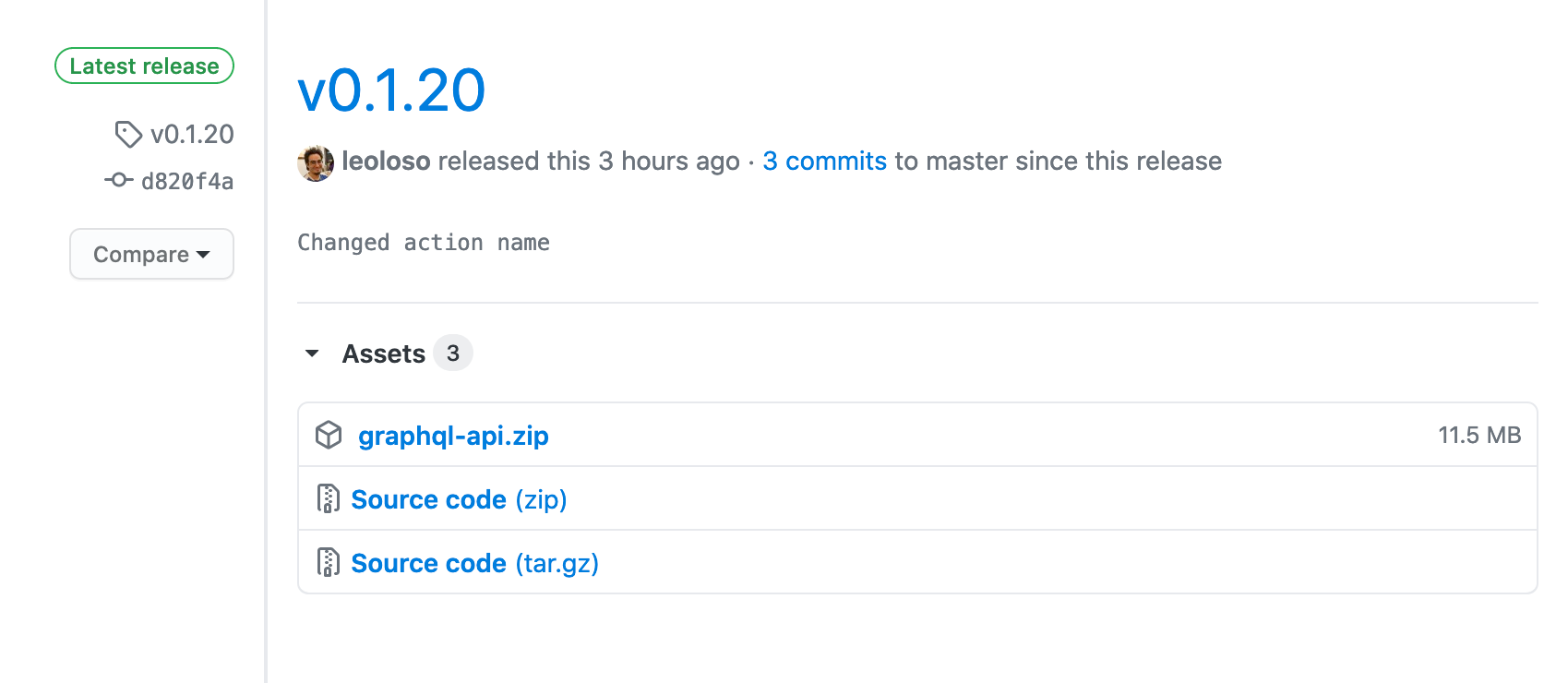

However, these dependencies must be inside the .zip file when installing the plugin in the WordPress site. Then, when and how do we add them into the release?

The answer is to create a GitHub action which, upon tagging the code, will automatically create the .zip file and upload it as a release asset.

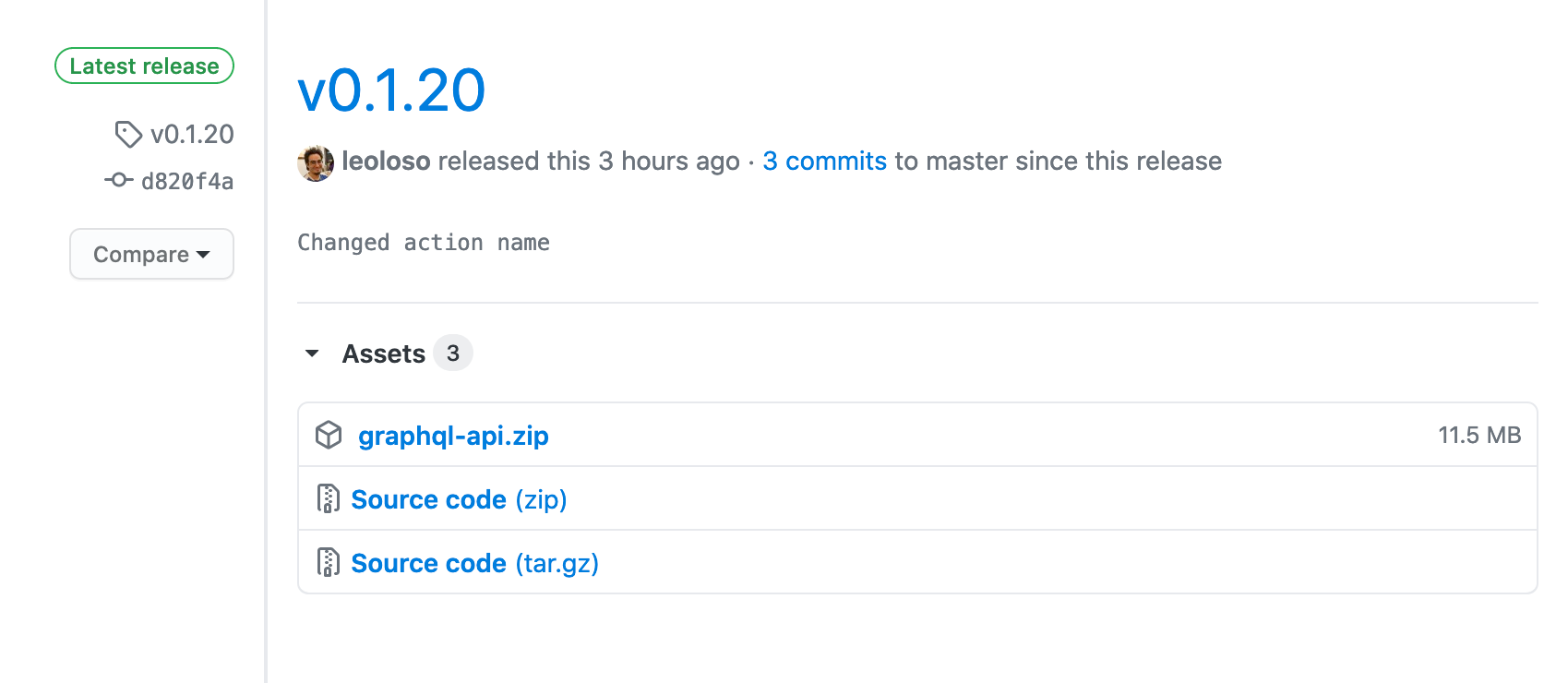

The end result looks like this: In addition to the Source code (zip) (which does not contain the PHP dependencies), the release assets contain a gatographql.zip file, which does have the PHP dependencies, and is the actual plugin to install in the WordPress site:

In this post, I'll demonstrate step-by-step the GitHub action to build the plugin.

Before attempting to create my own action, I tried the following ones:

None of them worked for my case. Concerning 10up's action, its purpose is to upload the plugin release from GitHub to WordPress' SVN. This can be very useful, saving us plenty of time by avoiding to do this bureaucratic conversion manually. However, I can't use it, because my plugin is not in the WordPress plugin directory yet (for the time being, it's available only through GitHub). I attempted to use it just to generate the .zip file, without uploading to the SVN, but nope, it doesn't work.

upload-release-asset should have been suitable for my use case, however I couldn't make it work properly, because this action creates a release, which is then uploaded as an asset. However, when tagging the source code (say, with v0.1.5), the release is already created! Hence, this tool would create yet-another release, which is far from ideal. And even worse, it requires parameter tag_name, but this tag can't be the same used for tagging the source code, or it gives a duplicated error. Then, my source code was being tagged twice: first manually as v0.1.5, and then automatically as plugin-v0.1.5. Very far from ideal.

So, I created my own action.

The action is this one:

name: Generate Installable Plugin, and Upload as Release Asset

on:

release:

types: [published]

jobs:

build:

name: Upload Release Asset

runs-on: ubuntu-latest

steps:

- name: Checkout code

uses: actions/checkout@v2

- name: Build project

run: |

composer install --no-dev --optimize-autoloader

mkdir build

- name: Create artifact

uses: montudor/action-zip@v0.1.0

with:

args: zip -X -r build/gatographql.zip . -x *.git* node_modules/\* .* "*/\.*" CODE_OF_CONDUCT.md CONTRIBUTING.md ISSUE_TEMPLATE.md PULL_REQUEST_TEMPLATE.md *.dist composer.* dev-helpers** build**

- name: Upload artifact

uses: actions/upload-artifact@v2

with:

name: graphql-api

path: build/gatographql.zip

- name: Upload to release

uses: JasonEtco/upload-to-release@master

with:

args: build/gatographql.zip application/zip

env:

GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}The workflow is like this:

The action, called "Generate Installable Plugin, and Upload as Release Asset", is executed whenever a new release is created, i.e. whenever I tag my code, as defined in the on entry:

name: Generate Installable Plugin, and Upload as Release Asset

on:

release:

types: [published]The computer (called a "runner") where it runs is a Linux:

jobs:

build:

name: Upload Release Asset

runs-on: ubuntu-latestThe first step is to check out the source code from the repo:

steps:

- name: Checkout code

uses: actions/checkout@v2Then, it builds the WordPress plugin, by having Composer download the PHP dependencies and store them under vendor/. This is the crucial step, for which this action exists.

Because this is the plugin for production, we can attach options --no-dev --optimize-autoloader to optimize the release:

- name: Build project

run: |

composer install --no-dev --optimize-autoloaderNext, we will create the .zip file, stored under a build/ folder. We first create the folder:

mkdir buildAnd then make use of montudor/action-zip to zip the files into build/gatographql.zip.

In this step, I also exclude those files and folder which are needed when coding the plugin, but are not needed in the actual final plugin:

.git, .gitignore, etc)node_modules/ folder (there should be none, but just in case...)phpcs.xml.dist, phpstan.neon.dist and phpunit.xml.dist)composer.json and composer.lockCODE_OF_CONDUCT.md, CONTRIBUTING.md, ISSUE_TEMPLATE.md and PULL_REQUEST_TEMPLATE.mdbuild/, which is created only to store the .zip filedev-helpers/, which contains helpful scripts for development - name: Create artifact

uses: montudor/action-zip@v0.1.0

with:

args: zip -X -r build/gatographql.zip . -x *.git* node_modules/\* .* "*/\.*" CODE_OF_CONDUCT.md CONTRIBUTING.md ISSUE_TEMPLATE.md PULL_REQUEST_TEMPLATE.md *.dist composer.* dev-helpers** build**After this step, the release will have been created as build/gatographql.zip. Next, as an optional step, we upload it as an artifact to the action:

- name: Upload artifact

uses: actions/upload-artifact@v2

with:

name: graphql-api

path: build/gatographql.zipAnd finally, we make use of JasonEtco/upload-to-release upload the .zip file as a release asset, under the release package which triggered the GitHub action. The secret secrets.GITHUB_TOKEN is implicit, GitHub already sets it up for us:

- name: Upload to release

uses: JasonEtco/upload-to-release@master

with:

args: build/gatographql.zip application/zip

env:

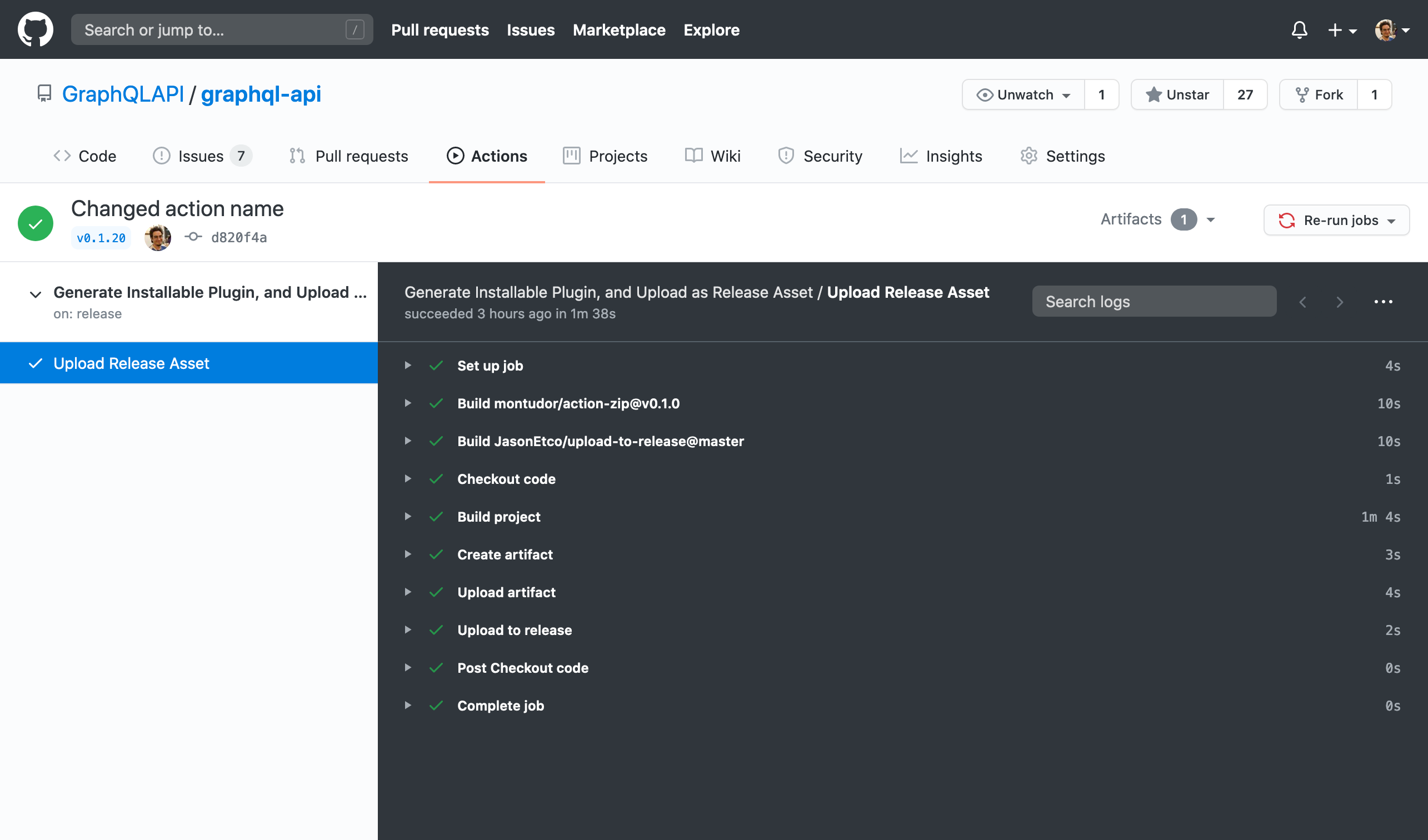

GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}When tagging the source code with tag v0.1.20, the action is triggered, and we can see in real-time what the process is doing. Once finished, if everything went fine, all the steps executed in the workflow will have a beautiful ✅ mark:

Now, heading to the releases for tag v0.1.20, it displays a link to the newly-create release graphql-api:

Hurray!

]]>Why build your own when one already , and is developed by a gatsby dev?

I reproduce my response here.

I can reply to this question from 3 different angles:

I actually started working on this project much earlier than WPGraphQL. It's just that, when I started with it, I didn't know it would eventually become a GraphQL server for WordPress, or even a GraphQL server!

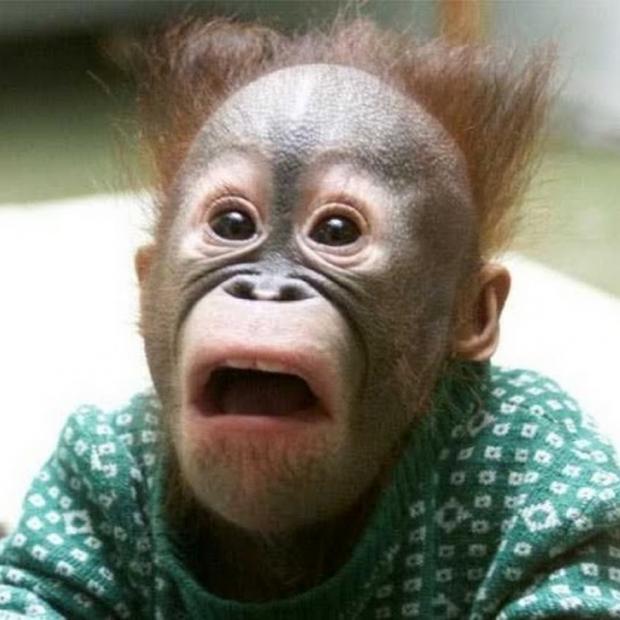

I started the mental process of thinking about my solution after I published this article on Smashing Magazine, describing an architecture of server-side components to load data:

https://www.smashingmagazine.com/2019/01/introducing-component-based-api/

This article describes the foundation of how my GraphQL server works. It was published in January 2019, before Jason Bahl was even hired by Gatsby.

Around then I learnt about GraphQL, and how it returns exactly the queried data. And with my architecture, I was already solving that problem, and beautifully, since it doesn't employ a graph, so it's super performant.

So then, I had no alternative towards myself than to go ahead, and implement the GraphQL server. It took around 1 year to do. But in the process, I built it to be super super powerful, as I've been trying to show in my series of articles for LogRocket, and sharing in this channel [/r/graphql in Reddit].

And concerning the component-based architecture, when did I start working on it? Its repo, https://github.com/GatoGraphQL/GatoGraphQL, was actually published in September 2016!

So I was working on my GraphQL server way back before I even knew about the existence of GraphQL.

Btw, Jason is doing a great job with WPGraphQL. Launching an alternative to his project is not about posing a challenge. We just happen, by chance, to have implemented 2 different solutions to the same problem...

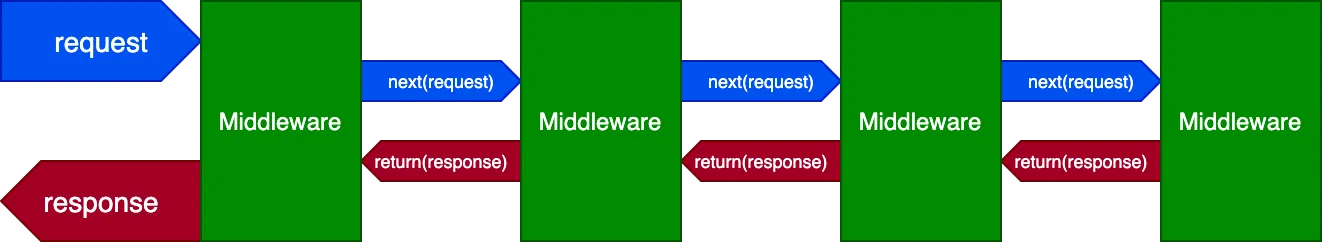

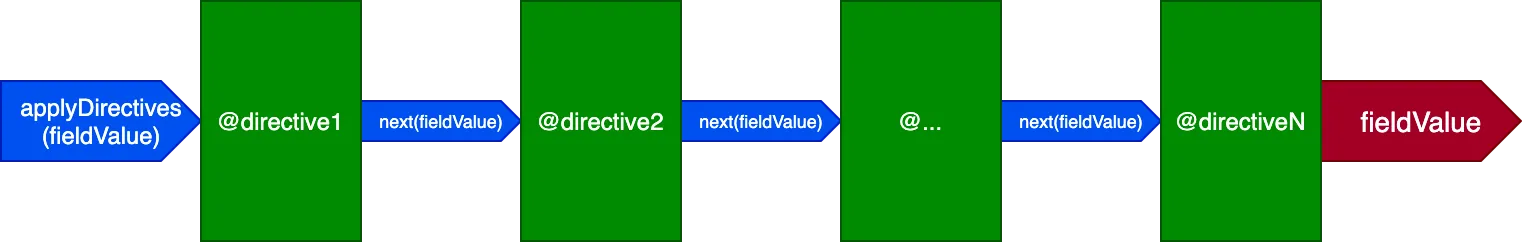

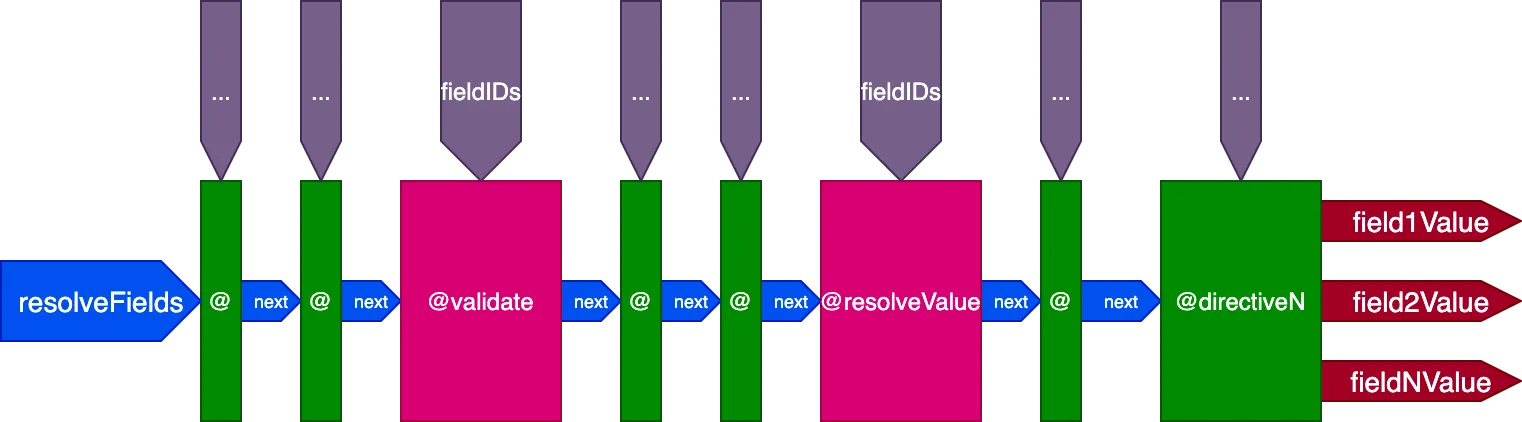

I don't want to sound arrogant, I truly do not want. But my solution is better. Indeed, I meant it in my original post when I said this is serious GraphQL business. I'm extremely proud of what features it supports and, the best of all, is that the features it can potentially implement are boundless, thanks to the directive pipeline architecture that I described here:

https://blog.logrocket.com/treating-graphql-directives-as-middleware/

WPGraphQL is, on the opposite, quite limited. Its support for directives is quite paltry. This is because it relies on webonyx/graphql-php, which is OK, but nothing great.

I have just published a blog post, comparing my plugin to both WP REST API and WPGraphQL, and explaining what makes my plugin special from a feature-point-of-view. Please read it:

https://leoloso.com/posts/introducing-the-graphql-api-for-wordpress/

I understand the feeling that, if somebody is working on an open source project about the topic I want to implement, then I should contribute there instead of doing my own thing.

But that doesn't apply if your idea to solve the problem is completely different, and can solve the problem better. Imagine if we had declared the problem of search solved by 1994, so that Google would not have been created, and we'd still be searching with Altavista nowadays.

In addition: creating another project brings innovation and improvement all across. Now that my project is out, WPGraphQL has to improve. I'm providing persisted queries. They are not. They will need to implement it, or risk having their users switch to my plugin. Can they implement it? I hope their architecture supports it (I guess it should), but I don't know for sure. What if I had contributed to their project, instead of working on mine? Well, then I couldn't have created 1/10th of what I did using my own architecture.

Have I convinced you with my explanation? 😀

]]>Update 23/01: The GraphQL API for WordPress has its own site now: gatographql.com.

Yesterday I launched the project I've put all my efforts into: the GraphQL API for WordPress, a plugin which enables to retrieve data from a WordPress site using the increasingly popular GraphQL API.

I've been developing this plugin full time for most of the last 12 months. And, taken together with GraphQL by PoP (the CMS-agnostic GraphQL server in PHP, on which it is based), I've spent several years into this project.

So it's a great relief and pleasure to be finally able to release it to the world. In this blog post I explain all about it.

Before anything, let's tackle the elephant in the room. You may be thinking: "Wait a second. Aren't there already API solutions for WordPress?"

Yes, there are. The 2 most popular solutions are WP REST API, which is already part of WordPress core, and WPGraphQL, a plugin which is also based in GraphQL.

"I thought so! But aren't these APIs already good?"

Yes, they are indeed good. The WP REST API is kept always up-to-date with the latest requirements from the WordPress project, most notably concerning Gutenberg. And WPGraphQL, even though it hasn't been published to the WordPress directory yet, has become more stable during the past year, gained an increasing community of users, and is approaching its 1.0 version.

"So then, why do we need yet another solution?"

Possibly, you do not need another solution. If whichever solution you're already using satisfies all your needs, and doesn't give you any trouble at all, then stay there.

But if your solution doesn't fully satisfy your needs, because it's not so fast, secure or friendly to use; it takes plenty of time to code or write documentation for it; it has limitations that hinder your application; or any other reason at all... then hear me out.

These are, I believe, GraphQL API for WordPress's two killer features:

Persisted queries use GraphQL to provide pre-defined enpoints as in REST, obtaining the benefits of both APIs.

With REST, you create multiple endpoints, each returning a pre-defined set of data.

| Advantages |

|---|

| ✅ It's simple |

✅ Accessed via GET or POST |

| ✅ Can be cached on the server or CDN |

| ✅ It's secure: only intended data is exposed |

| Disadvantages |

|---|

| ❌ It's tedious to create all the endpoints |

| ❌ A project may face bottlenecks waiting for endpoints to be ready |

| ❌ Producing documentation is mandatory |

| ❌ It can be slow (mainly for mobile apps), since the application may need several requests to retrieve all the data |

With GraphQL, you provide any query to a single endpoint, which returns exactly the requested data.

| Advantages |

|---|

| ✅ No under/over fetching of data |

| ✅ It can be fast, since all data is retrieved in a single request |

| ✅ It enables rapid iteration of the project |

| ✅ It can be self-documented |

| ✅ It provides an editor for the query (GraphiQL) that simplifies the task |

| Disadvantages |

|---|

❌ Accessed only via POST |

| ❌ It can't be cached on the server or CDN, making it slower and more expensive than it could be |

| ❌ It may require to reinvent the wheel, such as uploading files or caching |

| ❌ Must deal with additional complexities, such as the N+1 problem |

Persisted queries combine these 2 approaches together:

Hence, we obtain multiple endpoints with predefined data, as in REST, but these are created using GraphQL, obtaining the advantages from each:

| Advantages |

|---|

✅ Accessed via GET or POST |

| ✅ Can be cached on the server or CDN |

| ✅ It's secure: only intended data is exposed |

| ✅ No under/over fetching of data |

| ✅ It can be fast, since all data is retrieved in a single request |

| ✅ It enables rapid iteration of the project |

| ✅ It can be self-documented |

| ✅ It provides an editor for the query (GraphiQL) that simplifies the task |

And avoiding their disadvantages:

| Disadvantages |

|---|

POST |

Check out this video on creating a new persisted query:

The GraphQL single endpoint, which can return any piece of data accessible through the schema, could potentially allow malicious actors to retrieve private information. Hence, we must implement security measures to protect the data.

The GraphQL API for WordPress provides several mechanisms to protect the data:

👉 We can decide to only expose data through persisted queries, and completely disable access through the single endpoint (indeed, it is disabled by default).

👉 We can create custom endpoints, each tailored to different users (such as one or another client).

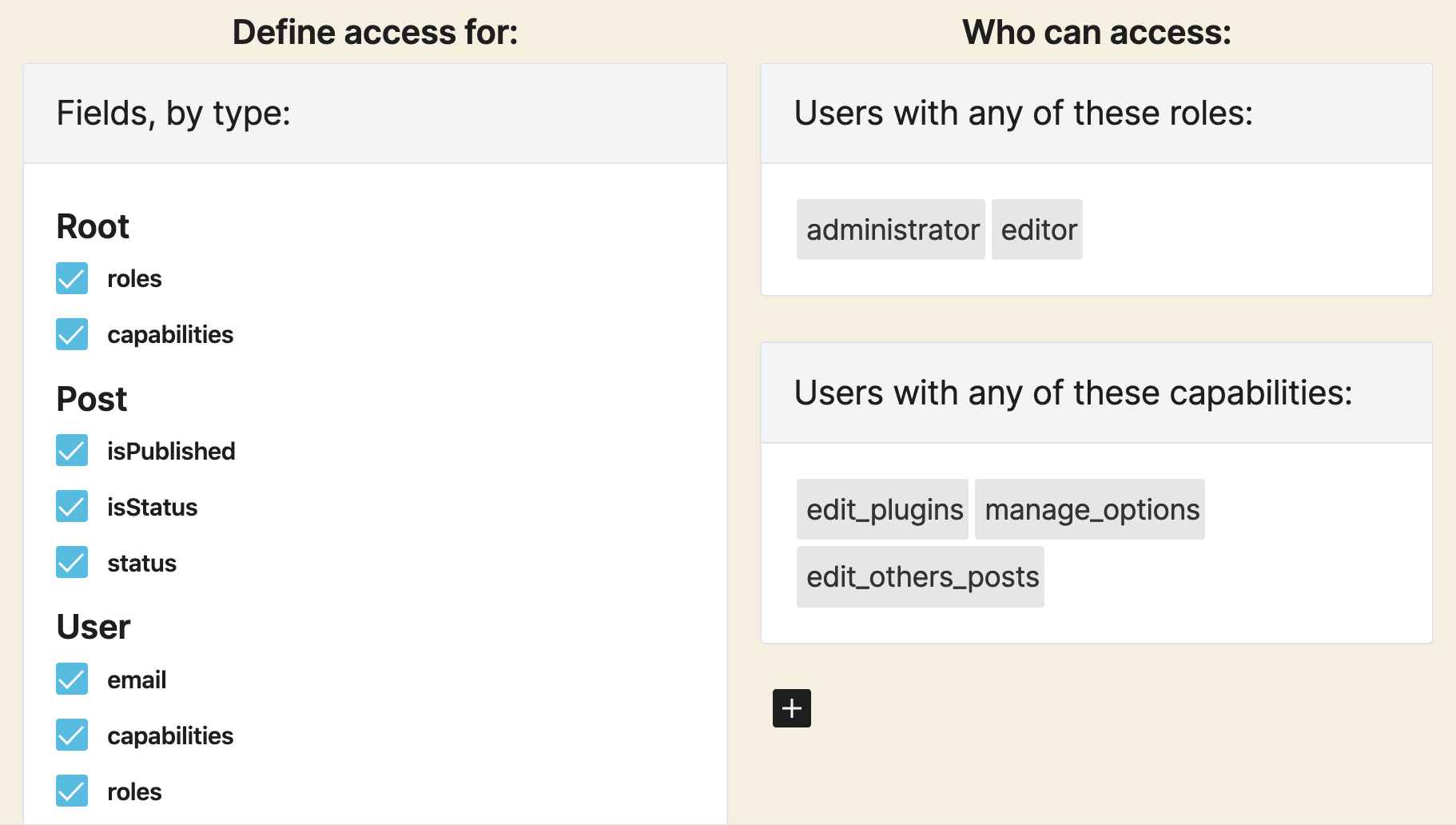

👉 We can set permissions to each field in the schema through Access Control Lists, defining rules such as: Is the user logged-in or not? Does the user have a certain role or capability? Or any custom rule.

👉 We can define the API to be either public or private:

In the public API, the fields in the schema are exposed, and when the permission is not satisfied, the user gets an error message with a description of why the permission was rejected.

In the private API, the schema is customized to every user, containing only the fields available to him or her, and so when attempting to access a forbidden field, the error message says that the field doesn't exist.

Here is an overview of the features shipped with the first version of the plugin.

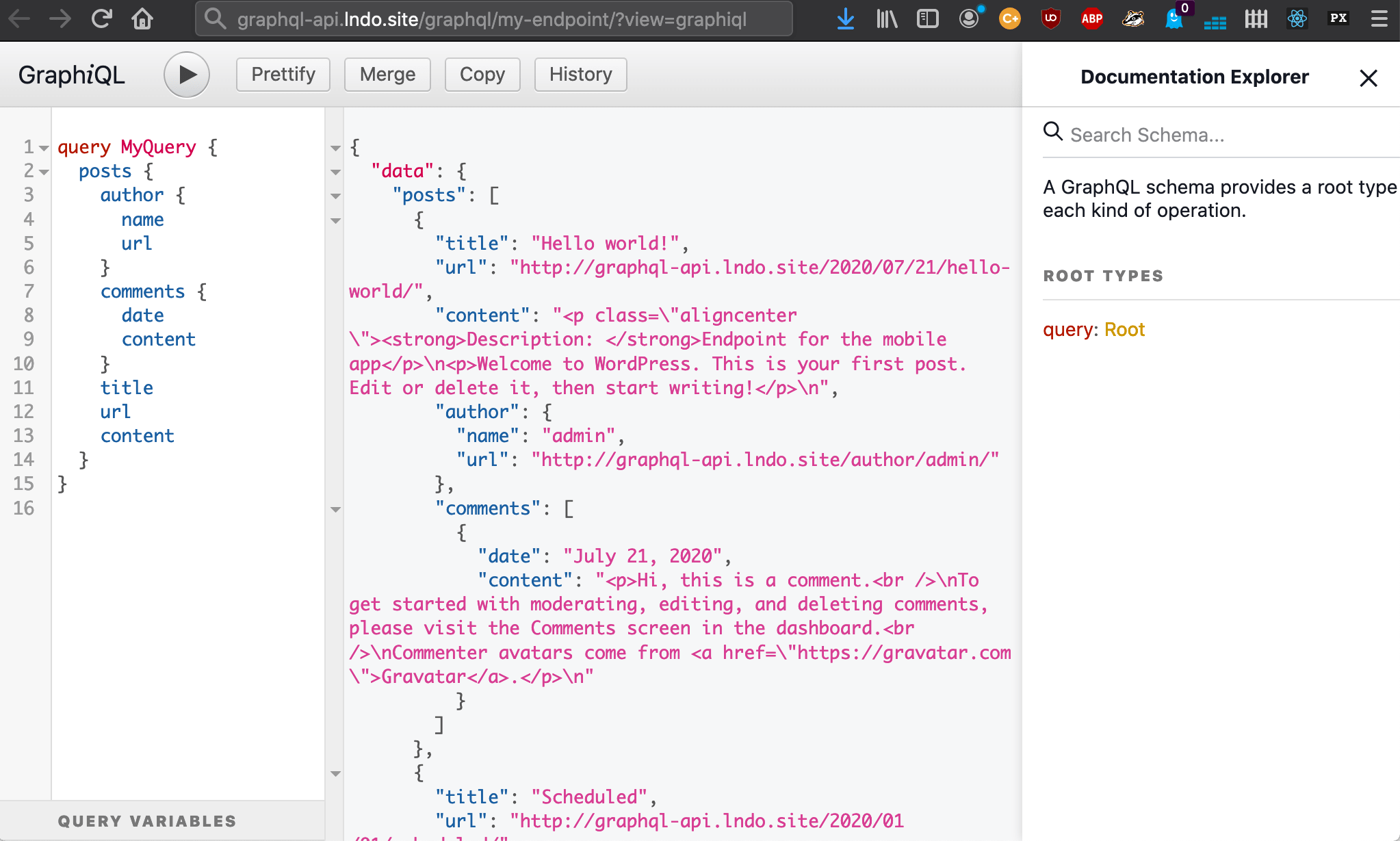

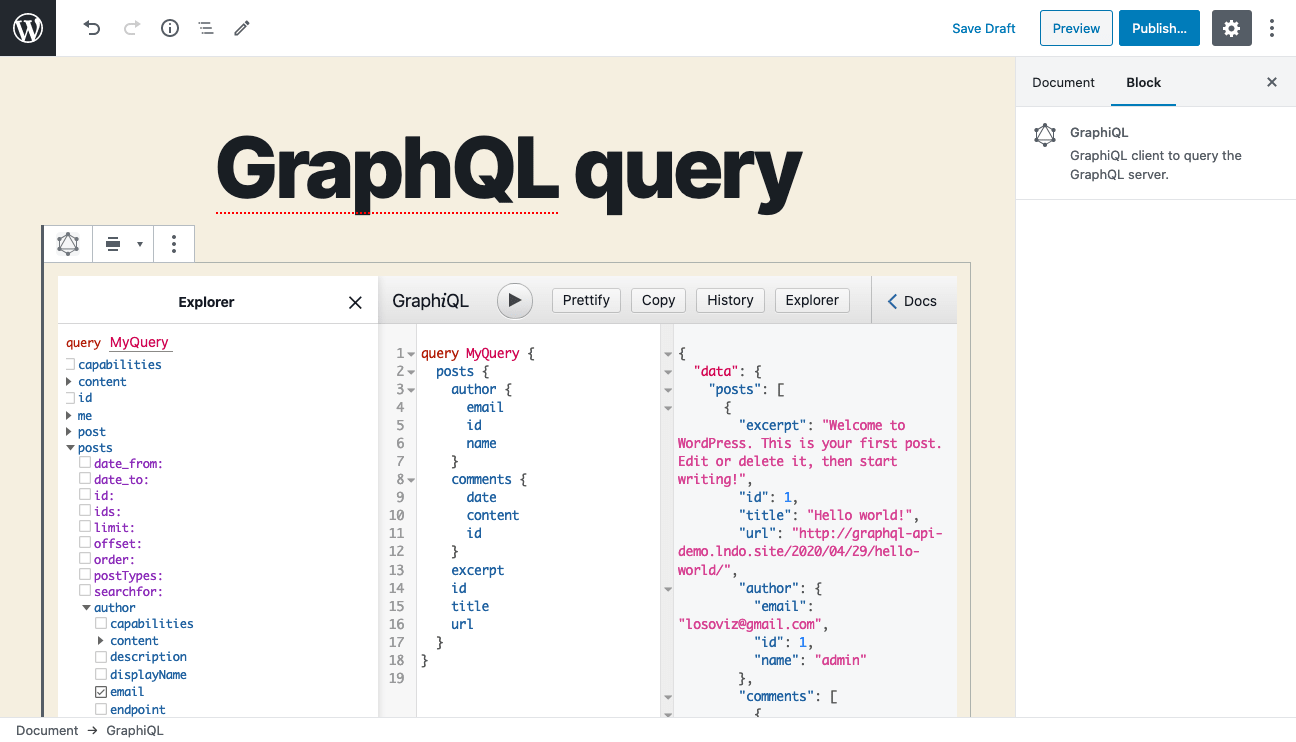

GraphiQL is a user-friendly client to create GraphQL queries.

The GraphiQL Explorer is an interactive tool attached to GraphiQL, that allows to create the query by point-and-clicking on fields.

These 2 tools are embedded in the plugin, making it very easy to create the queries:

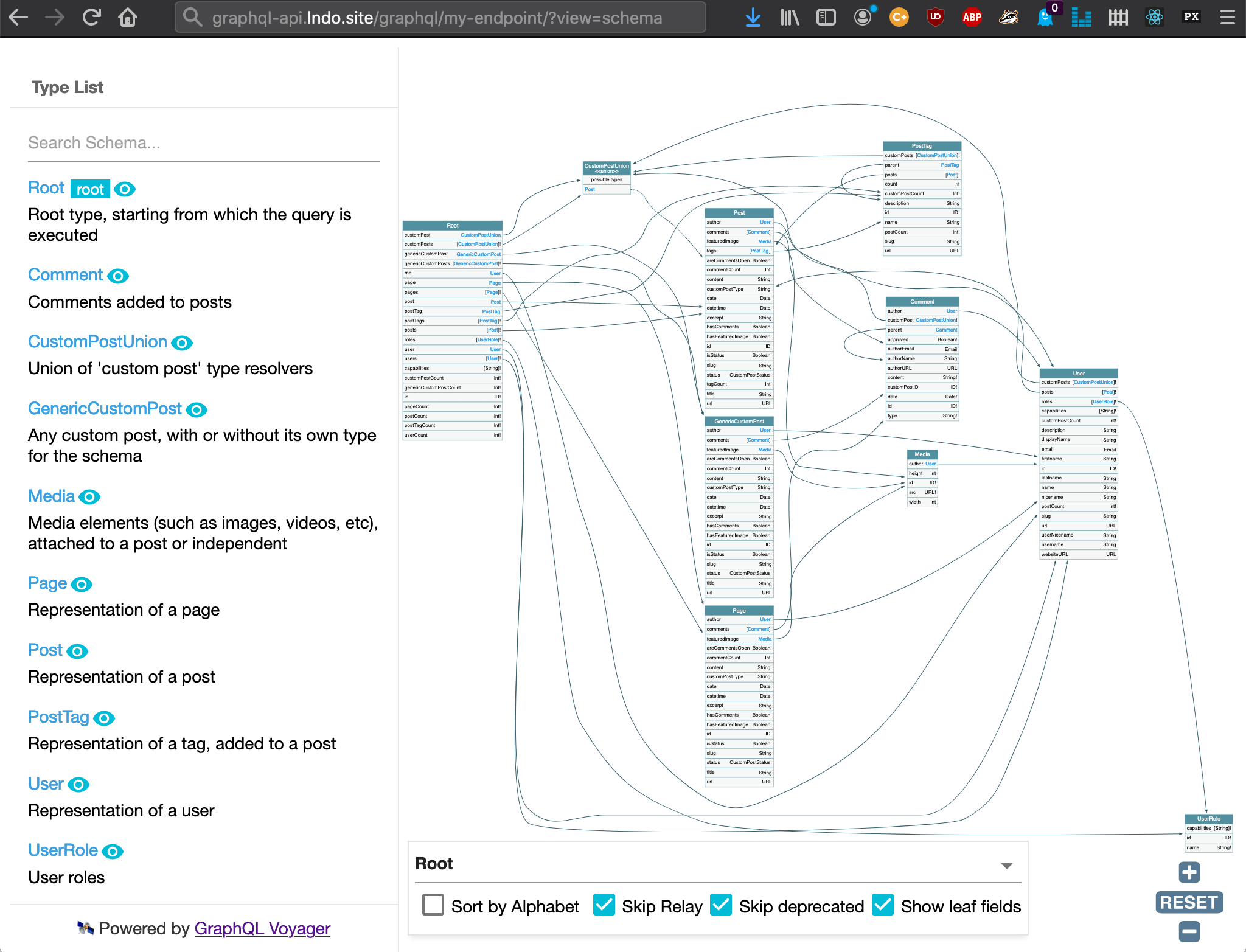

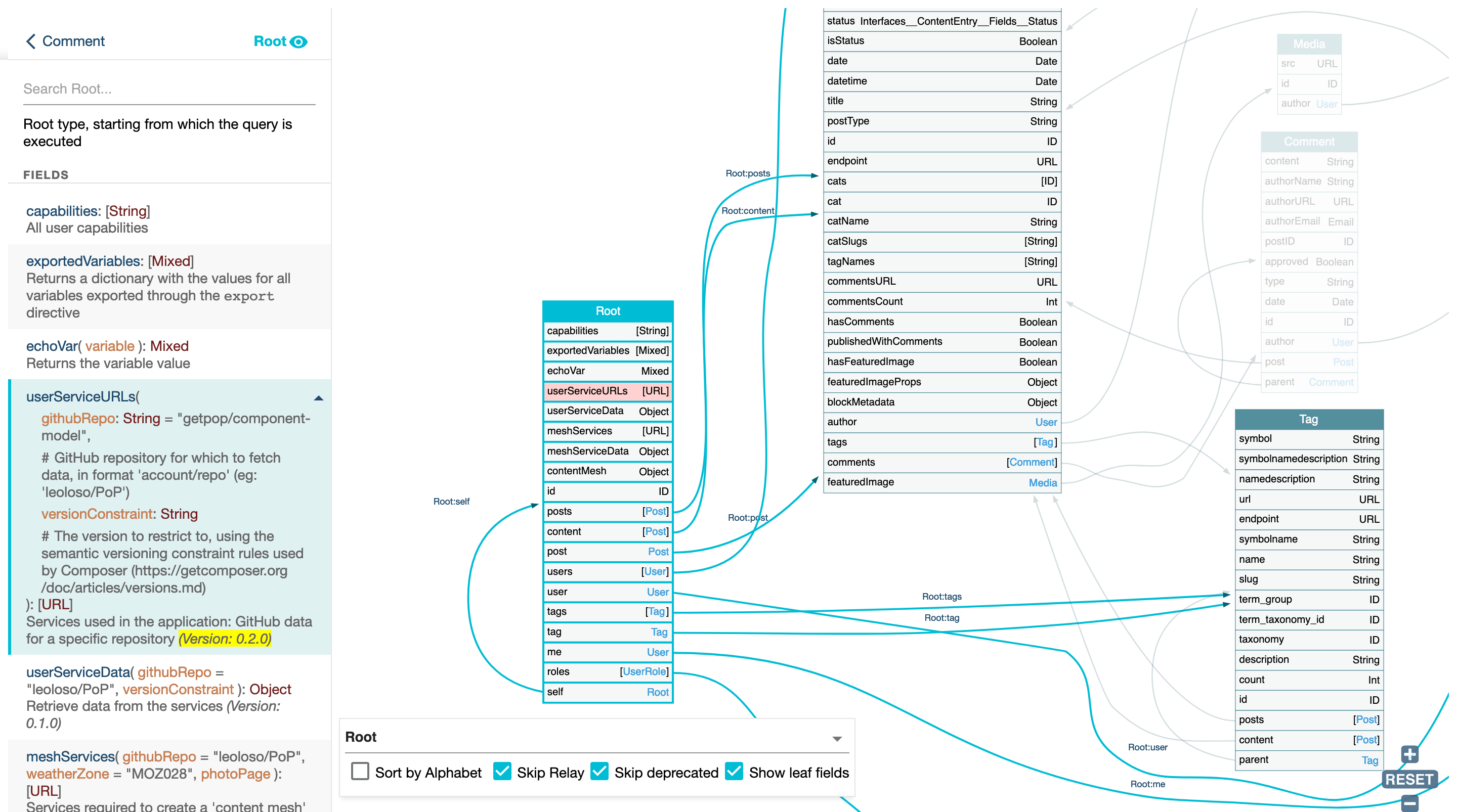

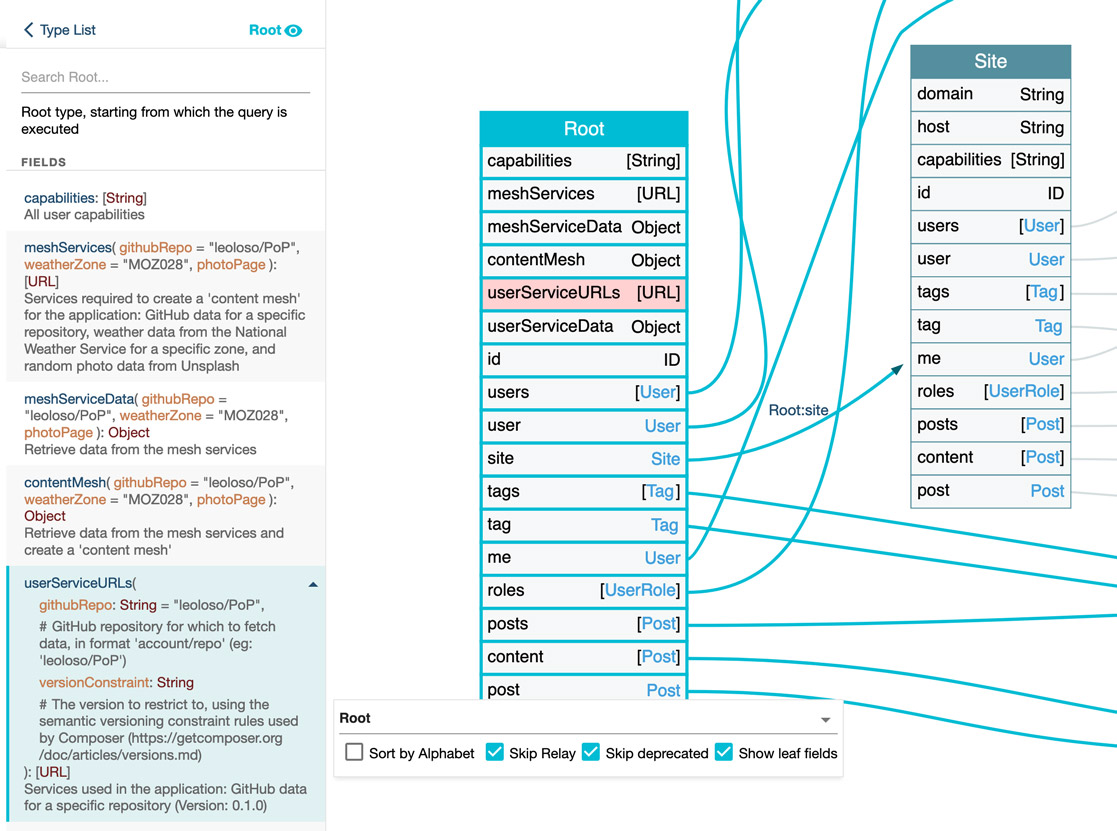

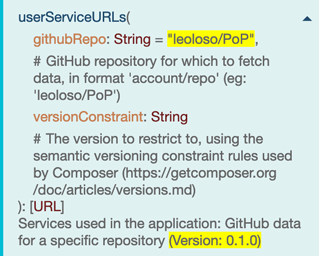

GraphQL Voyager is a tool that enables to explore the GraphQL schema:

As already explained.

A custom endpoint with a specific schema configuration can be created for any target, such as:

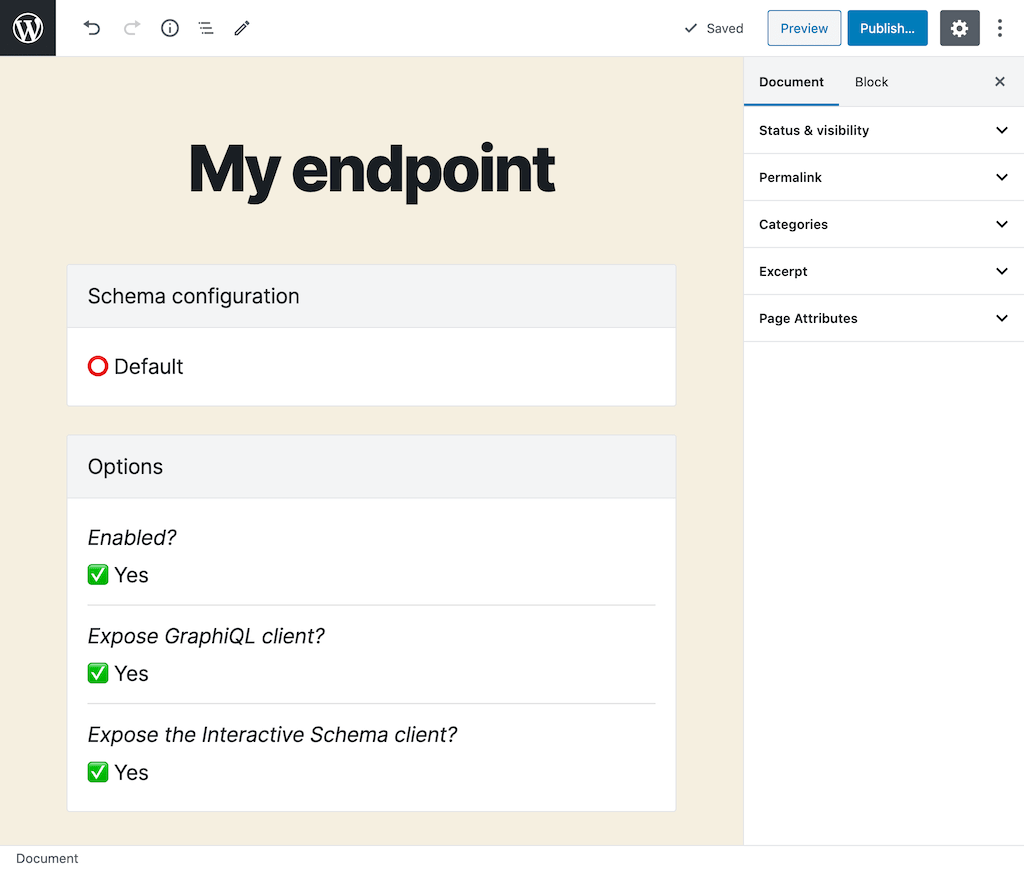

The custom endpoint is a Custom Post Type, and its slug becomes the endpoint. An endpoint with title "My endpoint" and slug my-endpoint will:

/graphql/my-endpoint//graphql/my-endpoint/?view=graphiql/graphql/my-endpoint/?view=schema

Every custom endpoint and persisted query can select a schema configuration, containing the sets of Access Control Lists, HTTP Caching rules, and Field Deprecation entries (and other features, provided by extensions) to be applied on the endpoint.

We define permissions to access every field and directive in the schema through Access Control Lists. Shipped in the plugin are the following rules:

New custom rules can be added, such as:

When access to some a field or directive is denied, there are 2 ways for the API to behave:

Because it sends the queries via POST, GraphQL is normally not cacheable on the server-side or intermediate stages between the client and the server, such as a CDN.

However, persisted queries can be accessed via GET, hence we can cache their response.

The max-age value is defined on a field and directive-basis. The response will send a Cache-Control header with the lowest max-age value from all the requested fields and directives, or no-store if either any field or directive has max-age: 0, or if access control must check the user state for any field or directive.

The plugin provides a user interface to deprecate fields, and indicate how they must be replaced.

Persisted queries (and also custom endpoints) can declare a parent persisted query, from which it can inherit its properties: Its schema configuration and its GraphQL query.

Inheritance is useful for creating a hierarchy of API endpoints, such as:

/graphql-query/posts/mobile-app//graphql-query/posts/website/In this hierarchy, we are able to define the query only on the parent posts persisted query, and then each child persisted query, mobile-app and website, will obtain the query from the parent, and define only its schema configuration (as to set the custom access control rules, HTTP caching and deprecated fields) for each application.

Likewise, we can declare the configuration at the parent level, and then all children implement only the GraphQL query.

/graphql-query/mobile-app/posts//graphql-query/mobile-app/users//graphql-query/website/posts//graphql-query/website/users/Children queries can override variables defined in the parent query. For instance, we can generate this structure:

/graphql-query/posts/english//graphql-query/posts/french/The GraphQL query in posts can have variable $lang, which is then set in each of the children queries with the value for the language: "en" and "fr".

The number of levels is unlimited, so we can also create:

/graphql-query/mobile-app/posts/english//graphql-query/mobile-app/posts/french/

When different plugins use the same name for a type or interface, there will be a conflict in the schema. Whenever this happens, enabling schema namespacing will fix the problem, since it prepends all types and interfaces with their namespace.

For instance, if WooCommerce and Easy Digital Downloads both implement a type Product, there there will be a conflict. With namespacing enabled, these types become Automattic_WooCommerce_Product and SandhillsDevelopment_EDD_Product, and the conflict is resolved.

Here a response to some questions I've received:

In theory yes, but since I've just launched the plugin, you'd better test if for some time to make sure there are no issues.

Update 04/02: the plugin is now scoped! So the issue below does not apply anymore 🥳

In addition, please be aware that the GraphQL API has a dependency on a few 3rd-party PHP packages, which must be scoped to avoid potential problems with a different version of the same package being used by another plugin in the site, but the scoping must yet be done.

Hence, test the plugin in your development environment first, and with all other plugins also activated. If you run into any trouble, please create an issue.

Yes, you can, because the GraphQL API for WordPress is extensible, supporting integration with any plugin. But, this integration must still be done!

If there is any plugin you need support for, and you're willing to do the implementation (i.e. creating the corresponding types and resolvers for the fields), please be welcome to create an issue and I will help.

In theory yes, it is doable, but I don't know why you'd want to do that: Jason Bahl, the creator of WPGraphQL, works for Gatsby, so relying on WPGraphQL is clearly the way to go.

Hopefully, everyone! Even though GraphQL involves technical concepts, I've worked hard to make the plugin as easy-to-use as possible.

Following the ethos from WordPress, this plugin attempts to allow anyone, i.e. bloggers, designers, marketers, salesmen, and everyone else, to be able to create an API in a simple way:

Also, because the single endpoint is disabled by default, the risk of unintentionally exposing sensitive data is minimal.

You can, but you will need to rewrite your existing GraphQL queries, because the shape of the schema provided by both plugins is different.

For instance, some differences are:

postTags instead of tagswhere argument for the posts field, handled differently in GraphQL APIUpdate 04/02: the plugin has guides on how to use it, and has been scoped! So the issues below do not apply anymore 🥳

GraphQL API is stable and, I'd dare say, ready for production (that is, after playing with it in development). But some things are not complete yet:

When these two issues are resolved, I may already decide to publish the GraphQL API plugin to the WordPress plugin repository, depending on the feedback I have received by then.

Moving forward, the schema must be completed to cover all WordPress entities, including:

Finally, GraphQL API does not currently support mutations. It must also be implemented.

WordPress is the most popular CMS in the world, because it makes it easy to anyone to create and publish content. It provides a great user experience.

GraphQL is steadily becoming the most popular API solution, because it makes it easy to access the data from a website. It provides a great developer experience.

I believe that the GraphQL API for WordPress can succeed to integrate these 2 together, combining their characteristics: to make it easy to anyone to provide access to their content.

This is, I believe, "democratizing data publishing".

If you like what you've seen, please:

🙏 Try it out

🙏 Star it on GitHub

🙏 Share it with your friends and colleagues

🙏 Talk about it (please do! I have no deep-pockets to promote it, I depend on word of mouth)

And please, give me feedback about your experience, either good or bad. If you enjoyed it and found it useful, please let me know. If you think that something can be improved, let me know. If something didn't work, or something else broke in the site, let me know. Be welcome to create an issue on the repo.

Thanks for reading!

]]>Essentials for building your first Gutenberg block

This article gives a few tips for starting a new Gutenberg project, as I discovered them in my own journey. It's mainly useful for newbies, who either haven't started yet, or have recently begun and are navigating uncharted waters.

As always, I hope you enjoy it!

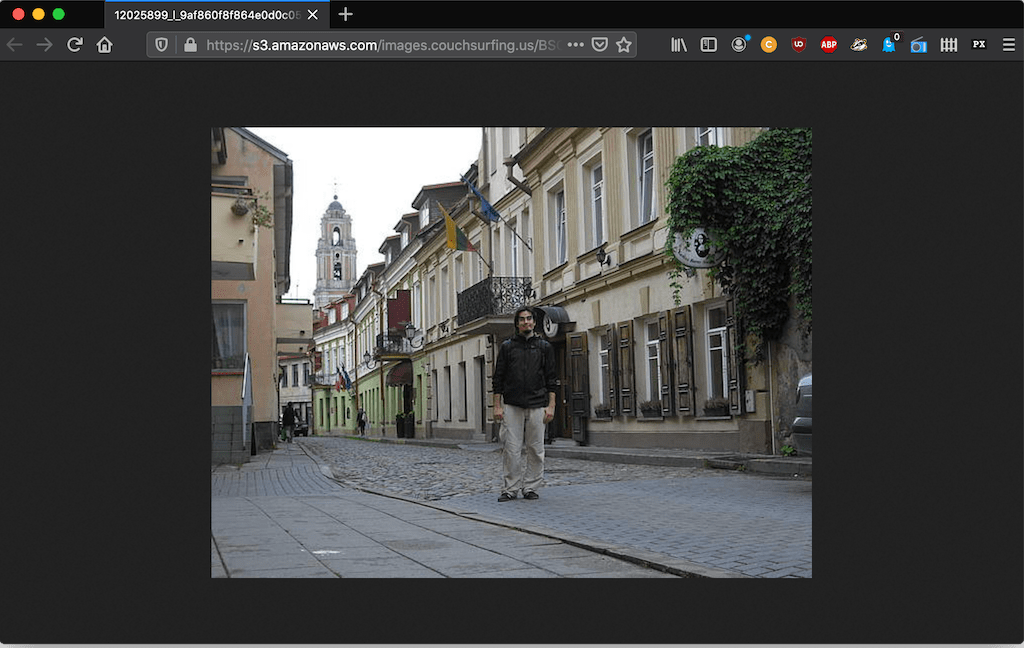

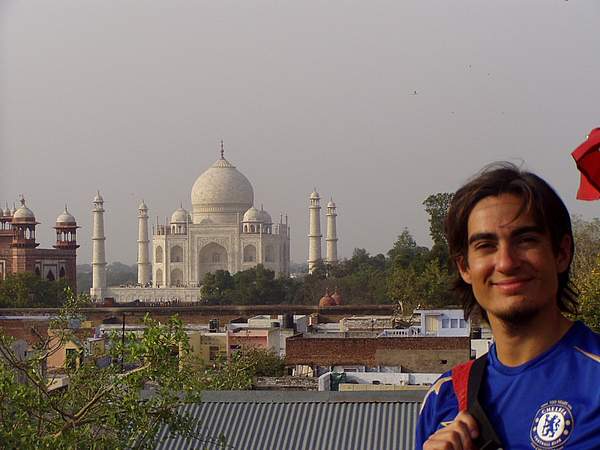

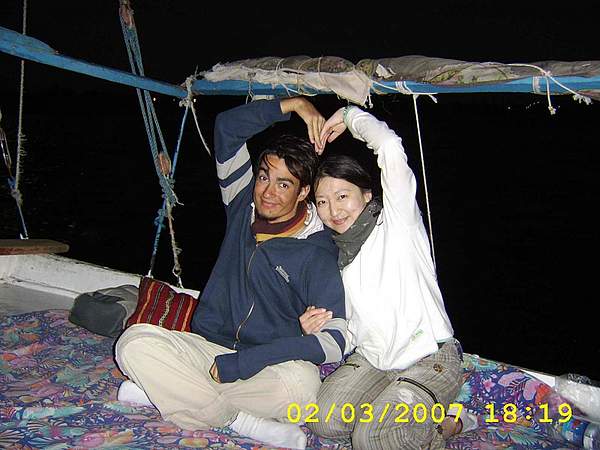

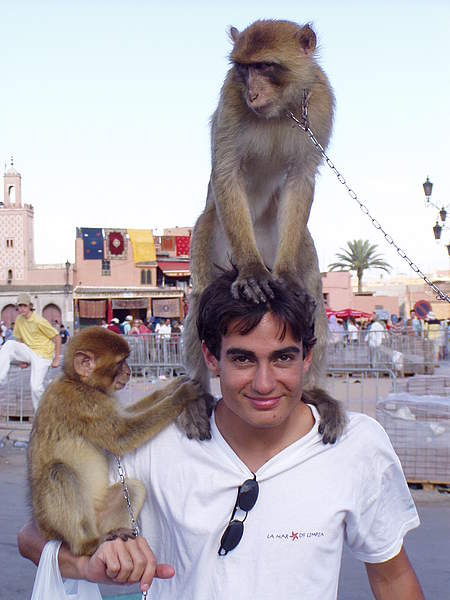

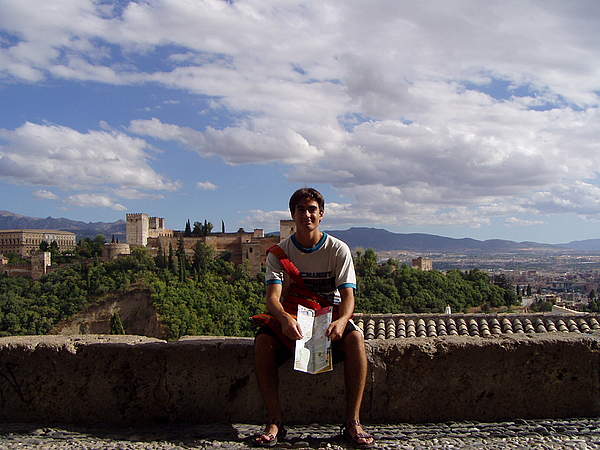

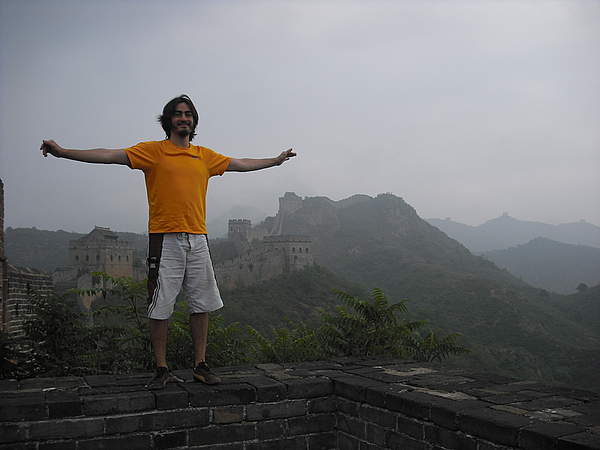

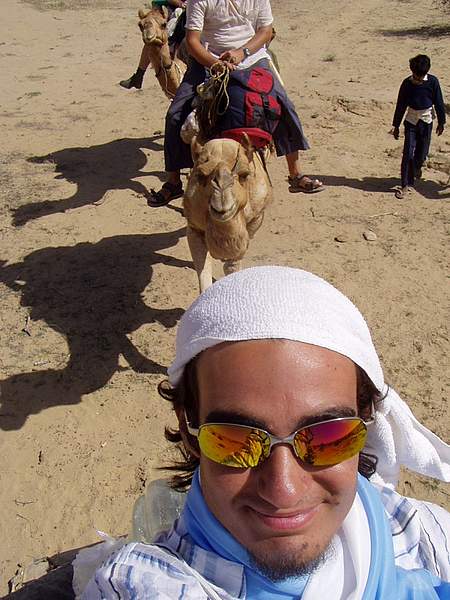

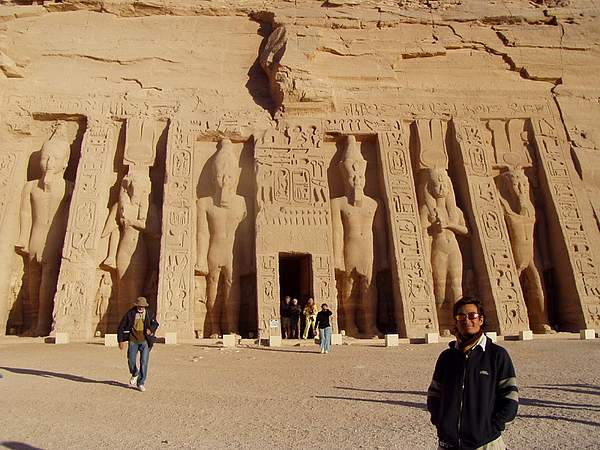

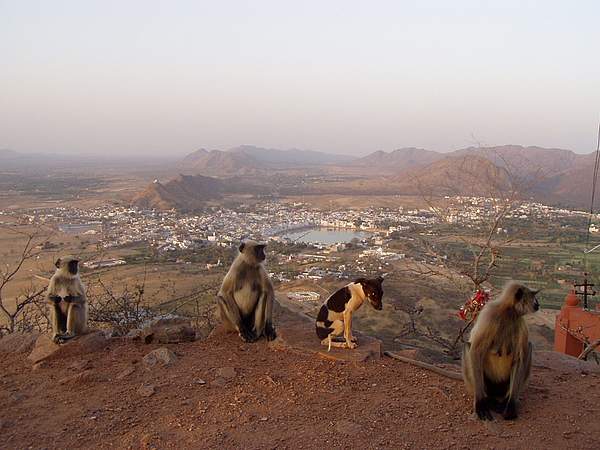

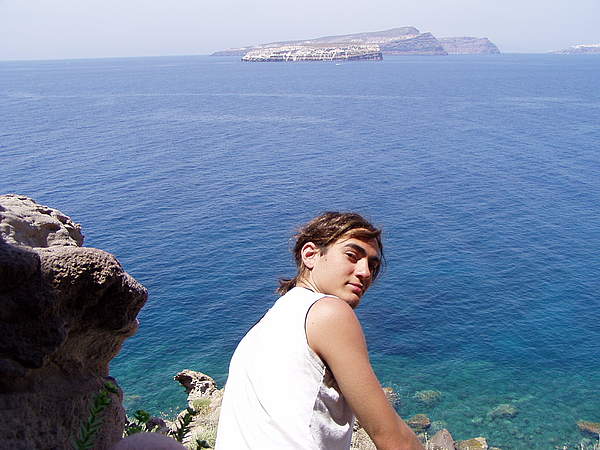

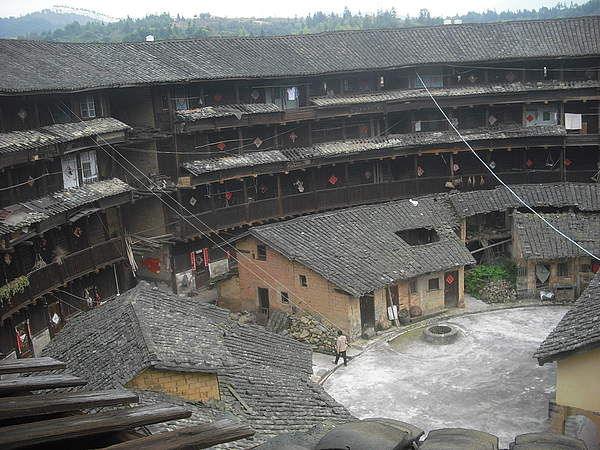

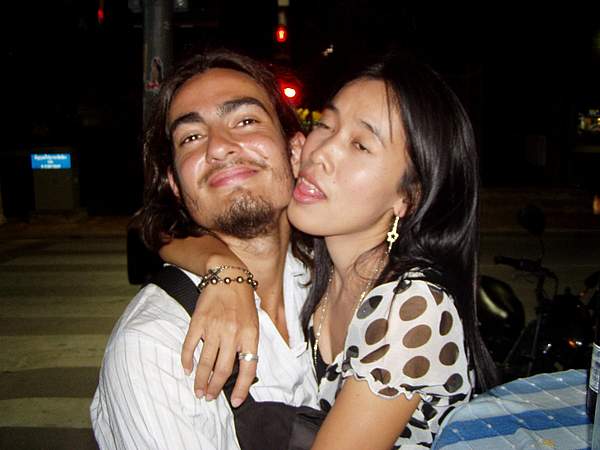

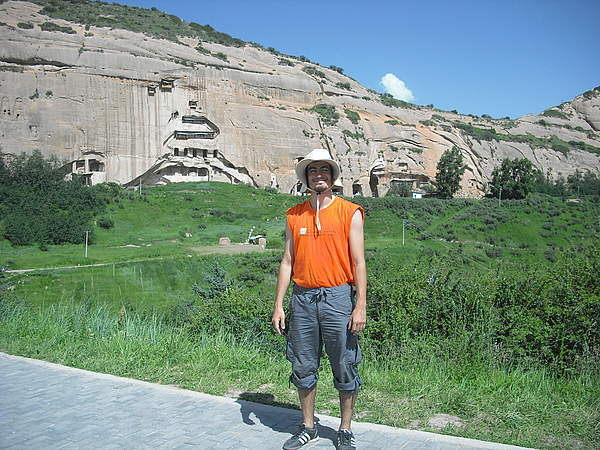

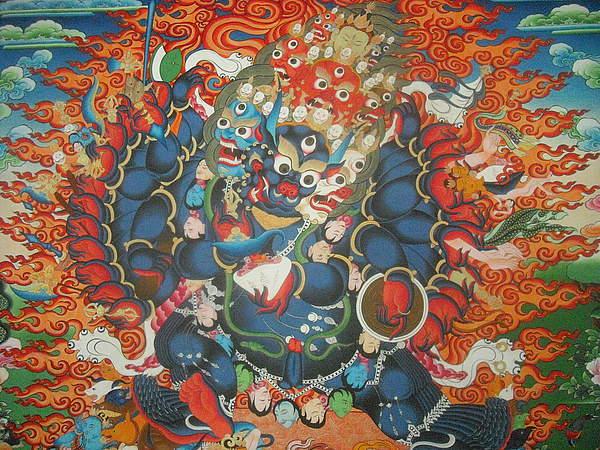

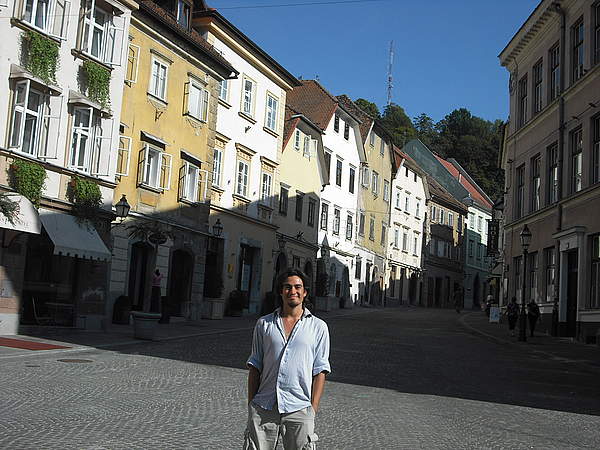

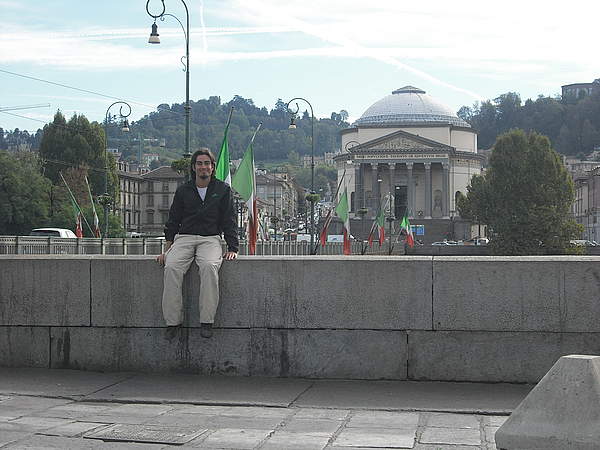

]]>Here they are, the images of some of my travels, when I was young and energetic (I was even smiling in many of those pics!):

I have the impression than most WordPress developers are in the same situation. So I've been compiling my aha moments, and wrote a couple of articles for the LogRocket blog with my tips.

I just had the first article published:

👉🏻 Setting up your first Gutenberg project

The second part will come next week.

I hope you enjoy it!

]]>I just got the data: the images are resized down (they don't have the original dimensions I uploaded them with), and the data is a dull .json file, which does not reflect any of the personality, feelings or enjoyment that this same piece of data had in the site.

It sucks.

But at least, because the CS reps didn't know how to satisfy my requirements for my own data, they gave me temporary access to the site. So I could log in a final time, and took a few screenshots of my profile in the site.

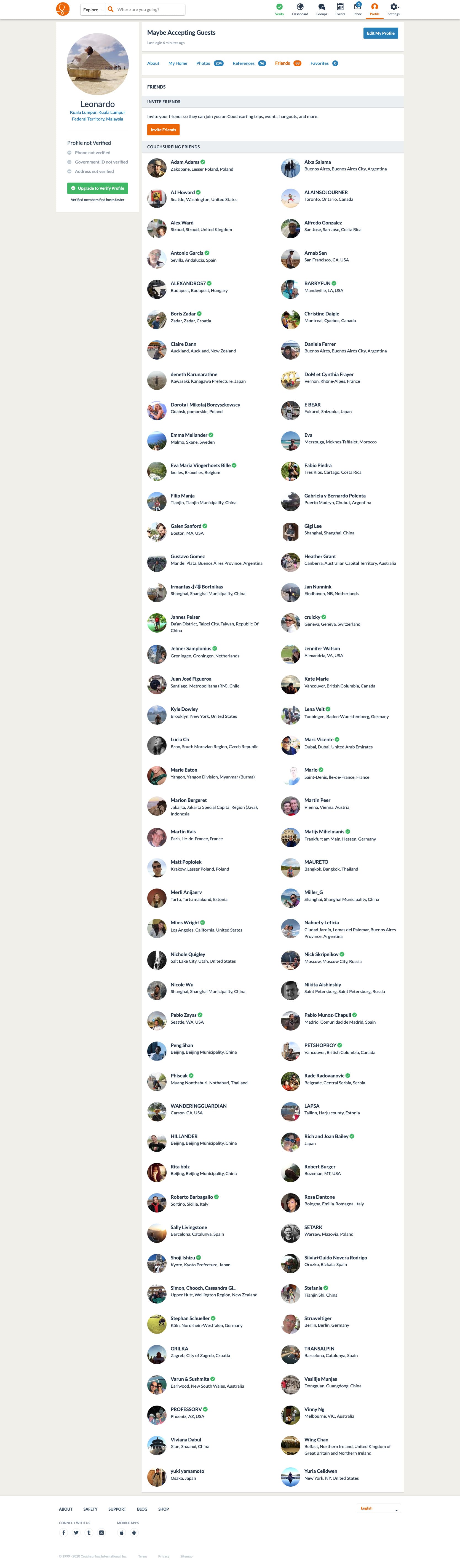

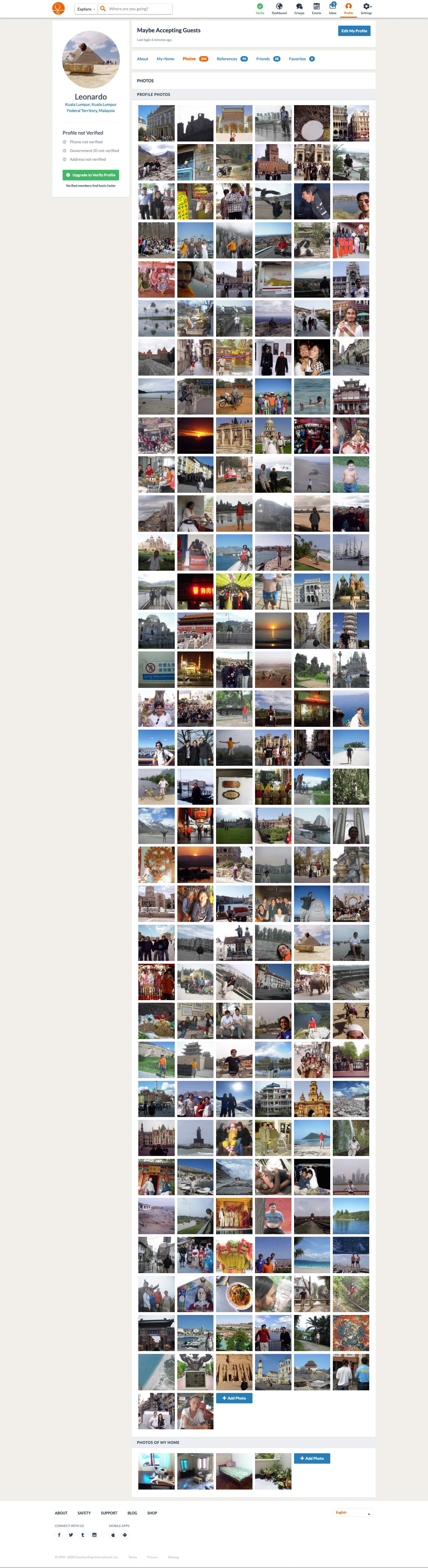

These are the last glimpses of my CouchSurfing activity, after being a member for 14 years!

My CouchSurfing friends (open big):

My CouchSurfing photos (open big):

My references from surfers (open big):

My references from hosts (open big):

My personal references (open big):

I met many friends. I met my wife. Those were good times.

But that's no more. Couchsurfing (with a lowercase s) is dead. Today I logged-in to the website, and wherever I go, I can only access this screen:

Mind you, if you're logged in, my profile is still available:

But if I click on "Edit my profile", I can't do anything, I can't change my status to "Not hosting". I'm presented the contribution screen.

So I'm being held hostage to access my own data. These guys managing the site are effectively putting a ransom on my own data. To say that this is extremely f*cked up is an understatement.

They say they hear us:

- Aren’t you holding my data hostage?

Nope. We understand some of you feel we are keeping your profiles “hostage” or require that you pay a “ransom”, and apologize for this. This was not the intention we had in trying to rally the community around saving Couchsurfing. We remain compliant with all privacy regulations. As has always been the case, you can ask for a copy of your data and to delete your account by contacting Couchsurfing Support at support@couchsurfing.com or through privacy@couchsurfing.com.

Yeah. Bullshit. Bullshit bullshit bullshit. I can't access my data in the website, I can't edit it, yet my profile is still publicly available.

Aren't these guys liable to be sued through GDPR? I hope they are, and I hope somebody punishes them. CouchSurfing (with uppercase S) was such a beautiful project. Until it was sold out to transform it into a business for personal profit, never mind the website was a community project